Media Authenticity Explained: Spot Real vs Fake Content

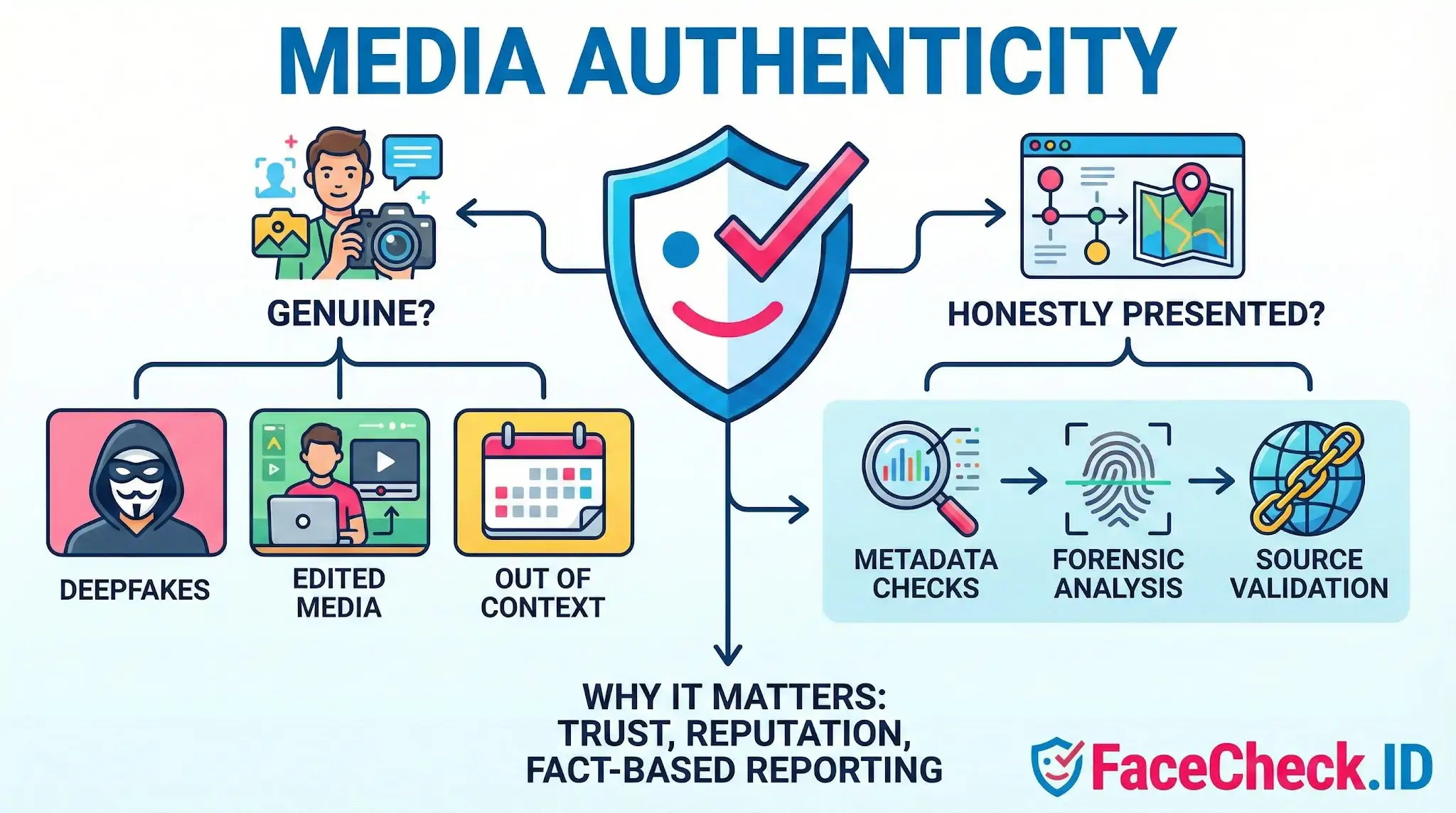

Media authenticity means that a piece of media, such as a photo, video, audio clip, or document, is genuine, honestly presented, and trustworthy. Authentic media reflects what it claims to show, without deceptive edits, misleading context, or false attribution.

Media authenticity focuses on two core questions:

- Is the media genuine? Was it created by the stated source or captured from the real event?

- Is the media presented honestly? Is it shown with correct context, timing, and meaning?

Why media authenticity matters

Media authenticity helps people and organizations:

- Prevent misinformation and manipulation

- Protect brand reputation and public trust

- Support reliable journalism and fact-based reporting

- Reduce fraud in advertising, e-commerce, and customer support

- Meet compliance needs in sensitive industries like finance, healthcare, and government

With AI-generated content and deepfakes becoming common, verifying authenticity is now a standard requirement in many workflows.

Common threats to media authenticity

Media can lose authenticity in several ways, including:

- Deepfakes and synthetic media that imitate real people or events

- Edited media that changes meaning, like removing elements or altering audio

- Out-of-context media, such as an old video reposted as new

- Misattribution where the creator, location, or source is falsely claimed

- Compression and reposting artifacts that hide traces of manipulation, making review harder

How media authenticity is assessed

Verification often combines technical checks with human review. Common methods include:

Metadata and provenance checks

Reviewing embedded data such as time, device, location, and file history. Some systems also track provenance, meaning a record of where the media came from and how it changed over time.
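To make "a record of where the media came from and how it changed" concrete, here is a minimal sketch of a hash-chained provenance log. Each history entry stores the SHA-256 digest of the entry before it, so rewriting any earlier step breaks the link to every later one. The entry fields (`action`, `tool`, `content_hash`, `prev`) and the function names are hypothetical. Real provenance systems, including content credentials, define their own formats and also sign the records.

```python
import hashlib
import json


def entry_digest(entry: dict) -> str:
    """Hash one log entry deterministically (sorted keys, compact JSON)."""
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(log: list, action: str, tool: str, content: bytes) -> None:
    """Append a history step that points at the digest of the previous entry."""
    prev = entry_digest(log[-1]) if log else None
    log.append({
        "action": action,  # hypothetical field, e.g. "capture" or "crop"
        "tool": tool,      # hypothetical field naming the device or app
        "content_hash": hashlib.sha256(content).hexdigest(),  # file state after this step
        "prev": prev,      # None only for the first (capture) entry
    })


def chain_is_intact(log: list) -> bool:
    """True if the log is non-empty and every link still matches its predecessor."""
    if not log or log[0]["prev"] is not None:
        return False
    return all(log[i]["prev"] == entry_digest(log[i - 1]) for i in range(1, len(log)))


if __name__ == "__main__":
    history = []
    append_entry(history, "capture", "phone-camera", b"original pixels")
    append_entry(history, "crop", "photo-editor", b"cropped pixels")
    print(chain_is_intact(history))         # True
    history[0]["tool"] = "different-camera"  # quietly rewrite an earlier step
    print(chain_is_intact(history))         # False
```

A bare hash chain only detects inconsistent edits. Someone who rewrites the log can recompute every digest, which is why production systems add signatures on top of a structure like this.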

Forensic analysis

Looking for signs of tampering, such as:

- unusual lighting or shadows

- inconsistent reflections

- audio glitches or unnatural speech patterns

- pixel-level anomalies left by editing tools

Source and context validation

Confirming the original uploader, comparing against trusted sources, and matching the media to known events, locations, and timelines.
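Matching media to a known event often comes down to two checks: does the capture time fall inside the event window, and were the capture coordinates near the event location? The sketch below assumes the timestamp and GPS values were already read from the file's metadata. The function name, the 5 km radius and the one-hour slack are hypothetical choices. Metadata can be stripped or forged, so passing these checks is supporting evidence, not proof.

```python
import math
from datetime import datetime, timedelta

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two latitude/longitude points in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def matches_claimed_event(captured_at: datetime, lat: float, lon: float,
                          event_start: datetime, event_end: datetime,
                          event_lat: float, event_lon: float,
                          max_km: float = 5.0,
                          slack: timedelta = timedelta(hours=1)) -> dict:
    """Compare capture metadata with the event a post claims to show (illustrative thresholds)."""
    time_ok = event_start - slack <= captured_at <= event_end + slack
    distance = haversine_km(lat, lon, event_lat, event_lon)
    return {"time_ok": time_ok, "place_ok": distance <= max_km, "distance_km": round(distance, 2)}


if __name__ == "__main__":
    # Hypothetical values: metadata from the file vs. the event described in the caption
    print(matches_claimed_event(
        captured_at=datetime(2024, 3, 9, 14, 20), lat=48.8570, lon=2.3510,
        event_start=datetime(2024, 3, 9, 13, 0), event_end=datetime(2024, 3, 9, 16, 0),
        event_lat=48.8566, event_lon=2.3522,
    ))
```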

Cryptographic signing and content credentials

Some media can be signed at capture or export so changes are detectable. Content credentials can help viewers see creation and editing history when supported by platforms and tools.
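The property that matters here is that any change to signed bytes makes verification fail. Real content credentials use public-key signatures backed by certificates, so anyone can verify them without holding a secret. The sketch below substitutes HMAC-SHA256 from Python's standard library only to stay self-contained. The key and the function names are hypothetical.

```python
import hashlib
import hmac


def sign_media(key: bytes, media: bytes) -> str:
    """Compute a tag over the exact file bytes at capture or export time."""
    return hmac.new(key, media, hashlib.sha256).hexdigest()


def verify_media(key: bytes, media: bytes, tag: str) -> bool:
    """Recompute the tag and compare it in constant time."""
    return hmac.compare_digest(sign_media(key, media), tag)


if __name__ == "__main__":
    key = b"demo-key-not-for-production"  # hypothetical shared secret
    original = b"\xff\xd8 example image bytes"
    tag = sign_media(key, original)
    print(verify_media(key, original, tag))            # True
    print(verify_media(key, original + b"\x00", tag))  # False: one extra byte
```

A byte-level check like this also fails after harmless re-compression or resizing, because those change the bytes too. That is one reason content credentials record edit history rather than relying on a single file signature.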

Media authenticity vs related concepts

- Authenticity vs accuracy: Authentic media can still be inaccurate if it is real but misinterpreted or incomplete.

- Authenticity vs integrity: Integrity focuses on whether the file was altered, while authenticity also includes correct attribution and context.

- Authenticity vs originality: A copy can be authentic if it is a faithful reproduction with clear sourcing.

Examples of media authenticity

- A smartphone video with verified location and timestamp that matches eyewitness reports is likely authentic.

- A photo that is real but reposted with the wrong date or event description is not authentically presented.

- A voice recording generated by AI to imitate a CEO is inauthentic, even if it sounds convincing.

FAQ

What does “Media Authenticity” mean in the context of face recognition search engines?

Media Authenticity refers to whether the photo or video frame you search, and the matching results you review, are genuine, unmanipulated representations of a real person and context. The alternative is media that is AI-generated, face-swapped, heavily edited, mislabeled, or taken from a different time or place than implied. In face recognition search, authenticity means validating both the source media and the claims people attach to it.

Why does Media Authenticity matter when using a face recognition search engine like FaceCheck.ID?

Face search tools typically match visual facial features; they do not check whether an image is truthful. A convincing deepfake, face swap, or mislabeled repost can produce highly plausible matches and lead you to the wrong conclusion about who is shown, where the image came from, or what it "proves." Treat results from FaceCheck.ID (or any face search engine) as investigative leads that require independent verification of the media and the linked pages.

What are common signs that a face-search query image or result page may be inauthentic or misleading?

Common red flags include: inconsistent facial details across versions of the same image (ear shape, teeth, moles), unnatural skin texture or lighting, distorted accessories (glasses/jewelry), mismatched reflections, warped backgrounds near the face, repeated “too perfect” portraits, abrupt changes in age/appearance across posts without explanation, and pages that reuse the same face across many unrelated names or stories. Also treat screenshots, memes, and repost aggregators as higher-risk sources than original uploads.
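The last red flag, one face reused under many unrelated names, can be checked mechanically once results are grouped. This sketch assumes each result has already been reduced to an image fingerprint, such as a perceptual hash computed elsewhere, plus the name the page attaches to it. The function name and the threshold of two names are hypothetical.

```python
from collections import defaultdict


def flag_reused_images(results, max_names=2):
    """Return fingerprints that appear under more than `max_names` distinct names.

    `results` is a list of (fingerprint, claimed_name) pairs; names are normalised
    to lowercase so trivial spelling variants are not counted twice.
    """
    names_by_image = defaultdict(set)
    for fingerprint, name in results:
        names_by_image[fingerprint].add(name.strip().lower())
    return {fp: sorted(names) for fp, names in names_by_image.items() if len(names) > max_names}


if __name__ == "__main__":
    results = [
        ("a1f3", "Jane Doe"), ("a1f3", "Maria Lopez"), ("a1f3", "Anna Smith"),
        ("b7c2", "John Roe"), ("b7c2", "john roe"),
    ]
    print(flag_reused_images(results))  # {'a1f3': ['anna smith', 'jane doe', 'maria lopez']}
```

A flagged image is only a prompt to look closer. Stock photos and viral images are also attached to many names without anyone impersonating a specific person.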

How can I evaluate Media Authenticity after I get face recognition search results?

Use a verification workflow: (1) open multiple top results and look for an original source (earliest post, creator profile, or primary publisher); (2) compare several images of the person from independent sources, not just reposts; (3) check context signals on the page (date, location, account history, captions, and whether other photos on the account match the same person); (4) cross-check with a traditional reverse image search for near-duplicate crops and repost trails; and (5) if stakes are high, assume manipulation is possible and seek corroboration beyond imagery (e.g., official statements, direct contact, or trusted records).
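Steps 1 and 4 can be combined into one rough check: find near-duplicate copies of the query image, then treat the earliest-dated one as the candidate original. The sketch below uses average hashing ("aHash"), one simple perceptual-hash technique. It is not the method used by FaceCheck.ID or any particular reverse image search engine. The following are all hypothetical placeholders: the input format (grayscale pixel grids from any image decoder), the 5-bit distance threshold, and the example URLs. aHash tolerates brightness changes and re-encoding but not heavy cropping.

```python
from datetime import date


def downscale(gray, size=8):
    """Block-average a 2D grayscale grid down to size x size."""
    h, w = len(gray), len(gray[0])
    out = []
    for r in range(size):
        r0 = r * h // size
        r1 = max((r + 1) * h // size, r0 + 1)
        row = []
        for c in range(size):
            c0 = c * w // size
            c1 = max((c + 1) * w // size, c0 + 1)
            block = [gray[y][x] for y in range(r0, r1) for x in range(c0, c1)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def average_hash(gray, size=8):
    """One bit per downscaled pixel: 1 if brighter than the mean."""
    pixels = [p for row in downscale(gray, size) for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a, b):
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def earliest_near_duplicate(query_gray, candidates, max_distance=5):
    """candidates: (url, posted_date, gray_grid) tuples. Return the earliest close match's URL."""
    q = average_hash(query_gray)
    close = [(posted, url) for url, posted, gray in candidates
             if hamming(q, average_hash(gray)) <= max_distance]
    return min(close)[1] if close else None


if __name__ == "__main__":
    base = [[x * 15 + y for x in range(16)] for y in range(16)]
    brighter = [[p + 10 for p in row] for row in base]   # looks like a re-encoded repost
    inverted = [[255 - p for p in row] for row in base]  # a different image
    candidates = [
        ("https://example.com/repost", date(2024, 5, 2), brighter),
        ("https://example.org/original", date(2023, 11, 8), base),
        ("https://example.net/other", date(2022, 1, 1), inverted),
    ]
    print(earliest_near_duplicate(base, candidates))  # https://example.org/original
```

The earliest post found is only the earliest one *you* found. Older copies may exist elsewhere, so step 5 still applies when the stakes are high.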

Can authentic media still produce confusing face-search matches, and what should I do?

Yes. Even authentic photos can yield misleading matches because of look-alikes, low-quality images, extreme angles, makeup, lighting, or partial occlusion. If results are mixed, rerun the search with a clearer, front-facing image, avoid heavily filtered screenshots, and prioritize matches that show consistent identity signals across multiple independent pages. Treat similarity scores from FaceCheck.ID or comparable tools as a starting point, then confirm that the same identity appears consistently across different sources and dates.
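"Consistent across different sources and dates" can be approximated by counting, for each claimed identity, how many distinct domains and distinct posting dates support it. This sketch assumes each match is a (claimed name, page URL, ISO date) tuple. The function name and the ranking rule are hypothetical. Counting domains is crude: mirrors and aggregators on different domains still count as separate, and subdomains of one site are treated as distinct.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def corroboration_scores(matches):
    """Rank claimed identities by distinct supporting domains, then distinct dates.

    `matches` is a list of (claimed_name, page_url, posted_iso_date) tuples.
    Returns (normalised_name, domain_count, date_count) tuples, best-supported first.
    """
    domains = defaultdict(set)
    dates = defaultdict(set)
    for name, url, posted in matches:
        key = name.strip().lower()
        host = (urlsplit(url).hostname or "").removeprefix("www.")  # Python 3.9+
        domains[key].add(host)
        dates[key].add(posted)
    ranked = sorted(domains, key=lambda k: (len(domains[k]), len(dates[k])), reverse=True)
    return [(k, len(domains[k]), len(dates[k])) for k in ranked]


if __name__ == "__main__":
    matches = [
        ("Jane Doe", "https://news.example.com/a", "2021-03-04"),
        ("Jane Doe", "https://www.example.org/profile", "2022-07-19"),
        ("Jane Doe", "https://blog.example.net/post", "2023-01-02"),
        ("J. Smith", "https://aggregator.example/x1", "2024-02-10"),
        ("J. Smith", "https://aggregator.example/x2", "2024-02-10"),
    ]
    print(corroboration_scores(matches))  # [('jane doe', 3, 3), ('j. smith', 1, 1)]
```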