AI Interview Fraud: What It Is & Warning Signs

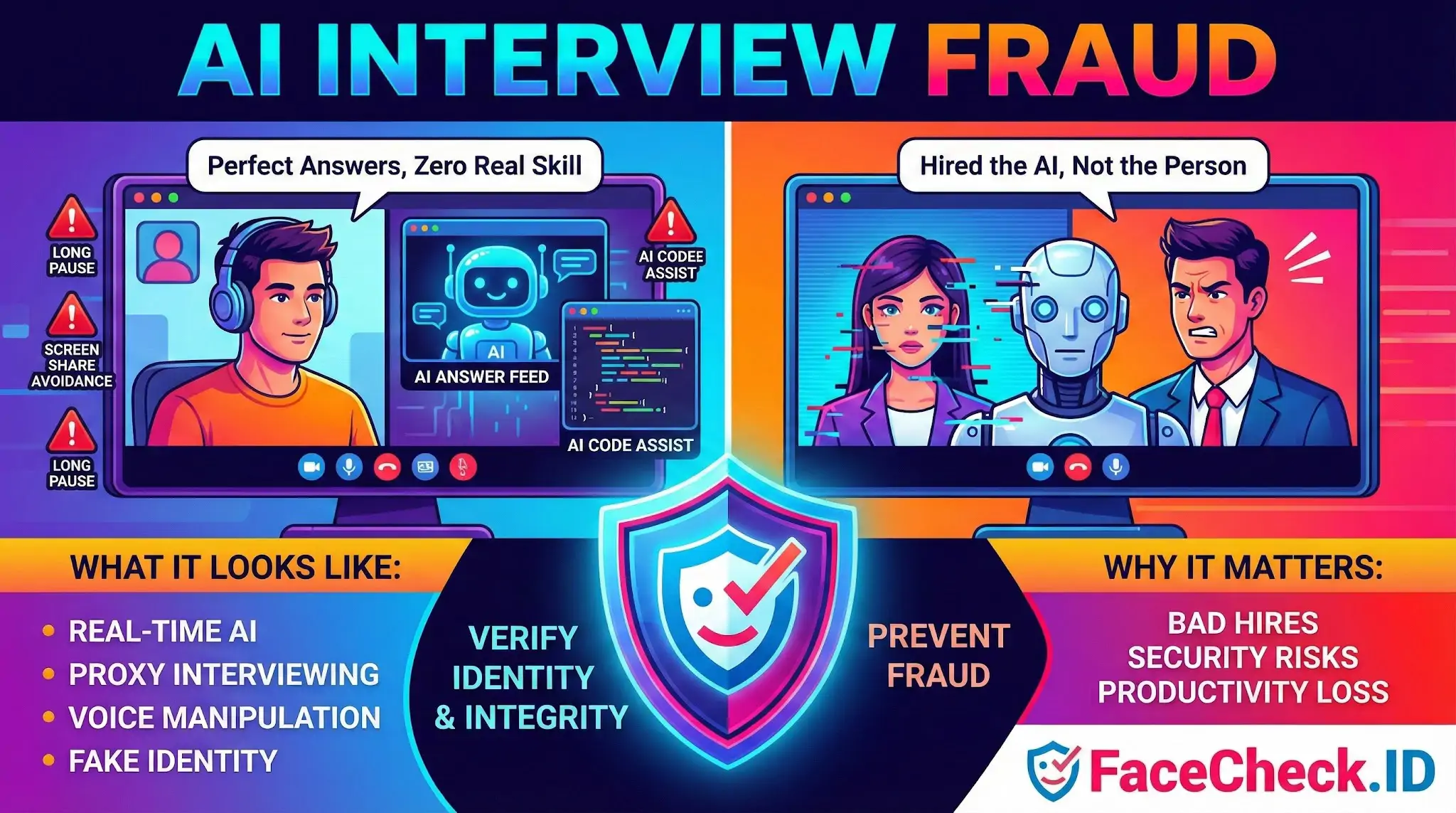

AI interview fraud is the use of artificial intelligence tools to cheat during a job interview so that a candidate appears more qualified than they really are. It can happen in live video interviews, phone screens, technical interviews, and online assessments. The goal is usually to get hired by misrepresenting identity, skills, experience, or real-time problem-solving ability.

What it looks like in practice

AI interview fraud can take many forms, including:

- Real-time answer generation: Using an AI chatbot to produce responses to behavioral, situational, or technical questions as they are asked.

- Coding interview assistance: Using AI to write or fix code during a live coding task, take-home test, or timed assessment.

- Hidden prompt feeds: Having interview questions transcribed or copied into an AI tool, then reading the generated answer.

- Voice and video manipulation: Using AI voice cloning, audio filters, or deepfake video to disguise the speaker or impersonate someone else.

- Proxy interviewing: Another person attends the interview while AI helps them mimic the candidate’s resume and background.

- Identity mismatches: Using AI-edited documents, headshots, or profiles to support a fake identity.

- Screen-sharing tricks: Avoiding screen share, using secondary devices, or positioning windows to hide AI usage.

Why it matters

AI interview fraud creates real risk for employers and teams:

- Bad hires: The person hired may not be able to do the work they claimed they could do.

- Security exposure: Fraudulent hires may gain access to systems, customer data, or proprietary code.

- Compliance issues: Regulated industries can face audit and legal problems if hiring controls are weak.

- Team productivity loss: Managers and peers spend time compensating for missing skills or retraining from scratch.

- Reputation damage: Clients and stakeholders may lose trust if performance collapses after hiring.

Common targets

AI interview fraud is most common in roles where remote interviewing is standard and skills are hard to verify quickly, such as:

- Software engineering and QA

- Data science and analytics

- DevOps and cloud roles

- Customer support and sales development

- Finance operations and back office roles

Signals that may indicate AI interview fraud

A single signal does not prove fraud, but patterns can raise concern:

- Answers sound polished but do not hold up under follow-up questions

- Long pauses followed by unusually perfect responses

- Inconsistent skill level between conversation and hands-on tasks

- Candidate avoids screen sharing or insists on audio-only calls

- Eye movement suggests the candidate is reading from another screen on every question

- Sudden changes in voice clarity, tone, or background noise

- Candidate cannot explain their own past projects in detail
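One way to apply the "patterns, not single signals" rule is to count how many distinct signals an interviewer recorded and escalate only when several appear together. The sketch below is a minimal illustration in Python. The signal names, the `review_recommendation` function, and the threshold of three are assumptions chosen for the example, not an established standard.

```python
# Hypothetical labels for the signals listed above.
KNOWN_SIGNALS = frozenset({
    "polished_but_fails_follow_up",
    "long_pauses",
    "skill_mismatch",
    "avoids_screen_share",
    "reading_eye_movement",
    "voice_or_audio_changes",
    "cannot_explain_projects",
})


def review_recommendation(observed: set[str], threshold: int = 3) -> str:
    """Map a set of observed signals to a suggested next step.

    A single signal is only noted. Escalation happens when at least
    `threshold` distinct signals appear together (assumed value).
    """
    unknown = observed - KNOWN_SIGNALS
    if unknown:
        raise ValueError(f"Unknown signals: {sorted(unknown)}")

    count = len(observed)
    if count == 0:
        return "no_action"
    if count < threshold:
        return "note_only"
    # Several signals together justify more verification, not a verdict.
    return "follow_up_verification"
```

Even the highest outcome here is a prompt for further verification, such as a live follow-up task or an identity check, and not a finding of fraud.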

How organizations reduce risk

Common prevention and detection methods include:

- Identity verification: Government ID checks, liveness checks, and consistent profile validation.

- Structured interviews: Consistent questions with deeper probing to test understanding.

- Skill validation: Pair programming, live problem solving, work sample reviews, and realistic role tasks.

- Proctored assessments: Secure browsers, monitoring, and plagiarism detection for take-home work.

- Tool policies: Clear rules on whether AI tools are allowed, and what is considered cheating.

- Post-hire verification: Early performance checkpoints and probation-period evaluations.

Ethical use vs fraud

Using AI is not always fraudulent. It becomes fraud when a candidate uses AI to misrepresent their identity or true ability in a way that violates interview rules or employer expectations. Some companies allow limited AI use, for example for accessibility support or for take-home tasks, as long as it is disclosed and the candidate can explain and defend the work.

Related concepts

AI interview fraud is closely linked to hiring integrity, remote recruitment security, and AI-assisted cheating. It overlaps with identity fraud, deepfake scams, and assessment plagiarism.

FAQ

What is “AI Interview Fraud” in the context of face recognition search engines?

“AI Interview Fraud” typically refers to using AI (e.g., deepfakes, face-swaps, or synthetic personas) to misrepresent who is attending a video interview. Face recognition search engines can be used as a due-diligence step to check whether a candidate’s face appears online under multiple conflicting identities or in known impersonation contexts. Results should be treated as investigative leads, not proof.

How can face recognition search engines help detect possible AI Interview Fraud without proving it?

They can help you look for signals of photo reuse or identity inconsistency, such as the same face appearing across many unrelated profiles, under different names, or in suspiciously repetitive “stock-like” headshots. If you see mismatches, follow up with safer verification steps (e.g., confirm the candidate controls their claimed accounts, use secure identity verification processes, and document findings carefully).

What face-search result patterns are common red flags for AI Interview Fraud (without assuming guilt)?

Common higher-risk patterns include: (1) one face tied to multiple names/regions/job histories, (2) the face showing up on many newly created or low-trust sites, (3) matches that look “near-identical” but come from heavily edited or AI-enhanced images, and (4) clusters of profiles that reuse the same photos with small crops/filters. Any single pattern can have innocent explanations, so corroborate before acting.
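Pattern (1), one face tied to multiple names, can be checked by grouping high-similarity matches by the name shown on each matched page. The following is a minimal sketch in Python. The `FaceMatch` record, its fields, the 0 to 100 score scale, and the `min_score` cutoff are all hypothetical and do not reflect any particular face-search tool's output format.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class FaceMatch:
    """Hypothetical record for one face-search hit."""
    url: str
    name: str      # name displayed on the matched page
    score: float   # similarity score on an assumed 0-100 scale


def names_for_face(matches: list[FaceMatch], min_score: float = 80.0) -> dict[str, list[str]]:
    """Group strong matches by normalized name.

    Returns a name -> URLs mapping only when more than one distinct
    name is attached to the face; otherwise returns an empty dict.
    """
    by_name: dict[str, list[str]] = defaultdict(list)
    for match in matches:
        if match.score < min_score:
            continue  # ignore weak matches (cutoff is an assumption)
        # Lowercase and collapse whitespace so "Jane  Doe" equals "jane doe".
        key = " ".join(match.name.lower().split())
        if key:
            by_name[key].append(match.url)
    return dict(by_name) if len(by_name) > 1 else {}
```

A non-empty result is a lead to corroborate, since name changes, nicknames, and reposted photos can all produce the same pattern innocently.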

If a candidate might be using a deepfake or face-swap, what images should I use for a face recognition search?

Use multiple clear frames: a straight-on neutral face, a frame with a different expression or angle, and (if available) a higher-resolution still from a well-lit moment. Avoid extreme motion blur, heavy compression, strong beauty filters, or wide-angle distortion. Comparing results across several frames can reduce overreliance on a single misleading screenshot.

How can FaceCheck.ID add value when investigating AI Interview Fraud, and what precautions should I take?

FaceCheck.ID (and similar tools) may help you quickly surface where a face appears on the open web, which can support impersonation checks (e.g., seeing the same face attached to different identities). Precautions: only upload images you have a lawful basis to process, minimize personal data exposure, treat results as non-definitive, and avoid making adverse decisions based solely on face-search results. Use them to trigger additional verification steps instead.