Voice Deepfake Explained: Risks, Uses & Detection

Definition

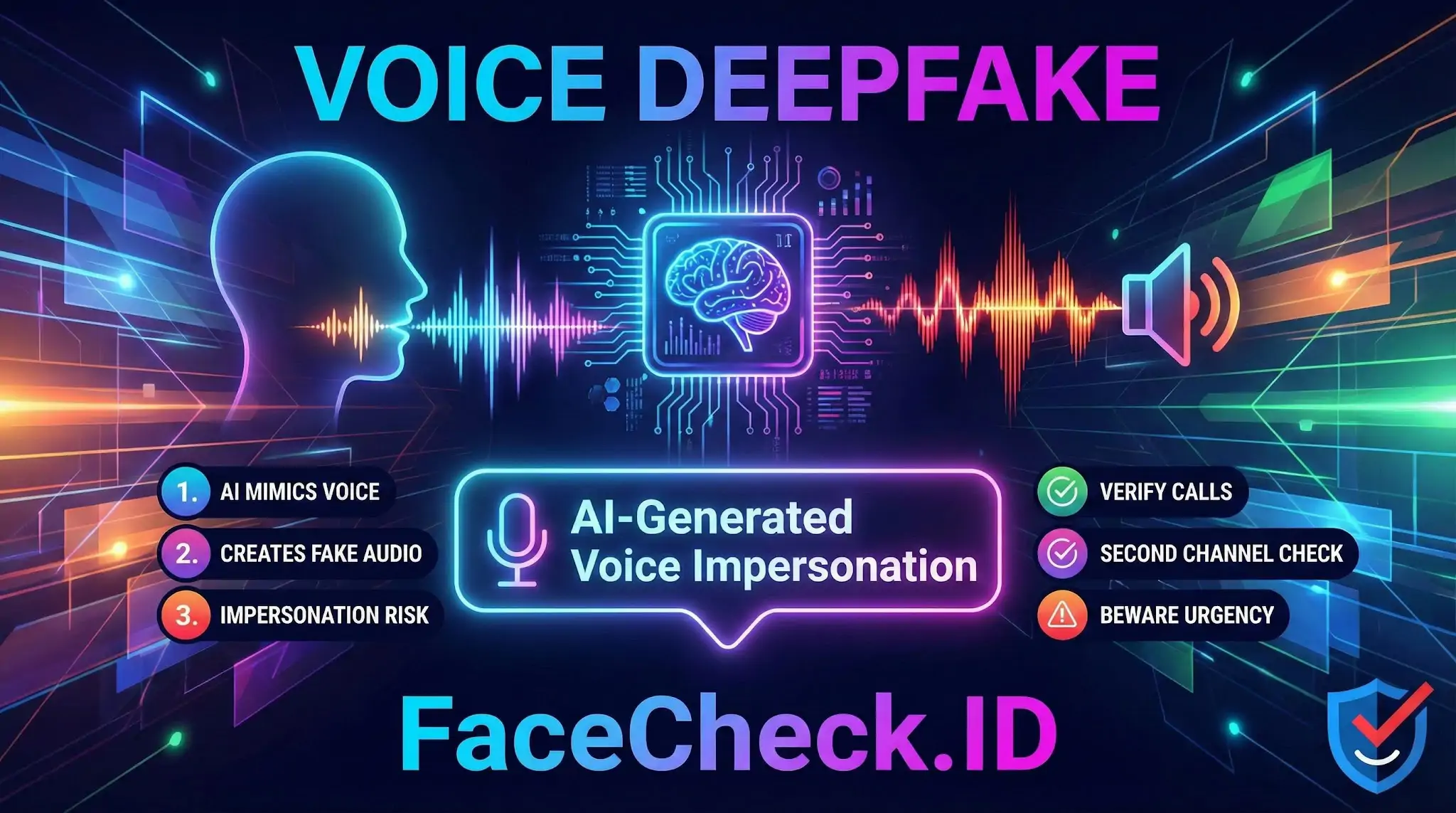

A voice deepfake is a computer-generated imitation of a real person’s voice. It uses artificial intelligence to copy how someone sounds, including tone, accent, pitch, and speaking style, so that the audio can convincingly say words the person never said.

How Voice Deepfakes Work

Voice deepfakes are typically created with a voice cloning model trained on audio recordings of a target speaker. After training, the system can synthesize new speech in the target speaker’s voice.

Common techniques include:

- Text-to-speech voice cloning, where typed text is converted into speech in the cloned voice

- Voice conversion, where one person’s voice is transformed to sound like another person while keeping the original wording and timing

- Generative deep learning models that learn speaker characteristics from short or long audio samples

Voice Deepfake vs Audio Editing

A voice deepfake is different from normal audio editing.

- Audio editing rearranges or cleans real recordings

- A voice deepfake produces new speech that may never have been recorded at all

- Deepfakes can be built from small samples, making them harder to spot than simple cut-and-paste edits

Common Uses

Voice deepfakes can be used for legitimate purposes, but they are also widely associated with fraud and misinformation.

Legitimate uses:

- Film, TV, and game production, such as dubbing or voice replacement

- Accessibility tools and personalized text-to-speech voices

- Restoring voices for people who have lost the ability to speak, when created with consent

Harmful uses:

- Impersonation scams targeting employees, customers, or family members

- Fake evidence or misleading audio shared online

- Harassment, reputational damage, and identity abuse

Risks and Security Concerns

Voice deepfakes can be used to bypass trust-based checks, especially when someone relies on voice recognition or informal verbal confirmation.

Key risks include:

- Social engineering attacks, such as fake calls from a boss or vendor requesting urgent payments

- Account takeover attempts when voice is used as a biometric factor

- Brand impersonation and fake customer support calls

- Misinformation campaigns using realistic-sounding clips

How to Detect a Voice Deepfake

Detection is improving, but high-quality deepfakes can be very convincing. Warning signs can include:

- Unnatural pacing, robotic rhythm, or odd emphasis on words

- Inconsistent background noise or room acoustics across a clip

- Missing breathing sounds or overly clean audio

- Strange pronunciation of names or uncommon words

- Requests that create urgency, secrecy, or pressure during a call

Because audio cues are not always reliable, verification steps matter more than listening for artifacts.
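One of the warning signs above, inconsistent background noise across a clip, can be approximated numerically. The following is a minimal Python sketch, not a deepfake detector. It assumes the clip has already been decoded into a list of float samples, and the function names, the 400-sample frame length, and the four-segment split are illustrative choices rather than an established method. It estimates a noise floor for each segment and reports how far those floors differ in decibels.

```python
import math
import random


def frame_rms(samples, frame_len):
    """Return the RMS level of each full, non-overlapping frame."""
    levels = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        levels.append(math.sqrt(sum(x * x for x in frame) / frame_len))
    return levels


def noise_floor(samples, frame_len=400):
    """Estimate the noise floor as the 10th-percentile frame RMS."""
    levels = sorted(frame_rms(samples, frame_len))
    if not levels:
        raise ValueError("clip is shorter than one frame")
    return levels[int(0.1 * (len(levels) - 1))]


def noise_floor_spread_db(samples, segments=4, frame_len=400):
    """Spread in dB between the highest and lowest per-segment noise floor."""
    seg_len = len(samples) // segments
    floors = [
        noise_floor(samples[i * seg_len:(i + 1) * seg_len], frame_len)
        for i in range(segments)
    ]
    eps = 1e-9  # avoids log(0) on digitally silent segments
    return 20 * math.log10((max(floors) + eps) / (min(floors) + eps))


# Synthetic stand-ins for real audio (assumption: noise only, no speech).
rng = random.Random(0)
steady = [rng.gauss(0, 0.01) for _ in range(16000)]
spliced = steady[:8000] + [rng.gauss(0, 0.0005) for _ in range(8000)]

print(f"steady:  {noise_floor_spread_db(steady):.1f} dB")
print(f"spliced: {noise_floor_spread_db(spliced):.1f} dB")
```

A large spread only shows that the background level changed partway through the clip. Genuine recordings can do that too, so the number is at most one weak signal alongside the verification steps described here.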

How to Protect Yourself and Your Organization

Practical safeguards include:

- Verify identity with a second channel, like a text message to a known number or an internal chat account

- Use approval workflows for payments and sensitive actions, not single-person voice confirmations

- Set a code word or callback policy for high-risk requests

- Limit how much high-quality voice content you publish publicly when possible

- Train teams on impersonation scams that use AI-generated audio
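The second-channel and approval-workflow safeguards above can be expressed as a simple policy check. The sketch below is illustrative only: the `Request` fields, the `HIGH_RISK_ACTIONS` set, and the two-approver minimum are hypothetical values chosen for the example, not a recommended standard. It blocks a high-risk request until it has been confirmed on a channel other than the one it arrived on and approved by more than one person.

```python
from dataclasses import dataclass, field

# Hypothetical policy values; a real organization would define its own.
HIGH_RISK_ACTIONS = {"payment", "bank_detail_change", "password_reset"}
MIN_APPROVERS = 2


@dataclass
class Request:
    action: str
    origin_channel: str  # channel the request arrived on, e.g. "voice"
    confirmed_on: set = field(default_factory=set)  # channels that confirmed it
    approvers: set = field(default_factory=set)     # people who approved it


def check_request(req):
    """Return (allowed, reasons) for a request under the sketch policy."""
    if req.action not in HIGH_RISK_ACTIONS:
        return True, []
    reasons = []
    # A confirmation on the originating channel does not count as a second channel.
    if not (req.confirmed_on - {req.origin_channel}):
        reasons.append("needs confirmation on a second channel")
    if len(req.approvers) < MIN_APPROVERS:
        reasons.append(f"needs at least {MIN_APPROVERS} approvers")
    return not reasons, reasons


call = Request("payment", origin_channel="voice", approvers={"alice"})
print(check_request(call))
# (False, ['needs confirmation on a second channel', 'needs at least 2 approvers'])

call.confirmed_on.add("internal_chat")
call.approvers.add("bob")
print(check_request(call))
# (True, [])
```

Keeping the rule in code or in a written checklist means a convincing voice alone can never satisfy it, which is the point of the safeguards listed above.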

Legal and Ethical Considerations

Creating a voice deepfake without permission can violate privacy and publicity rights, fraud laws, and platform policies, depending on the jurisdiction and the intent. Even when it is legal, ethical best practice requires clear consent, disclosure, and safeguards against misuse.

FAQ

What is a “Voice Deepfake” and how does it relate to face recognition search engines?

A voice deepfake is synthetic or altered audio (often created with AI) that imitates a real person’s voice. Face recognition search engines don’t match voices directly, but a voice deepfake often appears alongside video or profile content that includes a face, so face search can help you trace where the associated face images appear online and whether the media is tied to consistent sources or multiple identities.

Can a voice deepfake be used to “fool” a face recognition search engine into finding the wrong person?

Not by the audio alone: face recognition search engines generally rely on face images (photos, screenshots, video frames). However, voice deepfake scams frequently reuse stolen face photos or combine a real person’s face with manipulated media. If you upload a frame from a deepfake video or a heavily edited image, the search engine may return misleading matches (wrong-person results) because the face imagery itself may be synthetic, altered, or taken from someone else.

If I only have a phone call or voice note, can a face recognition search engine help identify the caller?

Not directly. Face recognition search requires a face image, not an audio clip. A practical approach is to look for any related visual artifacts (the profile photo used with the account, a video call screenshot, a shared selfie, or a video frame) and then run face search on that image to find where the same face appears online.

What image should I upload for best results if the situation involves a suspected voice deepfake (e.g., a scam call with a profile photo)?

Use the clearest, most front-facing still image of the person’s face that you can legally obtain (for example: the account’s profile photo, a clean screenshot from a video call, or a sharp video frame). Avoid images with heavy filters, strong compression, extreme angles, or large occlusions, because those can increase look-alike matches and make results harder to interpret.

How can FaceCheck.ID add value when investigating a possible voice deepfake scenario?

FaceCheck.ID (like other face recognition search tools) can help you check whether a face photo used in a voice deepfake context appears across multiple sites, usernames, or storylines. Such patterns may suggest photo reuse, impersonation, or a mixed identity trail. Treat matches as investigative leads (not proof of identity), and cross-check the linked pages, dates, and context before making any accusation or decision.