AI-Generated Content in Face Search

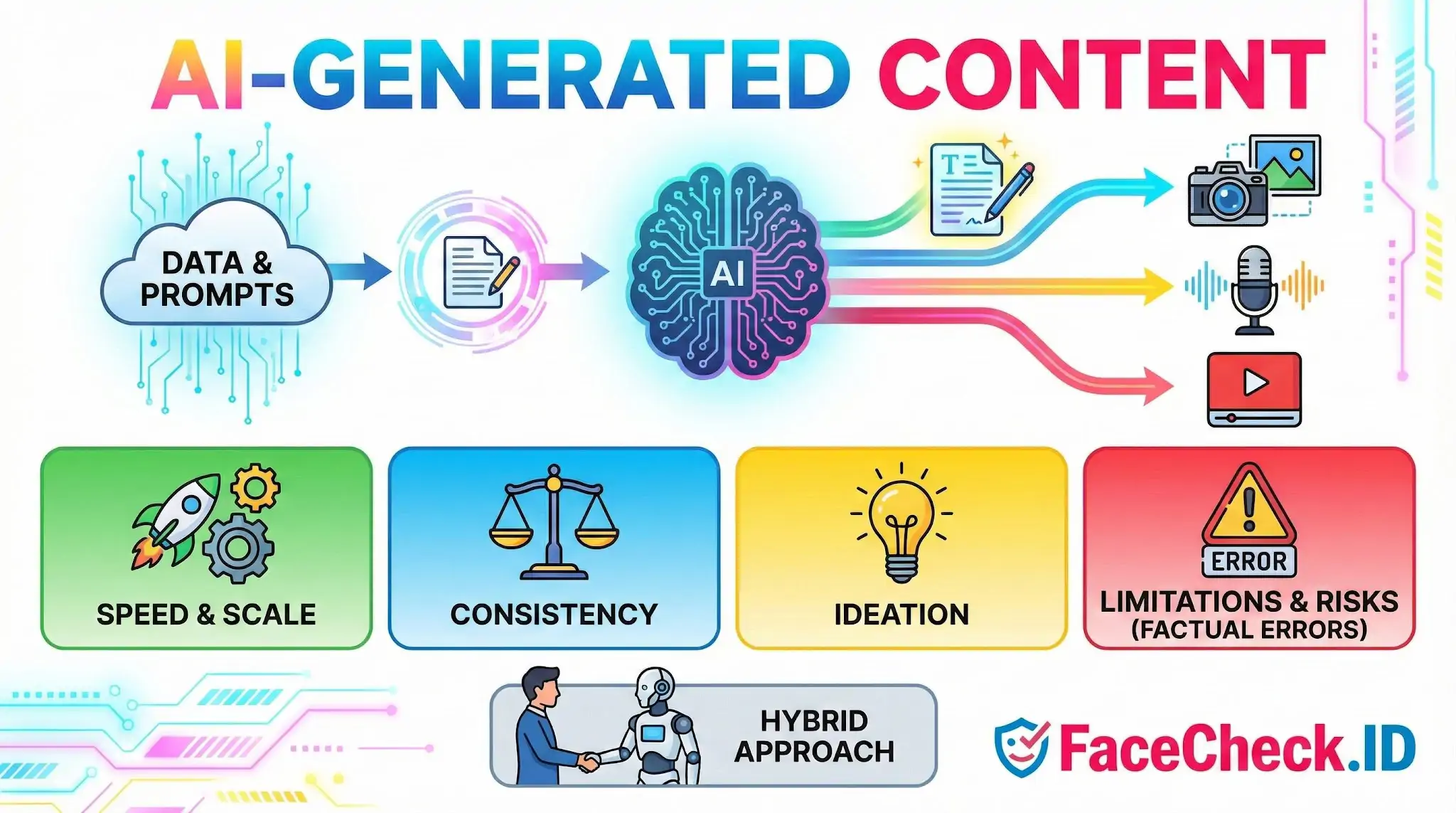

AI-generated content has reshaped what shows up in face-search results. Synthetic photos, deepfake profile pictures, and machine-written bios now appear alongside real images on dating sites, social profiles, and scam pages, which directly affects how face-recognition tools and the people reading their results interpret a match.

How AI-generated images change face search

A reverse face search works by finding pages where a face resembling the query image appears. When the indexed image is AI-generated, the underlying identity may not exist at all. GAN-based generators (the older "this person does not exist" style outputs) and newer diffusion models can produce faces that look photographically real but belong to no human being.

This matters in several ways:

- A match against a synthetic face does not point to a real person. It points to whoever published the synthetic image.

- The same generated face can be reused across dozens of fake profiles, so a single AI portrait may appear on a dating profile, a LinkedIn-style page, a crypto promotion, and a romance scam thread at once.

- Generated faces often have subtle artifacts: mismatched earrings, irregular pupils, soft hairlines, distorted backgrounds, or asymmetric glasses. Face-recognition embeddings ignore most of these, so the model can still produce a confident similarity score against a real person who happens to look like the synthetic output.

When an investigator sees a face-search hit, the question is no longer only "who is this?" but "is this image of a real person at all?"
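To make the point about embeddings concrete, here is a minimal sketch of how a similarity score is typically computed, assuming the tool compares face embeddings with cosine similarity (a common choice, not a confirmed detail of any particular engine). The vectors are hypothetical four-dimensional stand-ins; real embeddings have hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


# Hypothetical embeddings, invented for illustration only.
query_real_person = [0.12, 0.80, 0.35, 0.45]
indexed_synthetic_face = [0.10, 0.82, 0.33, 0.47]

score = cosine_similarity(query_real_person, indexed_synthetic_face)
print(f"similarity: {score:.3f}")
```

Nothing in the calculation records whether either vector came from a photograph or a generator. The score only measures how close the two vectors are, which is why a look-alike synthetic face can score highly against a real person.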

Synthetic profiles, catfishing, and scam patterns

Romance scammers, recruitment scammers, and investment fraud rings have shifted from stealing real photos of unrelated people to generating new faces on demand. AI-generated headshots are attractive to scammers because they cannot be reverse-searched back to a real owner who might complain. A victim who runs the scammer's photo through a face search may find only the scam-related pages where that synthetic face appears, which is itself a strong signal.

Patterns worth noting:

- A face that appears on multiple unrelated profiles using different names, ages, or countries.

- Profile photos with the polished, centered, slightly soft look characteristic of generator outputs.

- Matches that cluster only on dating apps, Telegram channels, or low-reputation sites with no older history, no tagged group photos, and no candid shots.

- Bios that read as machine-written: vague job titles, generic hobbies, and travel claims without specific places.

A real person tends to leave a longer trail: tagged photos from years ago, group shots at events, profile pictures on professional sites, and image variations across angles and lighting. Synthetic identities rarely produce that depth.
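The patterns above can be sketched as a simple checklist over a set of search hits for one face. This is an illustrative heuristic, not a detector: the `Hit` fields, the site-type labels, and the two-year history cutoff are all assumptions made for this example, and the values would normally be filled in by a human reviewer.

```python
from dataclasses import dataclass


@dataclass
class Hit:
    url: str
    site_type: str     # hypothetical labels: "dating", "messaging", "professional", ...
    claimed_name: str
    year: int          # year the page or photo was first seen
    is_candid: bool    # group shot, event photo, or other non-portrait image


# Assumed set of site types that rarely carry long-lived history.
LOW_HISTORY_SITE_TYPES = {"dating", "messaging", "low_reputation"}


def footprint_flags(hits, min_year_span=2):
    """Return the warning patterns that apply to the hits for a single face."""
    flags = []
    if not hits:
        return flags  # no matches proves nothing either way

    names = {h.claimed_name.strip().lower() for h in hits if h.claimed_name.strip()}
    if len(names) > 1:
        flags.append("same face under multiple names")

    if all(h.site_type in LOW_HISTORY_SITE_TYPES for h in hits):
        flags.append("matches cluster on dating, messaging, or low-reputation sites")

    years = [h.year for h in hits]
    if max(years) - min(years) < min_year_span:
        flags.append("no older history")

    if not any(h.is_candid for h in hits):
        flags.append("no candid or group photos")

    return flags


# Invented example data.
suspect_hits = [
    Hit("https://example.com/a", "dating", "Anna K.", 2024, False),
    Hit("https://example.com/b", "messaging", "Maria L.", 2024, False),
]
real_hits = [
    Hit("https://example.com/c", "professional", "Jo Smith", 2015, False),
    Hit("https://example.com/d", "social", "Jo Smith", 2023, True),
]

flags_suspect = footprint_flags(suspect_hits)
flags_real = footprint_flags(real_hits)
print(flags_suspect)
print(flags_real)
```

A flagged result is a reason to look more closely, not a verdict. A private real person with a thin online footprint can trip the same flags.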

Detecting AI-generated faces during a search

No detector is perfect, but several signals help when reviewing face-search results:

- Look at the eyes and teeth at full resolution. Generators still produce inconsistencies there, such as irregular pupils or warped teeth.

- Check accessories and backgrounds. Earrings, collars, and text in the background often warp.

- Search for the same face across more results. A real person usually appears in candid, multi-angle, multi-year photos. A generated face usually appears as a single hero shot or a small set of similar portraits.

- Cross-reference any claimed identity. If the matched pages give a name, search that name independently and see whether the same face shows up in older, verifiable contexts.

Face-recognition similarity scores treat synthetic faces the same as real ones, so a high match score against an AI image is not a guarantee that the underlying person is real. Treat the score as a question, not an answer.
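One way to keep the score in its place is to record it next to the corroboration checks rather than on its own. The sketch below is a hypothetical review helper: the `0.8` threshold, the label strings, and the rule that two checks are needed are assumptions chosen for illustration, not values from any face-search tool.

```python
def review_status(
    similarity,
    name_verified_independently,
    has_multi_year_photos,
    has_candid_photos,
    score_threshold=0.8,   # assumed cutoff, tune per tool
    min_checks=2,          # assumed number of checks needed
):
    """Combine a similarity score with manual corroboration checks."""
    checks_passed = sum(
        [name_verified_independently, has_multi_year_photos, has_candid_photos]
    )
    if similarity < score_threshold:
        return "weak lead"
    if checks_passed >= min_checks:
        return "corroborated lead"
    # A high score with little corroboration stays a question.
    return "unverified lead"


print(review_status(0.95, False, False, False))
print(review_status(0.95, True, True, False))
```

Under these assumptions a high score with no supporting context is labeled an unverified lead, and even the strongest label is still a lead rather than a confirmed identity.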

What AI-generated content does not prove

A face-search hit on AI-generated material does not confirm that anyone in particular is behind the account. It only confirms where the synthetic image was published. Conversely, the absence of matches does not mean an image is generated. Many real people have minimal online footprints, and many real photos are simply not indexed.

The useful posture is skeptical but specific: AI-generated content increases the chance that a profile is fake, that a photo has no real owner, and that a confident similarity score is pointing at fabricated source material. Confirming a real identity still requires corroborating evidence outside the face match itself, including consistent history, named references, and contextual photos that a generator would not have produced.

FAQ

What is “AI-Generated Content” in the context of face recognition search engines?

In face recognition search engines, “AI-Generated Content” usually means images (or parts of images) created or heavily altered by AI, such as synthetic faces, face swaps, or AI-enhanced portraits. This matters because a synthetic or edited face can look realistic but may not correspond to a real person or a consistent online identity trail.

How can AI-generated faces affect face recognition search results?

AI-generated faces can trigger misleading matches: the search may return look-alike results (near matches) rather than the same individual, or it may link to unrelated pages that reused similar-looking synthetic imagery. AI edits (beauty filters, upscaling, reshaping) can also change key facial features enough to reduce match quality or shift results toward the wrong person.

What are common signs that an image might be AI-generated or AI-altered when reviewing face-search results?

Common red flags include unnatural skin texture, inconsistent lighting or shadows, asymmetrical earrings/glasses, warped teeth, strange hairlines, background artifacts near the face, and “too-perfect” portrait aesthetics. Also watch for inconsistent facial details across different images that supposedly show the same person (e.g., ear shape, spacing of moles, or eyebrow structure changing unusually).

How should I use face recognition search results when AI-generated content might be involved?

Treat results as leads, not proof. Cross-check multiple independent sources, look for consistent context (same name/username, timeline, location, and social graph), and prefer original uploads over reposts or compilations. If the best “matches” only appear on spammy repost sites or the images look AI-stylized, assume a higher risk of false association and avoid making identity claims based on the face match alone.

Does FaceCheck.ID help when results may include AI-generated or AI-edited faces, and what should I do with its output?

FaceCheck.ID can add value as a face-search tool for finding where a similar face appears across the web, but AI-generated or heavily edited images can still produce convincing wrong-person or near-match results. Use FaceCheck.ID output to collect candidate pages, then validate by checking page credibility, confirming consistent biographical/context clues, and comparing multiple photos from the same source before concluding two results show the same real person.