Ethical Concerns in Face Search

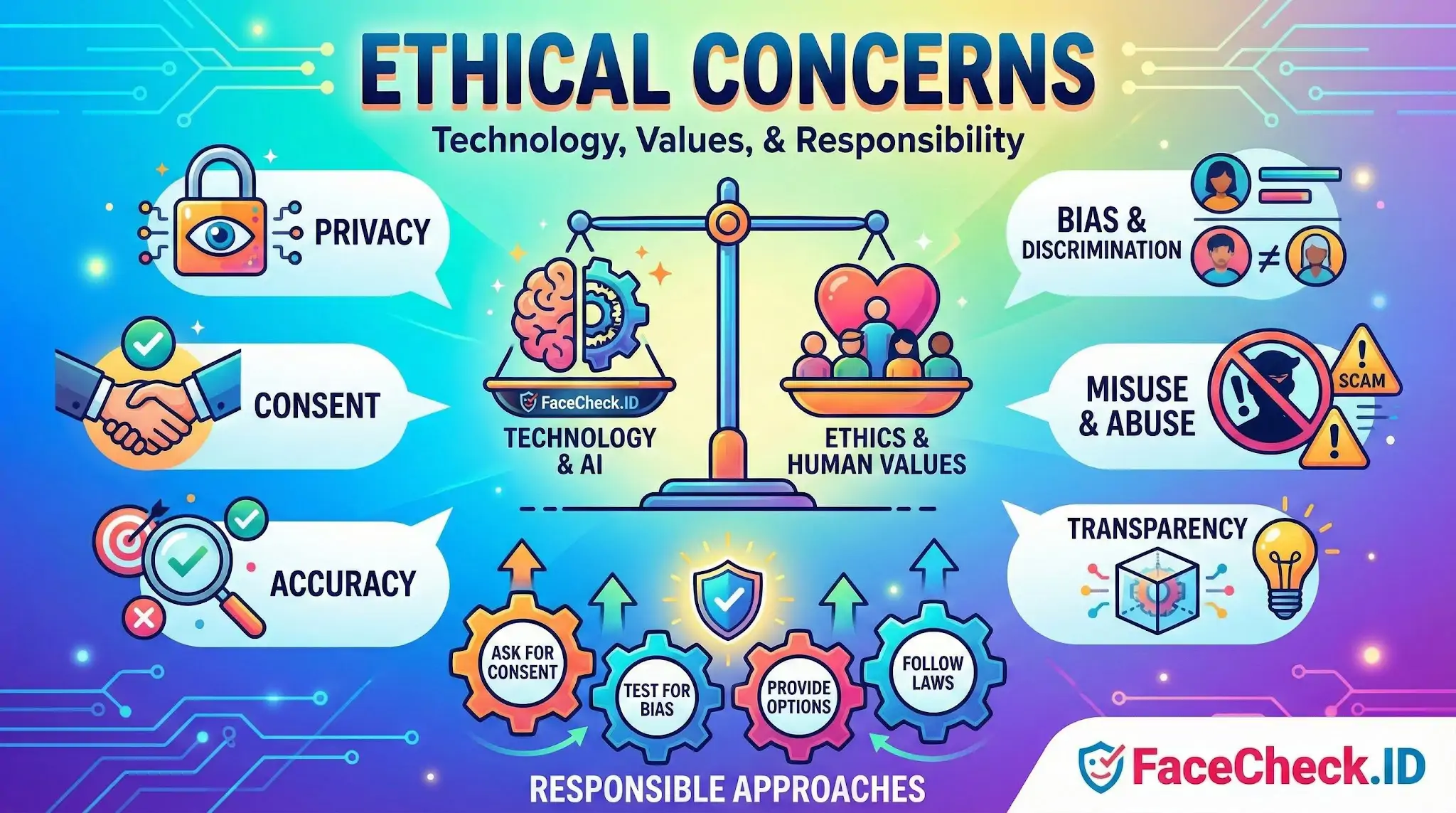

Face search sits in one of the most contested corners of consumer technology. Any tool that can take a single photo and return links to a person's social profiles, news mentions, or old forum posts raises real questions about consent, accuracy, and how the results get used once they leave the screen.

What ethical concerns look like in face search

The ethics of reverse face search are not abstract. They show up in specific decisions: whose photos get indexed, how confident a match needs to be before it is shown, what kinds of sites appear in results, and what a user is allowed to do with the information afterward.

The recurring pressure points include:

- Consent at indexing time. Most people whose faces appear in public photos never agreed to be searchable by face. The image was public, but the biometric link between face and identity is new.

- Match accuracy and false positives. A confident-looking match can still be the wrong person. Lookalikes, siblings, and people who share facial structure regularly trip up even strong models.

- Demographic performance gaps. Recognition systems have historically performed unevenly across skin tones, ages, and genders. A result that is reliable for one group may be noisier for another.

- Downstream misuse. Even an accurate match can cause harm if used for stalking, harassment, doxxing an anonymous account, or outing someone whose offline and online identities are deliberately separate.

- Context collapse. Pulling a face from a dating profile and matching it to a LinkedIn page, a mugshot site, and an old forum post compresses contexts the person never intended to merge.
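The false-positive point in the list above can be made concrete with a little arithmetic. The sketch below is illustrative only: the false match rate and index size are hypothetical numbers, not measurements of any real service, and the second function assumes each comparison is independent, which real systems only approximate.

```python
def expected_false_matches(false_match_rate: float, index_size: int) -> float:
    """Expected number of wrong-person hits when one query photo is
    compared against every face in an index."""
    return false_match_rate * index_size


def chance_of_any_false_match(false_match_rate: float, index_size: int) -> float:
    """Probability that at least one wrong-person hit appears,
    assuming each comparison is independent (a simplification)."""
    return 1.0 - (1.0 - false_match_rate) ** index_size


# Hypothetical numbers: a 1-in-a-million false match rate per comparison,
# searched against 100 million indexed faces.
fmr = 1e-6
faces = 100_000_000

print(expected_false_matches(fmr, faces))
print(chance_of_any_false_match(fmr, faces))
```

Under these assumed numbers, a single search would be expected to surface on the order of 100 wrong-person candidates, which is why a confident-looking result still needs checking.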

Where the line between legitimate use and harm gets blurry

Some uses of face search are clearly defensible: verifying that the person you have been talking to on a dating app is who they claim to be; checking whether a stranger asking for money is using a stolen photo; looking up images of yourself to see what is publicly indexed under your face; or, for investigators and journalists, checking the identity of someone implicated in fraud or abuse.

Other uses are clearly not: identifying random strangers in public; building a dossier on a romantic interest who has not consented to that level of scrutiny; tracking down a sex worker, an activist, or a domestic abuse survivor who deliberately keeps online accounts separate; or using a search result as proof of guilt rather than a starting point for further checking.

A lot of real-world use sits in between, and the ethics depend less on the tool than on the searcher's intent and what they do with the result. A face match is a pointer. Treating it as a verdict is where harm tends to start.

What a face match does not prove

A high-confidence match means a face-recognition model has scored two images as likely showing the same person. It does not prove:

- That the person in the linked profile uploaded the photo themselves

- That the account is currently active or controlled by that person

- That a name attached to one image is the person's real legal name

- That the person in the photo did anything described on the page where the photo appears

- That two profiles with matching faces belong to the same individual rather than reused or stolen images

Photos get scraped, reposted, repurposed for catfishing, and pasted into scam templates. A reverse face search surfaces visual similarity. Whether that similarity reflects the same person, a stolen image, a relative, or a coincidental lookalike still requires human judgment, corroborating details, and sometimes direct contact with the person involved.
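One way to keep that distinction explicit in a workflow is to record a match as a lead and only upgrade it when independent evidence accumulates. The following is a minimal sketch, not any tool's actual behavior: the class, the signal names, and the two-signal threshold are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class FaceMatchLead:
    """A face match plus whatever independent evidence has been gathered."""
    url: str
    similarity: float  # score reported by the search tool (hypothetical scale)
    corroboration: set = field(default_factory=set)


# Hypothetical signal names. Each one is independent of facial similarity.
INDEPENDENT_SIGNALS = {
    "original_source_found",
    "timestamps_consistent",
    "context_consistent",
    "confirmed_by_person",
}


def assess(lead: FaceMatchLead, required_signals: int = 2) -> str:
    """Classify a lead. Similarity alone never upgrades the status."""
    recognized = lead.corroboration & INDEPENDENT_SIGNALS
    if len(recognized) >= required_signals:
        return "corroborated"
    return "unverified lead"


# A very high similarity score with no other evidence stays a lead.
print(assess(FaceMatchLead("https://example.com/profile", 0.99)))
```

The design choice here is that the similarity score is stored but never consulted by `assess`, so no score, however high, can stand in for corroboration.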

Reducing ethical risk in practice

Responsible use of face search means treating results as leads rather than conclusions, refusing to publish someone's identity based on a match alone, respecting takedown and opt-out mechanisms, and recognizing that the same tool that protects someone from a romance scam can be turned against a stranger who simply did not want to be found. The technology itself is neutral on intent. The ethics live in how the search is conducted and what happens after the results load.

FAQ

What are the main ethical concerns with face recognition search engines?

Key ethical concerns include privacy intrusion (people being searchable without consent), increased risk of stalking and harassment, chilling effects on free expression and anonymity, secondary use of images beyond their original context, and the potential normalization of mass identification in public life.

How can face recognition search engines enable harassment, doxxing, or stalking?

By turning a face photo into a lookup key, these tools can help an abuser connect a person’s image to usernames, profiles, reposts, locations, or other identifying pages. Even when results are imperfect, they can provide leads that an attacker can combine with other data to target someone.

What ethical issues come from bias and unequal accuracy across different demographic groups?

If accuracy differs by demographic group, the harms are not evenly distributed: some people may be more likely to be falsely linked to another person or content. Ethically, this raises fairness concerns and increases the risk of discrimination when results are used to make decisions or accusations.
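Whether accuracy differs by group is something that can be measured when labeled evaluation data exists. The sketch below assumes a hypothetical list of trials, each recording a group label, whether the two images truly show the same person, and whether the system reported a match. The function name and data layout are illustrative, not part of any real benchmark.

```python
from collections import defaultdict


def false_match_rate_by_group(trials):
    """trials: iterable of (group, same_person, predicted_match) tuples.

    Returns {group: share of different-person pairs wrongly reported as matches}.
    """
    wrong = defaultdict(int)
    total = defaultdict(int)
    for group, same_person, predicted_match in trials:
        if same_person:
            continue  # only different-person pairs can produce a false match
        total[group] += 1
        if predicted_match:
            wrong[group] += 1
    return {group: wrong[group] / total[group] for group in total}


# Made-up evaluation data, for illustration only.
sample = [
    ("group_a", False, False),
    ("group_a", False, True),
    ("group_b", False, False),
    ("group_b", False, False),
    ("group_a", True, True),
]
print(false_match_rate_by_group(sample))
```

A gap between groups in this kind of output is the uneven distribution of false links that the answer above describes.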

What consent and “reasonable expectation of privacy” issues arise when searching faces on the open web?

Even if an image is publicly reachable, the person pictured may not have consented to biometric-style indexing or face-based discovery. Ethical concerns include whether the subject understood the future reach of the image, whether minors or vulnerable people are involved, and whether face search changes the practical privacy people expected when a photo was posted.

If I use a tool like FaceCheck.ID, what ethical safeguards should I apply before acting on results?

Treat results as leads, not proof. Verify with multiple independent signals (context, timestamps, original sources) and avoid public accusations. Upload only as much of the image as you need, cropping out bystanders and minors where possible. Don’t use results for harassment or discrimination, and follow removal or opt-out processes when you discover misuse or mislinking. If the stakes are high (employment, safety, legal claims), do not rely on a face-search result alone.
