Image Authenticity

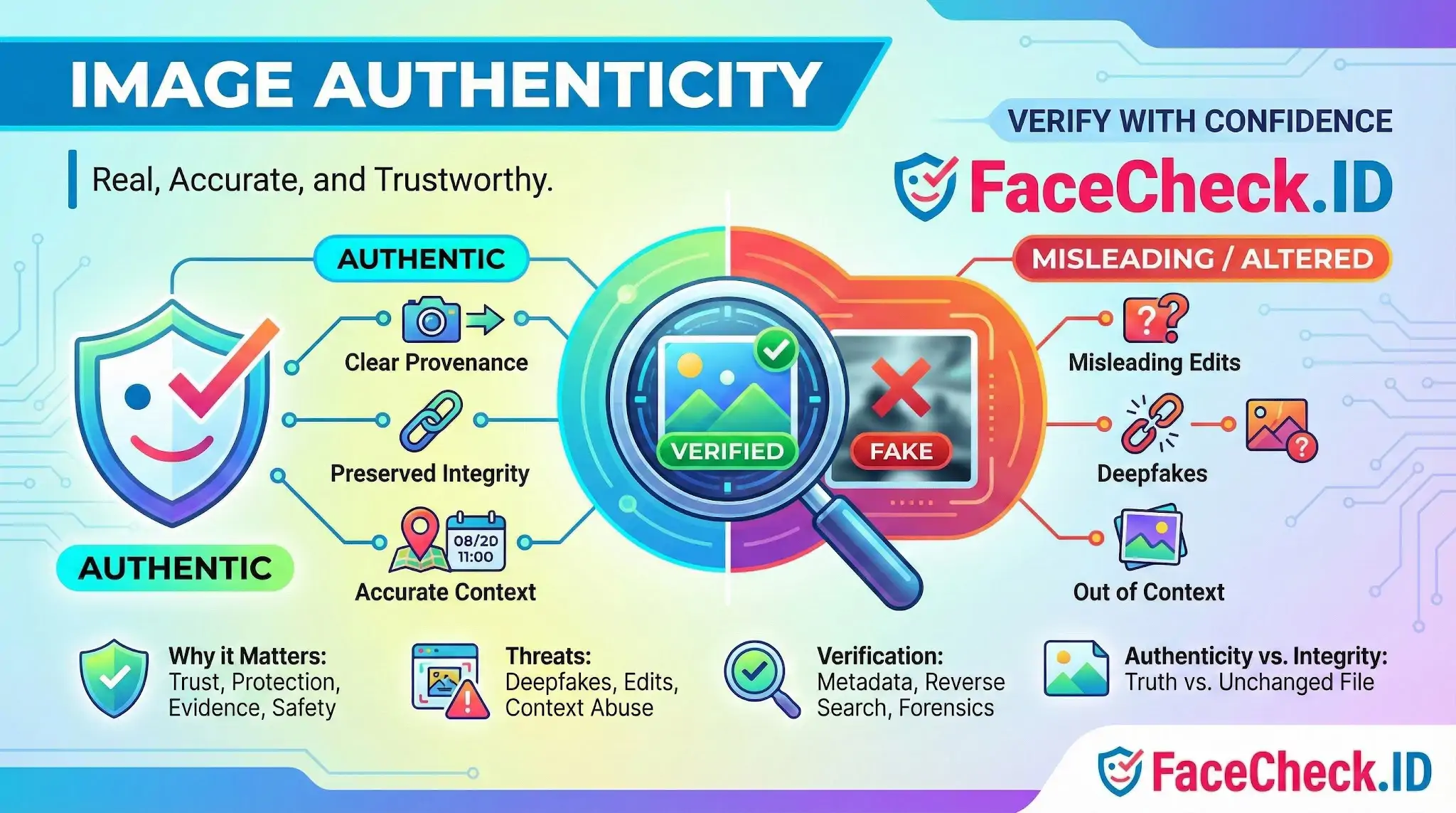

When a face-search result returns a photo, the next question is almost always whether that photo can be trusted. Image authenticity is the layer of judgment that sits between a match and a conclusion, asking whether the picture shows a real person captured in real conditions or something staged, edited, or generated.

For anyone using FaceCheck.ID to investigate a dating profile, vet a contact, or trace where a face appears online, authenticity decides how much weight a result deserves. A confident match against a manipulated source image can lead an investigation in the wrong direction.

Why authenticity changes the meaning of a face match

Face recognition compares facial geometry. It does not, on its own, know whether the photo it found is a candid snapshot, a heavily retouched headshot, a face swap, or a fully synthetic image generated by a diffusion model. Two patterns matter most in practice:

- A match against a real photo of the right person suggests the face actually appeared on that page.

- A match against a manipulated or generated image suggests only that someone produced a picture resembling that face, which is a very different claim.

Romance scammers and fake recruiters routinely combine real face images with fabricated context. A LinkedIn-style headshot may be authentic but reused by an impersonator. A profile picture may be a GAN output that happens to look like a real person. A news photo may be genuine but captioned to imply something it does not show. Each scenario produces a match, but each carries a different meaning.

Signals that suggest an image is genuine

When reviewing a hit from a face search, several signals raise or lower confidence in the source image:

- Multiple independent appearances. The same face showing up across years, platforms, and unrelated contexts is harder to fake than a single isolated post.

- Consistent capture conditions. Real photos usually show natural variation in lighting, angle, background, and expression. A subject who appears only in flawless front-facing portraits with identical skin texture is a possible sign of AI generation.

- Matching context. Captions, comments, tagged friends, and event details that line up with other public information add weight.

- Intact metadata. EXIF data with plausible camera, timestamp, and lens fields is not proof, but stripped or rewritten metadata on a key image is worth noting.

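- Visible imperfection. Stray hairs, blemishes, and minor focus issues are harder to fabricate convincingly than a clean studio look. Asymmetry deserves a closer look rather than automatic credit: mismatched earrings, for example, are a commonly reported artifact of GAN-generated faces.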
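The metadata signal in the list above can be partly checked mechanically. The following is a minimal Python sketch, assuming the EXIF fields have already been extracted into a plain dictionary by whatever reader you use. The function name `exif_notes` and the specific checks are hypothetical illustrations, not part of FaceCheck.ID or any library. The tag names (`Make`, `Model`, `DateTimeOriginal`, `Software`) and the timestamp format come from the EXIF standard.

```python
from datetime import datetime

# Hypothetical helper: `exif` is a dict of EXIF tag name -> value that some
# other tool has already extracted. The checks are illustrative only.
def exif_notes(exif, now=None):
    """Return a list of observations worth noting about EXIF metadata."""
    now = now or datetime.now()
    if not exif:
        return ["no EXIF data present"]

    notes = []
    # Camera make and model are normally written by the capturing device.
    for tag in ("Make", "Model"):
        if not exif.get(tag):
            notes.append(f"missing {tag}")

    # EXIF stores capture time as "YYYY:MM:DD HH:MM:SS".
    raw = exif.get("DateTimeOriginal")
    if raw is None:
        notes.append("missing DateTimeOriginal")
    else:
        try:
            taken = datetime.strptime(raw, "%Y:%m:%d %H:%M:%S")
            if taken > now:
                notes.append("capture time is in the future")
        except ValueError:
            notes.append("unparseable DateTimeOriginal")

    # A Software tag often records an editor or export step; it is a note
    # for the reviewer, not evidence of manipulation.
    if exif.get("Software"):
        notes.append(f"Software tag present: {exif['Software']}")
    return notes
```

An empty list means nothing stood out, not that the image is genuine. Metadata can be edited, so the output is best read as a prompt for closer review.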

Common authenticity failures in face-search results

- Reused profile photos. A real person's headshot lifted from a company bio and pasted onto a fake dating profile. The face is authentic, the identity claim is not.

- AI-generated portraits. Synthetic faces from tools such as StyleGAN or diffusion models, which typically exist as a single image with no history elsewhere on the web.

- Deepfaked or face-swapped media. A real face mapped onto another body, common in harassment cases and fabricated explicit content.

- Out-of-context journalism photos. A genuine news image attached to a false claim about the subject's role or location.

- Edited group shots. Someone removed from or inserted into a scene to imply a relationship that did not exist.

Reverse image search is the practical companion to face search here. If a photo appears on a stock site, an older blog, or a "this person does not exist" gallery, the match's value drops sharply.
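As a rough illustration of that triage step, the sketch below labels a result URL by the kind of site hosting it. It is a minimal example under stated assumptions: the domain lists are short, hand-picked placeholders that a real review would replace with its own, and `classify_source` is a hypothetical helper rather than a feature of any search tool.

```python
from urllib.parse import urlparse

# Illustrative, hand-picked lists (assumptions); maintain your own in practice.
STOCK_DOMAINS = {"shutterstock.com", "gettyimages.com", "istockphoto.com"}
SYNTHETIC_GALLERY_DOMAINS = {"thispersondoesnotexist.com"}

def _host_matches(host, domains):
    """True if host equals a listed domain or is a subdomain of one."""
    return any(host == d or host.endswith("." + d) for d in domains)

def classify_source(url):
    """Label a result URL as 'synthetic-gallery', 'stock', or 'unclassified'."""
    host = (urlparse(url).hostname or "").lower()
    if _host_matches(host, SYNTHETIC_GALLERY_DOMAINS):
        return "synthetic-gallery"
    if _host_matches(host, STOCK_DOMAINS):
        return "stock"
    return "unclassified"
```

A label of `unclassified` says nothing about authenticity. It only means the host is not on either list.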

What authenticity checks cannot settle

Authenticity verification has real limits, and overconfidence is its own risk. A clean EXIF record does not prove the scene was real, only that the file carries camera-style metadata, which can itself be edited. A C2PA signature attests to a file's recorded provenance and edit history, not the truth of the moment captured. Forensic tools that flag compression artifacts can miss modern generative images that were never compressed in suspicious ways.

A face-search match plus an authentic-looking source still does not prove identity, intent, or wrongdoing. It indicates that a face resembling the query appeared in a specific place at a specific time. Linking that appearance to a real person, a real account, or a real action requires corroboration from other sources, direct contact, or, in serious cases, professional investigation. Authenticity raises or lowers confidence. It rarely closes the question on its own.

FAQ

What does “Image Authenticity” mean in the context of face recognition search engines?

Image Authenticity refers to how trustworthy a face photo (the query image) or a matched result image is: whether it is a real, unmanipulated depiction of a real person at a real time and place, or whether it may be edited, composited, face-swapped, AI-generated, mislabeled, or taken out of context. In face recognition search, authenticity matters because the system can still match faces well even when the surrounding story, caption, or identity claim attached to the image is false.

Why does Image Authenticity matter when interpreting face-search matches?

Because a convincing face match does not guarantee the image’s context is true. An authentic-looking match can appear on repost sites, scam pages, or mislabeled profiles, and an inauthentic image (edited or AI-generated) can create a misleading “trail” across the web. Treat matches as leads: confirm authenticity and context before concluding the person did what a page claims or is the same person a profile claims.

What are practical checks to assess Image Authenticity after getting face recognition search results?

Common checks include:

- Open multiple results and compare dates, captions, and page purpose to see whether they agree.

- Look for the earliest credible source (original post, official site, or the highest-quality publisher) rather than repost aggregators.

- Compare multiple photos of the same claimed person for consistent features across angles and lighting (ears, moles, hairline, teeth).

- Inspect for manipulation signs (warping near edges, inconsistent shadows, blurry facial boundaries, odd reflections).

- Run both face-search and traditional reverse image search to see whether the exact image (or a crop) is circulating as a template or meme.
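The first two checks above, comparing dates and finding the earliest source, can be sketched in a few lines of Python. This is a minimal example under stated assumptions: each hit is represented as a dictionary with a `url` and a `first_seen` date that you have recorded yourself. Those field names and the `summarize_hits` helper are hypothetical and do not reflect any tool's actual output format.

```python
from datetime import date
from urllib.parse import urlparse

def summarize_hits(hits):
    """Summarize manually recorded hits.

    hits: list of {"url": str, "first_seen": datetime.date} (assumed shape).
    Returns None for an empty list.
    """
    if not hits:
        return None
    # Oldest appearance first.
    ordered = sorted(hits, key=lambda h: h["first_seen"])
    # Distinct hostnames are a rough proxy for independent appearances;
    # reposts of one original can still span many hosts.
    hosts = {urlparse(h["url"]).hostname for h in hits}
    span = ordered[-1]["first_seen"] - ordered[0]["first_seen"]
    return {
        "earliest_url": ordered[0]["url"],
        "distinct_hosts": len(hosts),
        "span_days": span.days,
    }

# Example input with made-up URLs and dates.
example = [
    {"url": "https://blog.example.org/post", "first_seen": date(2020, 1, 11)},
    {"url": "https://news.example.com/story", "first_seen": date(2020, 1, 1)},
]
summary = summarize_hits(example)
```

The earliest recorded hit is a starting point for finding the original source, not proof that it is the original. Whether that source is credible still takes human judgment.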

How do AI-generated or heavily edited images affect Image Authenticity and face-search reliability?

AI-generated or heavily edited faces can produce false confidence: the image may look like a real person, yet no real person exists (synthetic face), or the face may be a swap using a real person’s likeness. These scenarios can yield confusing match sets in which some results relate to the donor face, some to the target face, and some to look-alikes. Corroborate with independent evidence (consistent usernames, original sources, additional photos, and non-image signals) rather than relying on a single matched picture.

How can FaceCheck.ID add value when you’re evaluating Image Authenticity in face-search results?

Tools like FaceCheck.ID can help you quickly surface where a face appears across many sites, which is useful for authenticity work: you can look for the earliest/most credible occurrence, spot repeated reuse across unrelated contexts (a common scam indicator), and compare multiple independent instances of the face. However, the same caution applies: even strong matches should be treated as investigative leads, and you should verify the original source and context before drawing conclusions about identity or real-world events.
