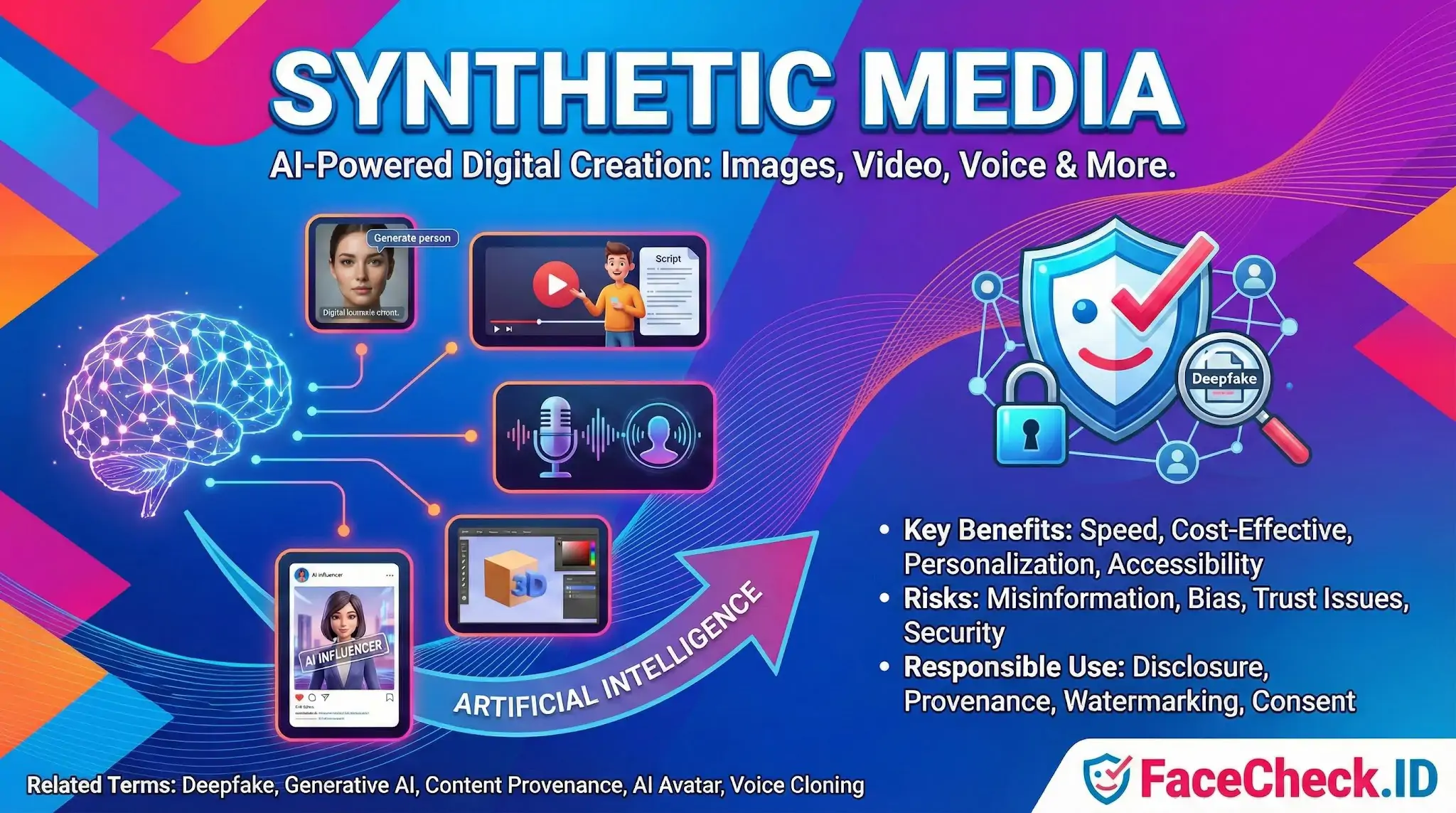

Synthetic Media

Synthetic media is any image, video, or audio that has been generated or altered by AI rather than captured from a real moment. For face search, it changes the basic question behind every match: was this photo taken of a real person at a real time, or was it produced by a model trained to look that way?

Why synthetic media complicates face-search results

A reverse face search assumes that two images showing the same face point to the same human. Synthetic media breaks that assumption in more than one way. A diffusion model can produce a face that has never existed yet looks consistent enough across multiple images to behave like a real identity online. A GAN-generated profile picture on a dating site or LinkedIn page may match other AI outputs from the same model family because of shared statistical artifacts in skin texture, eye spacing, or background blur.

Face-swapped images are the opposite problem. The face is real, but the body, location, and context belong to someone else. A search may correctly return matches for the face while the surrounding pages describe a person the subject never met and events that never happened.

Common forms that show up in face-search results include:

- AI-generated profile photos used on fake dating, escort, or recruiter accounts

- Face swaps placed onto adult content, harassment posts, or fabricated news

- Voice-cloned video where the face footage is real but the speech is synthetic

- Upscaled or retouched photos where AI fills in detail that was never captured

- Virtual influencer content where the same synthetic face appears across hundreds of indexed pages

Signals that suggest a match may be synthetic

No detector is reliable on a single image, but certain patterns should raise suspicion when reviewing face-search hits. Repeated facial geometry across accounts that otherwise share nothing in common often points to a generator being reused. A profile that surfaces only one or two photos, all with similar lighting and pose, is more likely to be synthetic than one with years of varied images. Mismatched earrings, irregular teeth, melted jewelry, and asymmetric glasses are still common in current diffusion outputs, though they are getting rarer.
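The repeated-geometry signal can be sketched as a pairwise comparison of face embeddings. This is a minimal illustration, assuming each search hit already has an embedding vector from some face-recognition model. The account labels, the toy vectors, and the 0.95 threshold are all hypothetical and not taken from any specific tool.

```python
import math
from itertools import combinations


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def near_duplicate_pairs(embeddings, threshold=0.95):
    """Return account pairs whose face embeddings are nearly identical.

    `embeddings` maps an account label to its embedding vector.
    The threshold is an illustrative assumption, not a calibrated value.
    """
    pairs = []
    for (name_a, vec_a), (name_b, vec_b) in combinations(sorted(embeddings.items()), 2):
        score = cosine_similarity(vec_a, vec_b)
        if score >= threshold:
            pairs.append((name_a, name_b, round(score, 3)))
    return pairs


# Toy 3-dimensional vectors; real embeddings have hundreds of dimensions.
hits = {
    "dating_profile": [0.90, 0.10, 0.40],
    "recruiter_profile": [0.89, 0.11, 0.41],
    "unrelated_person": [0.10, 0.95, 0.20],
}
suspicious = near_duplicate_pairs(hits)
```

A flagged pair is only a lead for manual review. Near-identical embeddings on unrelated accounts can also mean that one real photo was stolen and reused, so the surrounding account details still have to be checked.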

In face-swap cases, the giveaway tends to live at the boundary of the face. Look for soft seams along the jaw, color shifts between the face and neck, hair that does not behave consistently with head movement in a video, and lighting that does not match the rest of the scene. When a face-search result links to a video, scrub through frames rather than trusting a single thumbnail.
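Scrubbing through a video can be made systematic by choosing evenly spaced frames to inspect instead of relying on whichever frame the thumbnail shows. The helper below is a small sketch of that idea. The function name and the default of 12 samples are assumptions, and actually decoding the frames would need a video library, which is not shown here.

```python
def frame_indices_to_review(total_frames, samples=12):
    """Pick evenly spaced frame indices, including the first and last frame."""
    if total_frames <= 0:
        return []
    if samples <= 1:
        return [0]
    if samples >= total_frames:
        # Short clip: review every frame.
        return list(range(total_frames))
    step = (total_frames - 1) / (samples - 1)
    return [round(i * step) for i in range(samples)]


# Example: a 30-second clip at 30 frames per second has 900 frames.
indices = frame_indices_to_review(900, samples=12)
```

Checking the jawline, neck color, and hair on each sampled frame gives a seam or lighting mismatch several chances to show up, since blending errors often appear only on some frames.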

How synthetic media changes scam and impersonation investigations

Romance scams, sextortion, and fake recruiter schemes increasingly rely on synthetic faces because a stolen real photo is easy to expose with a reverse face search. A generated face will often return a FaceCheck.ID result with few or no matches, simply because the face has no history on the indexed web. That absence is itself a signal. A real adult with the job title, age, and online presence the profile claims should usually leave some trace.

For face swaps used in harassment or non-consensual intimate imagery, face search can help a victim find where their real face has been pasted into fabricated content, even when the underlying scene is fake. The match is genuine even though the depicted act is not, which matters for takedown requests and reports to platforms.

What face search cannot settle on its own

A face-search result cannot tell you whether an image is synthetic. It can only tell you where similar faces appear on the indexed web. Confirming that a photo is AI-generated requires forensic tools, provenance metadata such as C2PA signatures, or context from the account itself. A clean, high-confidence match does not rule out a face swap, and a thin result set does not prove a face is fake.
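As a rough first look at provenance metadata, the sketch below checks whether a JPEG file appears to carry a C2PA manifest at all. It relies on two details of how C2PA data is embedded: JPEG files carry it in APP11 segments, and the manifest store uses the label `c2pa`. The function names are hypothetical, and this is only a presence hint. It does not validate any signature, so a positive result still needs a proper C2PA validator, and a negative result proves nothing because metadata is often stripped when images are re-uploaded.

```python
import struct


def jpeg_app11_payloads(data):
    """Yield the payloads of APP11 (0xFFEB) segments found before the image scan."""
    if data[:2] != b"\xff\xd8":  # not a JPEG start-of-image marker
        return
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            break
        marker = data[pos + 1]
        if marker in (0xDA, 0xD9):  # start of scan or end of image
            break
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        if length < 2:
            break
        if marker == 0xEB:
            yield data[pos + 4:pos + 2 + length]
        pos += 2 + length


def might_carry_c2pa(data):
    """Crude hint only: True if any APP11 payload contains the 'c2pa' label."""
    return any(b"c2pa" in payload for payload in jpeg_app11_payloads(data))


# Synthetic byte strings for illustration; these are not real images.
payload = b"JP\x00\x00jumb....c2pa...."
with_manifest = b"\xff\xd8\xff\xeb" + struct.pack(">H", len(payload) + 2) + payload + b"\xff\xd9"
without_manifest = b"\xff\xd8\xff\xd9"
```

In practice the bytes would come from reading the file in binary mode, for example `open(path, "rb").read()`.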

Human judgment still carries the investigation. Face search narrows the field and surfaces leads. Deciding whether a face, a profile, or a video is real means weighing the matches against everything else known about the account, the platform, and the claims being made.

FAQ

What does “Synthetic Media” mean in the context of face recognition search engines?

Synthetic media refers to images, videos, or audio that are generated or significantly altered by AI (for example, GAN-generated faces, face swaps, or heavily AI-edited portraits). In face recognition search engines, synthetic media matters because it can create convincing “people” who don’t exist, or it can alter a real person’s appearance enough to change which matches appear.

How can synthetic (AI-generated) faces affect face recognition search results?

Synthetic faces can lead to confusing outcomes: (1) an AI-generated face may weakly resemble several real people, producing many low-similarity look-alike hits; (2) an edited or face-swapped image of a real person may match the source person rather than the claimed identity; and (3) synthetic faces reused across sites can create the illusion of a consistent online footprint for a fake persona.

What are common signs that a face-search result might involve synthetic media rather than a real photo trail?

Common clues include: many matches with low-to-medium similarity but no strong “anchor” source; the same face appearing across unrelated accounts with different names/biographies; images that look overly airbrushed or uniform across lighting and skin texture; and profile photos that vary dramatically in style while the face stays oddly consistent. These are warning signs to verify carefully rather than conclude it’s the same person.
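The score pattern described above can be turned into a simple triage label. The sketch below assumes similarity scores on a 0 to 100 scale. The thresholds of 90 and 60, the minimum of five weak hits, and the label names are all hypothetical and would need tuning against whatever tool produced the scores.

```python
def describe_result_pattern(scores, strong=90, weak=60, many=5):
    """Label a result set by its similarity-score pattern.

    All thresholds are illustrative assumptions, not calibrated values.
    """
    strong_hits = [s for s in scores if s >= strong]
    weak_hits = [s for s in scores if weak <= s < strong]
    if strong_hits:
        return "has_anchor"           # at least one strong match to verify first
    if len(weak_hits) >= many:
        return "many_weak_no_anchor"  # look-alike pattern worth extra scrutiny
    if weak_hits:
        return "thin"                 # a few weak leads only
    return "no_matches"               # face has little or no indexed history


label = describe_result_pattern([72, 68, 65, 61, 70, 66])
```

The label only prioritizes what to verify next. None of the outcomes establishes that a face is synthetic or real.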

What should I do if I suspect an uploaded image is a deepfake or AI-edited portrait when using a face recognition search engine?

Use multiple inputs and validation steps: try a different frame/photo of the same person (preferably a natural, unfiltered image), crop tightly to the face, and compare top results for consistent non-face cues (tattoos, moles, ears, hairline, context, timestamps). Treat results as leads only, and avoid making accusations based solely on face similarity when synthetic manipulation is possible.

How does synthetic media change the way I should interpret results from tools like FaceCheck.ID?

If synthetic media is involved, treat FaceCheck.ID (or any similar tool) as a way to discover potential reuse and related appearances, not as proof of identity. Prioritize results that link to credible, original sources (not reposts), cross-check multiple photos of the same person, and assume higher false-match risk when images look AI-generated, face-swapped, or heavily filtered.
