Perceptual Hash Explained: Find Near-Duplicates Fast

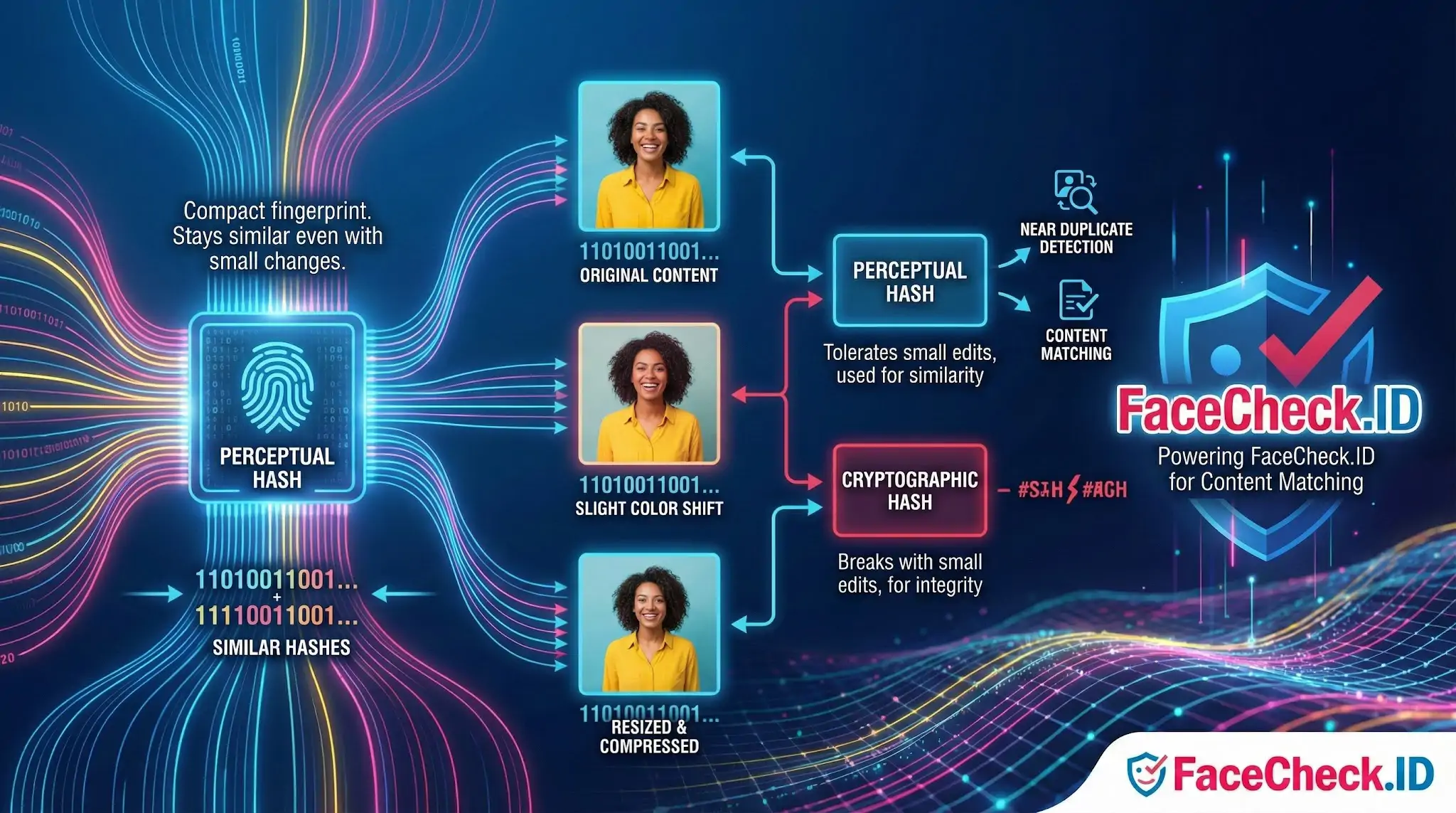

A perceptual hash is a compact fingerprint of an image, audio clip, or video frame that is designed to stay similar when the content looks or sounds similar. A cryptographic hash (such as MD5 or SHA-256) changes completely when even one bit of the input changes; a perceptual hash is instead built to tolerate small changes such as resizing, compression, minor cropping, slight color shifts, or added noise.

Perceptual hashing is widely used for near-duplicate detection, content matching, and visual similarity search.

How a perceptual hash works

A perceptual hash algorithm converts media into a simplified representation and then encodes it into a short bit string, often 64 bits.

Common steps for images include:

- Normalize the input

Resize to a fixed small size and often convert to grayscale to reduce irrelevant differences.

- Extract perceptual features

Measure patterns that reflect how humans perceive structure, such as overall brightness changes, edges, and low-frequency components.

- Create the hash bits

Turn those features into a binary signature, for example by comparing each value to an average or median.

- Compare hashes by distance

Two perceptual hashes are compared using Hamming distance, which counts how many bits differ. Fewer differing bits usually means more similar content.
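The four steps above can be sketched in plain Python. This is a minimal average-hash illustration, not a reference implementation: it assumes the image has already been decoded into a 2D list of grayscale values (0 to 255), the function names `shrink`, `average_hash`, and `hamming` are hypothetical, and the block-averaging downscale stands in for a proper resampling filter.

```python
def shrink(pixels, size=8):
    """Step 1 (normalize): downscale a 2D grayscale grid to size x size
    by averaging rectangular blocks of pixels."""
    h, w = len(pixels), len(pixels[0])
    out = []
    for r in range(size):
        r0 = r * h // size
        r1 = max((r + 1) * h // size, r0 + 1)  # at least one source row
        row = []
        for c in range(size):
            c0 = c * w // size
            c1 = max((c + 1) * w // size, c0 + 1)  # at least one source column
            block = [pixels[y][x] for y in range(r0, r1) for x in range(c0, c1)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def average_hash(pixels, size=8):
    """Steps 2-3: use the downscaled brightness values as features and
    emit one bit per value (1 if brighter than the mean)."""
    values = [v for row in shrink(pixels, size) for v in row]
    mean = sum(values) / len(values)
    bits = 0
    for v in values:
        bits = (bits << 1) | (1 if v > mean else 0)
    return bits  # a size*size-bit integer (64 bits by default)


def hamming(a, b):
    """Step 4: count the bit positions where two hashes differ."""
    return bin(a ^ b).count("1")
```

In this sketch, adding a constant brightness offset to an image (without clipping) leaves the hash unchanged, because every value and the mean shift together, while inverting the image flips every bit.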

Perceptual hash vs cryptographic hash

- Perceptual hash

Purpose: similarity matching

Small edits: produces a similar hash

Output: compared with a distance threshold

- Cryptographic hash

Purpose: integrity and security

Small edits: changes the hash completely

Output: compared for exact equality
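The cryptographic side of this comparison is easy to observe with Python's standard `hashlib`. The snippet below is only an illustration: the 64-byte buffer is a made-up stand-in for raw pixel data, and `bit_difference` is a hypothetical helper.

```python
import hashlib


def bit_difference(a: bytes, b: bytes) -> int:
    """Count differing bits between two equal-length byte strings."""
    return sum(bin(x ^ y).count("1") for x, y in zip(a, b))


original = bytes(range(64))                        # stand-in for raw pixel data
edited = bytes([original[0] ^ 1]) + original[1:]   # flip a single input bit

d1 = hashlib.sha256(original).digest()
d2 = hashlib.sha256(edited).digest()

print(d1 == d2)                 # False: the exact-equality check fails
print(bit_difference(d1, d2))   # typically close to half of the 256 digest bits
```

A perceptual hash of the same two inputs would be expected to differ in few or no bits, which is why it is compared against a distance threshold instead of for equality.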

Common types of perceptual hashing

- aHash (average hash)

Compares each pixel of the downscaled image to the image's average brightness. Very fast, with basic accuracy.

- dHash (difference hash)

Encodes gradients by comparing adjacent pixels. Good for resized and compressed images.

- pHash (perceptual hash using DCT)

Uses a frequency transform (DCT) and focuses on low-frequency components. Often more robust to compression and small edits.

- Wavelet hash

Uses wavelet transforms to capture multi-scale structure. Useful for more complex similarity tasks.
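To make the difference between dHash and a DCT-based hash concrete, here is a rough sketch of both. It assumes the input is already a resized grayscale grid (8 rows by 9 columns for `dhash`, a square grid such as 32 by 32 for `phash`). The function names, the unnormalized DCT, the "left brighter than right" bit convention, and the choice to threshold at the median of the non-DC coefficients are all choices of this sketch; real libraries differ in these details.

```python
import math
from statistics import median


def dhash(grid):
    """Difference hash: one bit per horizontally adjacent pixel pair.
    grid is 8 rows x 9 columns, giving 8 * 8 = 64 bits."""
    bits = 0
    for row in grid:
        for left, right in zip(row, row[1:]):
            bits = (bits << 1) | (1 if left > right else 0)
    return bits


def low_freq_dct(grid, keep=8):
    """Top-left keep x keep block of the unnormalized 2D DCT-II
    of a square grid, i.e. its lowest-frequency coefficients."""
    n = len(grid)
    cos = [[math.cos((2 * x + 1) * u * math.pi / (2 * n)) for x in range(n)]
           for u in range(keep)]
    coeffs = []
    for u in range(keep):          # vertical frequency
        row = []
        for v in range(keep):      # horizontal frequency
            row.append(sum(grid[y][x] * cos[u][y] * cos[v][x]
                           for y in range(n) for x in range(n)))
        coeffs.append(row)
    return coeffs


def phash(grid, keep=8):
    """DCT-based hash: one bit per low-frequency coefficient,
    set when the coefficient exceeds the median."""
    coeffs = [c for row in low_freq_dct(grid, keep) for c in row]
    # The DC term (index 0) only reflects overall brightness, so it is
    # left out of the median in this sketch.
    med = median(coeffs[1:])
    bits = 0
    for c in coeffs:
        bits = (bits << 1) | (1 if c > med else 0)
    return bits
```

Because `phash` thresholds against a statistic of the coefficients themselves, uniformly scaling the pixel values scales every coefficient and the median by the same factor, so the resulting bits do not change.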

Typical use cases

- Detecting duplicate and near-duplicate images in a media library

- Finding reposted content even when it has been resized or recompressed

- Copyright and brand protection workflows

- Deduplicating thumbnails and product photos in ecommerce catalogs

- Similarity-based clustering for organizing large datasets

- Video frame matching to detect repeated scenes or clips

Strengths and limitations

Strengths

- Works well when content has minor transformations

- Very compact representation, efficient to store and compare

- Fast matching at scale with bit operations and indexing techniques
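One indexing technique that fits the last point is banded lookup based on the pigeonhole principle: if a 64-bit hash is split into 4 bands of 16 bits and two hashes differ in at most 3 bits, at least one band must be identical in both. The sketch below assumes 64-bit integer hashes; `BandIndex` and its methods are hypothetical names, and the band layout is an illustrative choice.

```python
from collections import defaultdict

BANDS = 4
BAND_BITS = 16
MASK = (1 << BAND_BITS) - 1


def bands(h):
    """Split a 64-bit hash into (band_number, band_value) pairs."""
    return [(i, (h >> (i * BAND_BITS)) & MASK) for i in range(BANDS)]


class BandIndex:
    """Exact-match buckets per band, verified with a full Hamming check."""

    def __init__(self):
        self.buckets = defaultdict(set)   # (band_number, value) -> keys
        self.hashes = {}                  # key -> full hash

    def add(self, key, h):
        self.hashes[key] = h
        for band in bands(h):
            self.buckets[band].add(key)

    def query(self, h, max_distance=BANDS - 1):
        # The pigeonhole guarantee only holds below the number of bands.
        if max_distance >= BANDS:
            raise ValueError("max_distance must be smaller than BANDS")
        candidates = set()
        for band in bands(h):
            candidates |= self.buckets.get(band, set())
        # Verify candidates with the real distance using XOR + popcount.
        return sorted(k for k in candidates
                      if bin(self.hashes[k] ^ h).count("1") <= max_distance)
```

Only hashes that share at least one band with the query are ever compared in full, which avoids scanning the whole collection for small distance thresholds.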

Limitations

- Not a security feature and not collision resistant

- Large edits like heavy cropping, overlays, rotations, or major color changes can break similarity, depending on the algorithm

- Different algorithms have different sensitivity, so the Hamming distance threshold has to be tuned for each algorithm and dataset

- Vulnerable to intentional adversarial modifications if used for enforcement

Practical tips for using perceptual hashes

- Choose the algorithm based on expected edits. dHash and pHash are common starting points.

- Calibrate a similarity threshold with real examples from your dataset.

- Use a two-step pipeline for best accuracy: perceptual hash for fast candidate filtering, then a slower but more precise comparison method for final verification.

- Store hashes in a format that supports fast Hamming distance checks, such as 64-bit integers.
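The calibration and two-step tips can be sketched as follows, with hashes stored as plain integers. Everything here is illustrative: `calibrate_threshold` and `two_step_match` are hypothetical names, the labeled pairs are assumed to come from your own dataset, and `verify` stands for whatever stronger comparison you choose for the final check.

```python
def hamming(a: int, b: int) -> int:
    """Hamming distance between two integer hashes (XOR, then popcount)."""
    return bin(a ^ b).count("1")


def calibrate_threshold(labeled_pairs, bits=64):
    """Pick the smallest threshold that classifies the most labeled pairs
    correctly. labeled_pairs: iterable of (hash_a, hash_b, is_duplicate)."""
    scored = [(hamming(a, b), dup) for a, b, dup in labeled_pairs]
    best_t, best_correct = 0, -1
    for t in range(bits + 1):
        correct = sum((d <= t) == dup for d, dup in scored)
        if correct > best_correct:      # strict: keeps the smallest best t
            best_t, best_correct = t, correct
    return best_t


def two_step_match(query_hash, catalog, threshold, verify):
    """Step 1: cheap hash filter. Step 2: run the expensive verify()
    callable only on the surviving candidates.
    catalog: dict mapping key -> integer hash."""
    candidates = [key for key, h in catalog.items()
                  if hamming(query_hash, h) <= threshold]
    return [key for key in candidates if verify(key)]
```

Maximizing raw accuracy is only one possible objective; if false positives are costlier than misses in your workflow, a stricter rule for choosing the threshold may fit better.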

FAQ

What is a perceptual hash (pHash) in a face recognition search engine?

A perceptual hash (often loosely called pHash, although pHash also names the specific DCT-based algorithm) is a compact “fingerprint” of an image that stays similar when the image is resized, slightly compressed, mildly cropped, or color-adjusted. In face-recognition search pipelines, pHash is typically used for fast near-duplicate image detection (e.g., finding the same photo reuploaded in different qualities), while face embeddings handle “same person across different photos.”

How is a perceptual hash different from a face embedding used for face search?

A perceptual hash summarizes the overall visual appearance of an image (or sometimes a cropped face image) and is best at spotting near-duplicate files. A face embedding (face vector) is a biometric-style representation learned by a neural network to capture identity-related facial features, making it better for matching the same person across different photos, angles, lighting, and expressions.

What kinds of edits can break or weaken perceptual-hash matching in face-search workflows?

Perceptual hashes are usually tolerant of small changes (recompression, small resizes, slight color/contrast changes), but can fail when edits are large: heavy cropping (especially changing the face region), strong filters/beauty edits, major rotations, big overlays (text/watermarks), collages, or replacing backgrounds with aggressive AI edits. These changes can make two images of the same person look “far apart” in pHash space even if face embeddings would still match.

Why would a face recognition search engine use perceptual hashing at all if it already has face embeddings?

Perceptual hashing is computationally cheap and great for housekeeping tasks: deduplicating crawled images, grouping near-identical reposts, detecting the same photo in different sizes, and avoiding repeated processing of the same content. Face embeddings are more powerful for identity-style matching, but they’re typically more expensive to compute and compare at scale.

How might FaceCheck.ID (or similar tools) benefit from perceptual hashing in practice?

In a face-search tool, perceptual hashing can help cluster near-duplicate results (the same photo reposted across pages), reduce spammy repeats, and speed up indexing by skipping already-seen images. That can make results easier to review, while the actual “same person” matching is still primarily driven by face-recognition embeddings rather than pHash alone.
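As a rough illustration of the clustering idea (not a description of how FaceCheck.ID or any specific tool works), near-duplicate results can be grouped with a small union-find over pairwise Hamming distances. The function name and the dictionary-of-hashes input are assumptions of this sketch, and the pairwise loop is only suitable for small result sets.

```python
def cluster_near_duplicates(hashes, threshold):
    """Group keys whose integer hashes are within `threshold` bits of each
    other, linking groups transitively (single linkage) with union-find.
    hashes: dict mapping key -> integer hash."""
    keys = list(hashes)
    parent = {k: k for k in keys}

    def find(k):
        # Walk up to the root, shortening the path along the way.
        while parent[k] != k:
            parent[k] = parent[parent[k]]
            k = parent[k]
        return k

    for i, a in enumerate(keys):
        for b in keys[i + 1:]:
            if bin(hashes[a] ^ hashes[b]).count("1") <= threshold:
                parent[find(b)] = find(a)   # merge the two groups

    groups = {}
    for k in keys:
        groups.setdefault(find(k), []).append(k)
    return list(groups.values())
```

Each returned group can then be shown as a single result with its reposts collapsed underneath.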
