AI-Generated Image: Spotting Synthetic Faces

Synthetic faces have changed what a reverse image search can and cannot tell you. When someone runs a photo through FaceCheck.ID, one of the first questions worth asking is whether the face in the picture belongs to a real person at all, or whether it was produced by a generative model trained to invent plausible humans.

Why AI-generated faces matter for reverse image search

A face-search engine indexes faces that appear on public web pages. If a profile photo is AI-generated, it usually has no history. The same face will not appear on a LinkedIn page from 2018, in a tagged Facebook album, in a wedding announcement, or in a school yearbook scan. That absence is itself a signal. Real people who use the internet tend to leave a trail of reused images across forums, news mentions, employer pages, or old social profiles. A fabricated face often returns either zero meaningful matches or only matches on the exact platforms where the fake account was deployed.

This is why scam investigators increasingly use face search as a first filter. A romance-scam target who runs a suspect's photo through FaceCheck.ID and finds the same face attached to a different name on a stock-image site, or finds nothing at all despite the person claiming a long professional history, has learned something useful even without a positive identification.

Visual tells in synthetic faces

Diffusion models and GAN-based generators have known weak points. These artifacts show up less in newer models, but they are still common enough to flag:

- Earrings that do not match between left and right ears

- Background text that dissolves into nonsense letters

- Glasses frames that change thickness or fuse into the face

- Teeth with irregular spacing or impossible alignment

- Hair strands that merge into the background

- Skin that looks airbrushed and uniformly lit regardless of pose

- Symmetry that is too perfect, or eyes that focus at slightly different distances

Older GAN portraits also tended to center the eyes at fixed pixel coordinates, which is a giveaway when several "different" people from the same scam network share suspiciously similar framing.
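The fixed-eye-position tell comes down to simple arithmetic once eye coordinates are available. The sketch below assumes the eye centers were already extracted by some landmark detector (not shown) and normalized by image width and height. The function name, the sample coordinates, and the 0.01 threshold are illustrative assumptions, not values taken from any particular tool.

```python
from statistics import pstdev

def eye_position_spread(eye_coords):
    """Return the largest standard deviation across the four eye coordinates.

    eye_coords: list of (left_x, left_y, right_x, right_y) tuples, each value
    normalized to 0..1 by image width/height (hypothetical input format).
    """
    columns = zip(*eye_coords)  # group each coordinate across all images
    return max(pstdev(col) for col in columns)

# Hypothetical portraits from one suspected network: the eyes land in almost
# exactly the same place in every image.
suspect_set = [
    (0.375, 0.470, 0.625, 0.470),
    (0.376, 0.471, 0.624, 0.469),
    (0.374, 0.470, 0.626, 0.471),
]

# Hypothetical ordinary photos: framing varies from shot to shot.
ordinary_set = [
    (0.30, 0.40, 0.52, 0.41),
    (0.42, 0.55, 0.66, 0.53),
    (0.35, 0.33, 0.60, 0.36),
]

THRESHOLD = 0.01  # illustrative cutoff; would need tuning on real data

for name, coords in [("suspect", suspect_set), ("ordinary", ordinary_set)]:
    spread = eye_position_spread(coords)
    flag = "suspiciously uniform" if spread < THRESHOLD else "normal variation"
    print(f"{name}: spread={spread:.4f} -> {flag}")
```

Cropping or resizing by the platform can shift these coordinates, so a uniform spread is a prompt for closer inspection rather than proof of generation.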

How synthetic images interact with face-match confidence

Face-recognition systems compare embeddings, which are numerical descriptions of facial features. Two unrelated AI-generated faces can sometimes cluster closer to each other than two photos of the same real person taken years apart. This is partly because generative models tend to reproduce the most typical features in their training data, and partly because synthetic outputs lack the asymmetries and aging cues that distinguish real individuals.
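To make the embedding comparison concrete, here is a minimal sketch using cosine similarity, one common way to compare embeddings. The four-dimensional vectors are made-up toy values chosen to mirror the situation described above; real embeddings typically have hundreds of dimensions, and nothing here reflects how FaceCheck.ID computes its scores.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy embeddings (hypothetical values, for illustration only).
real_2015 = [0.9, 0.1, 0.3, 0.2]  # a real person, older photo
real_2024 = [0.6, 0.5, 0.1, 0.6]  # the same person, years later
synth_a = [0.5, 0.5, 0.5, 0.5]    # generated face A
synth_b = [0.5, 0.6, 0.5, 0.4]    # unrelated generated face B

same_person = cosine_similarity(real_2015, real_2024)
two_synthetics = cosine_similarity(synth_a, synth_b)

print(f"same real person, years apart: {same_person:.3f}")
print(f"two unrelated synthetic faces: {two_synthetics:.3f}")
```

With these toy values the two synthetic faces score higher than the two photos of the same real person, which is why a high similarity score alone should not be read as an identification.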

For someone reading FaceCheck.ID results, this has two implications. First, a synthetic face may produce weak, scattered matches across unrelated profiles, which can be mistaken for a genuine identification. Second, scam networks that reuse the same generated face across many fake accounts will produce strong, clustered matches across dating apps, crypto-investment pitches, and fake recruiter profiles. That clustering is often the actual fraud signature.
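The two result patterns can be told apart with a rough triage rule. The sketch below is only an illustration: the `Match` record, the 0..100 score scale, the 85.0 threshold, and the example site names are all hypothetical and do not describe FaceCheck.ID's actual output format.

```python
from dataclasses import dataclass

@dataclass
class Match:
    score: float  # similarity on a hypothetical 0..100 scale
    name: str     # name shown on the matched profile
    site: str     # site where the match was found

def describe_pattern(matches, strong=85.0):
    """Rough triage of a result list; the threshold is illustrative only."""
    if not matches:
        return "no matches: no visible online history"
    strong_hits = [m for m in matches if m.score >= strong]
    if not strong_hits:
        return "weak, scattered matches: treat as lookalike leads"
    names = {m.name for m in strong_hits}
    sites = {m.site for m in strong_hits}
    if len(names) > 1 and len(sites) > 1:
        return "same face under several names on several sites: possible reuse"
    return "strong matches consistent with one identity: verify sources"

# Hypothetical result lists.
scattered = [
    Match(61.0, "A. Jones", "forum.example"),
    Match(58.5, "M. Silva", "blog.example"),
]
clustered = [
    Match(97.2, "Daniel K.", "dating-app.example"),
    Match(96.8, "Mark T.", "crypto-pitch.example"),
    Match(95.9, "Daniel K.", "recruiter-site.example"),
]

print(describe_pattern(scattered))
print(describe_pattern(clustered))
```

A "possible reuse" result fits both a recycled synthetic face and a stolen photo of a real person, so the source pages still need to be opened and checked.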

Common contexts where AI-generated faces appear

- Romance and investment scam profiles on Tinder, Hinge, Bumble, and Instagram

- Fake LinkedIn recruiters used in job-offer phishing

- Sock-puppet accounts amplifying political content

- Fabricated "team" photos on shell-company websites

- Synthetic witnesses, experts, or testimonials in fake reviews

- Fake escort or adult-service ads built around stolen identities mixed with generated faces

What synthetic-face detection cannot prove

Identifying a face as AI-generated does not prove fraud by itself. Some people use generated avatars for legitimate privacy reasons, including journalists, activists, abuse survivors, and ordinary users who do not want their real face on a dating app. A synthetic profile picture is a reason to ask more questions, not a verdict.

The reverse is also true. A real photo on a profile does not prove the account is honest. Stolen photos of real people remain the most common form of catfishing, and face search is more effective at catching that pattern than at catching well-made synthetic faces with no online history. Treat AI-generation status as one input among several, alongside match consistency, profile age, language patterns, and the specific claims the account is making.

FAQ

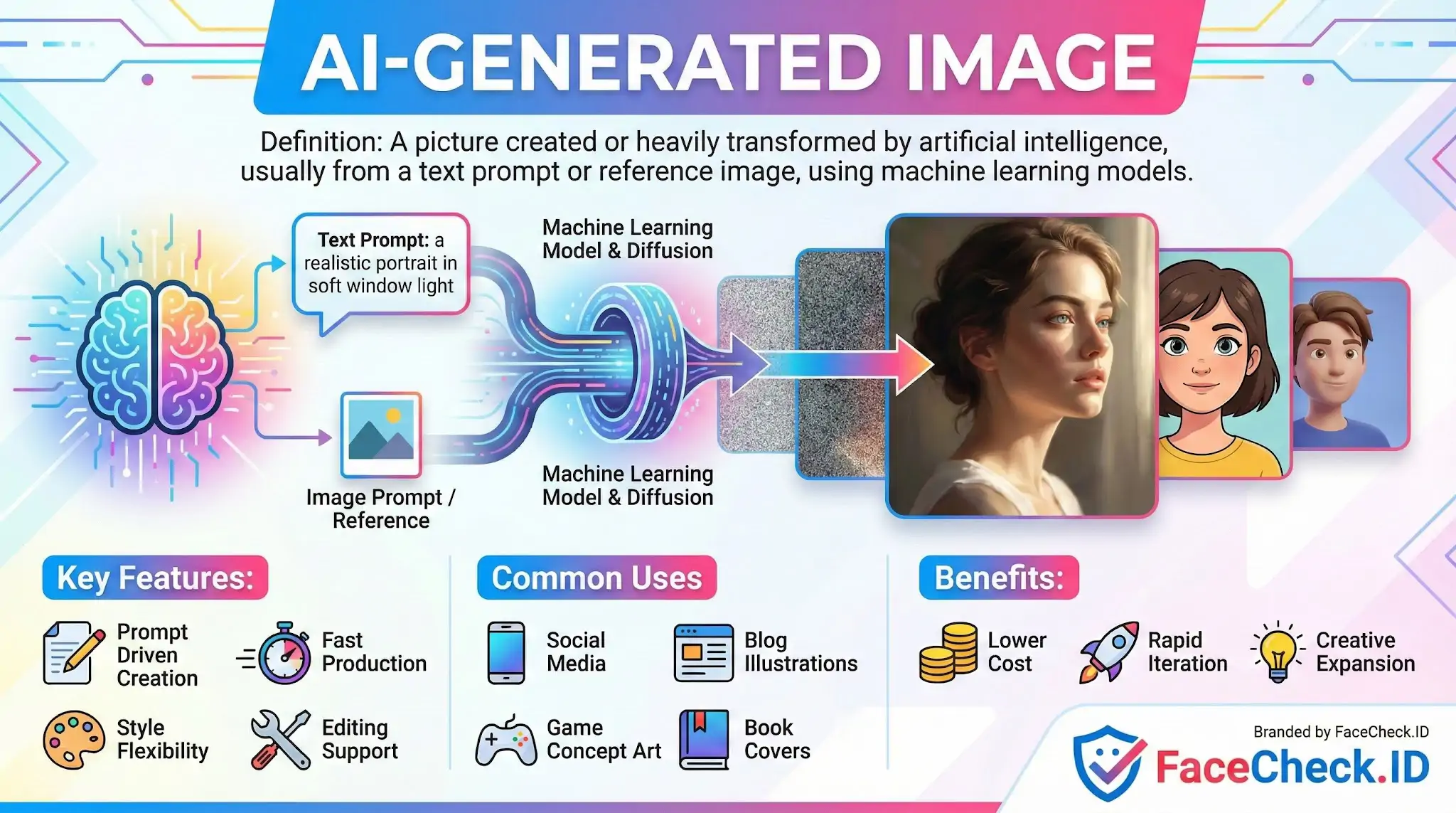

What is an AI-generated image in the context of face recognition search engines?

An AI-generated image is a synthetic picture created by a generative model (for example, a diffusion model or GAN) rather than captured by a camera. In face recognition search, an AI-generated face can look realistic but may represent a person who does not exist, or it may blend features from multiple real people—both of which can complicate how search engines interpret “who” the face belongs to.

Can an AI-generated face produce matches to real people in a face search engine?

Yes. Even if a face is fully synthetic, its facial geometry can still resemble real people closely enough that a face search engine may return similar-looking individuals (near matches). This does not prove the synthetic image depicts a real person; it often means the generated face landed near real faces in “face embedding” space, so results should be treated as leads and verified with additional context (source pages, timestamps, consistent identity signals).

How do AI-generated or heavily AI-edited portraits affect face-search reliability and false-match risk?

AI generation and AI “beautification” edits can smooth skin, alter eye shape, change facial proportions, or introduce artifacts that shift a face’s embedding. That can increase false positives (matching the wrong person), reduce true positives (missing the same person), and cause unstable results across searches. The risk is higher when the image is stylized, over-smoothed, low-resolution, or includes exaggerated features common in AI portraits.

What practical checks can help me spot an AI-generated image before relying on face search results?

Use a combination of checks: (1) zoom in for artifacts (asymmetric earrings, strange teeth, messy hair edges, inconsistent eyeglass frames, warped text/logos), (2) look for inconsistent lighting/shadows and unnatural skin texture, (3) review metadata and the upload history on the source page, (4) compare multiple images of the same claimed person for consistent facial details (moles, ear shape, teeth spacing), and (5) run both face search and traditional reverse image search to see whether there is a real-world posting trail. If the image appears only in newly created profiles or repeated across unrelated accounts, treat it as high-risk.

If I upload an AI-generated image to a tool like FaceCheck.ID, how should I interpret the results safely?

Assume results may be “similar face” leads rather than the same person. Prioritize verification steps: open each source page, check whether multiple independent sources consistently connect to the same identity, and confirm with non-face evidence (usernames, cross-posted bios, location consistency, older posts, and corroborating links). If results look scattered across unrelated people, treat the query image as potentially synthetic or heavily edited and avoid concluding that any returned person is the creator, owner, or “real identity” behind the AI-generated face.
