Deepfake Detector

A deepfake detector is a system that flags AI-generated or manipulated media before it is mistaken for a genuine image or video of a real person. For anyone using face search to confirm an identity, these tools matter because a convincing deepfake can poison the chain of evidence: a synthetic profile photo can spread across dating sites, scam accounts, and fake LinkedIn pages, and a reverse image search will dutifully match the fake against its own copies.

How deepfake detection intersects with face search

Face search engines index real faces from public web pages. When someone generates a synthetic face using a tool like StyleGAN or a diffusion model, that image may still get scraped, reposted, and indexed alongside genuine photos. A reverse face search on a romance scam profile, for example, often surfaces a cluster of dating sites, Instagram accounts, and Telegram channels all using the same AI-generated portrait. The matches are real in the sense that the image appears on those pages. The face is not.

A deepfake detector helps separate two possibilities that face search cannot distinguish on its own:

- Is this a real person whose photos have been reused elsewhere?

- Is this a synthetic face that was never attached to a real human?

Without that distinction, investigators can waste hours chasing a person who does not exist.

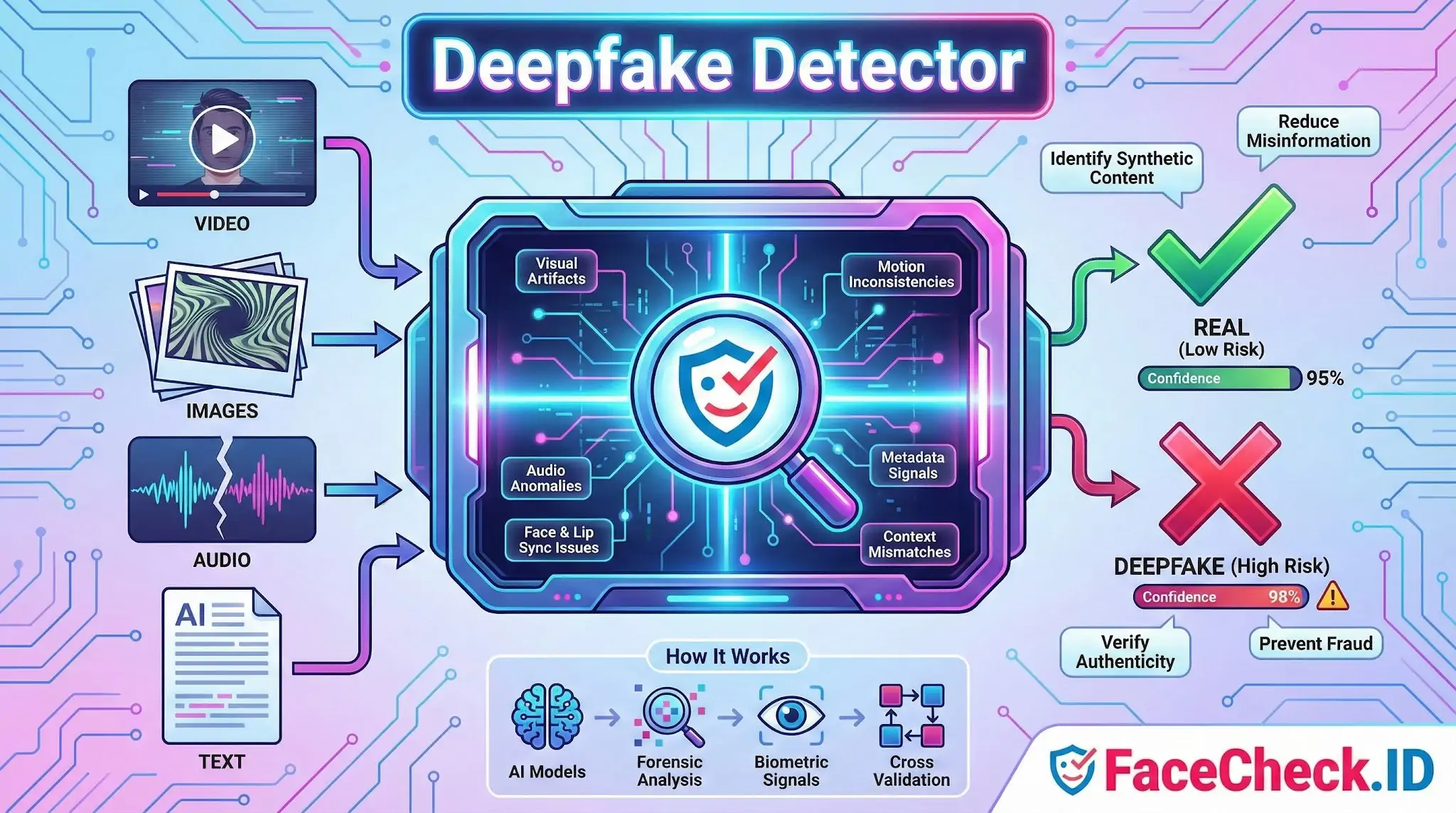

What detectors actually look for in face imagery

Modern detectors run classifier models trained on real and synthetic faces, and they tend to focus on artifacts that betray the generation process:

- Symmetry quirks in eyes, earrings, and glasses, since GANs often render mismatched pairs

- Inconsistent specular highlights in the pupils, which rarely line up correctly in synthetic faces

- Hairline edges and stray strands that blend unnaturally into the background

- Teeth that lack individual definition or repeat in pattern

- Skin texture that looks too smooth or too uniformly noisy

- Background warping where straight lines bend near the subject

For face-swapped video, detectors also examine temporal signals: blink rate, head-pose stability, and the seam where a swapped face meets the original neck and jawline. Diffusion-generated faces from tools like Midjourney and other recent models defeat many older detectors because the artifacts that flagged earlier GAN outputs are largely absent from them.
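Blink rate is usually measured from facial landmarks rather than read directly from pixels. One common approach is the eye aspect ratio (EAR): the sum of the eye's two vertical openings divided by twice its width, a value that drops sharply when the eye closes. The sketch below assumes six landmarks per eye per frame have already been extracted by some face-landmark model (not shown); the 0.2 threshold and the two-frame minimum are illustrative values, not settings taken from any particular detector.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def eye_aspect_ratio(eye: Sequence[Point]) -> float:
    """Eye aspect ratio for six landmarks ordered p1..p6.

    p1 and p4 are the eye corners; p2 and p3 sit on the upper lid,
    with p6 and p5 on the lower lid directly below them.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


def count_blinks(ear_series: Sequence[float], threshold: float = 0.2,
                 min_frames: int = 2) -> int:
    """Count runs of at least `min_frames` consecutive frames with EAR below `threshold`."""
    blinks = 0
    run = 0
    for ear in ear_series:
        if ear < threshold:
            run += 1  # eye currently closed (or nearly so)
        else:
            if run >= min_frames:
                blinks += 1  # a closed run just ended: count it as one blink
            run = 0
    if run >= min_frames:  # clip ended while the eye was still closed
        blinks += 1
    return blinks


def blinks_per_minute(ear_series: Sequence[float], fps: float, **kwargs) -> float:
    """Blink rate over the whole clip; extra kwargs are passed to count_blinks."""
    minutes = len(ear_series) / fps / 60.0
    if minutes == 0:
        return 0.0
    return count_blinks(ear_series, **kwargs) / minutes
```

In practice you would compute `eye_aspect_ratio` for each frame of a clip, pass the resulting list to `blinks_per_minute` with the clip's frame rate, and treat an unusually low or mechanically regular rate as a reason to look closer rather than as proof of a face swap.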

Where detectors help in face-search investigations

The practical use cases for someone working with face-search results:

- Confirming whether a profile photo on a dating app is a real person before treating any matches as identity leads

- Vetting executive headshots circulated in business email compromise attempts

- Checking suspicious images attached to mugshot reposts, missing-person notices, or news articles

- Triaging clusters of accounts that all use variations of the same face, a common pattern in catfishing rings

- Validating the source image before running it through a face search at all, since uploading a deepfake will only return matches to other copies of the deepfake

When a detector flags an image as likely synthetic and a face search returns matches only on low-quality forums, escort directories, or recently created social profiles, the combined signal is strong evidence of a fabricated identity.

Limits of deepfake detection in identity work

Detectors produce probabilities, not verdicts. A 78 percent synthetic score does not prove the photo is fake, and a low score does not prove it is real. Compression from re-uploading, screenshot capture, and platform resizing degrades the artifacts detectors rely on; a deepfake that has passed through three rounds of Instagram compression often scores closer to authentic than the original generated file.

Detectors also struggle with hybrid manipulations: a real photo with an AI-swapped face, an authentic face pasted onto a generated body, or a real image lightly retouched with AI tools. Face search matches across these images can produce contradictory signals, where parts of the picture trace to a real person and other parts do not.

A detector result should sit alongside other evidence: the age of the accounts where the face appears, whether matches predate the profile in question, EXIF data when available, and whether the same face shows up in unrelated contexts that would be hard to fabricate. Treating any single tool, including a face search engine or a deepfake detector, as proof of identity is how investigations go wrong.
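The timeline part of that cross-check can be made explicit. This is a minimal sketch assuming you have already recorded, for each face-search match, the page URL and the earliest date you could confirm the image there (from post timestamps or archive snapshots, for example); the `FaceMatch` record and both functions are hypothetical helpers, not the output format of any search engine.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional
from urllib.parse import urlparse


@dataclass
class FaceMatch:
    url: str          # page where the face appears
    first_seen: date  # earliest date you could confirm for it (hypothetical field)


def matches_predating(profile_created: date, matches: List[FaceMatch]) -> List[FaceMatch]:
    """Matches first seen strictly before the profile under review, oldest first."""
    older = [m for m in matches if m.first_seen < profile_created]
    return sorted(older, key=lambda m: m.first_seen)


def timeline_summary(profile_created: date, matches: List[FaceMatch]) -> dict:
    """Count older matches and the distinct hostnames they come from."""
    older = matches_predating(profile_created, matches)
    domains = {urlparse(m.url).netloc for m in older}
    earliest: Optional[date] = older[0].first_seen if older else None
    return {"older_matches": len(older), "older_domains": len(domains), "earliest": earliest}
```

Several older matches spread across unrelated sites suggest the face was circulating before the profile was created, so the profile is at least not where the image originated; zero older matches is consistent with a freshly generated face but proves nothing by itself. Counting hostnames is only a rough proxy for "unrelated contexts", since `www.example.com` and `example.com` are counted separately.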

FAQ

What is a Deepfake Detector in the context of face recognition search engines?

A Deepfake Detector is a set of techniques or tools used to assess whether a face image (or a video frame) may have been AI-generated, face-swapped, or heavily manipulated. In face recognition search engines, it’s used as a safety layer to flag inputs or results that could trigger misleading matches, such as linking a synthetic face to real people or connecting a real person to synthetic content.

Why is deepfake detection useful before running a face recognition search?

If the image is synthetic or face-swapped, a face search may return convincing-but-wrong “same person” hits, because the embedding can resemble multiple real identities. Detecting deepfake indicators first helps you treat matches as lower-confidence leads, avoid false accusations, and decide whether to search using a different (more reliable) photo.
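To make the embedding point concrete: face search engines generally convert each face into a numeric vector and rank indexed faces by their similarity to the query. The toy sketch below uses cosine similarity on two-dimensional vectors (real embeddings have far more dimensions) and a gallery keyed by identity name; the 0.05 margin is a hypothetical cut-off, and nothing here describes the internals of a specific service.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_identities(query: Sequence[float],
                    gallery: Dict[str, Sequence[float]]) -> List[Tuple[str, float]]:
    """Score every known identity against the query, best match first."""
    scored = [(name, cosine(query, emb)) for name, emb in gallery.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


def is_ambiguous(ranked: List[Tuple[str, float]], margin: float = 0.05) -> bool:
    """True when the top two identities score within `margin` of each other."""
    if len(ranked) < 2:
        return False
    return ranked[0][1] - ranked[1][1] < margin
```

When the top two identities score almost equally, as `A` and `B` do for a query of `[1.0, 0.0]` against `{"A": [1.0, 0.1], "B": [1.0, 0.12]}`, the best hit is a weak lead at most, which is exactly the situation a synthetic or face-swapped input tends to create.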

What kinds of deepfake or manipulation issues can distort face-search results?

Common distortions include face swaps, AI “beauty” edits, GAN- or diffusion-generated faces, heavy filters, aggressive retouching, and frames from low-quality or compressed videos. These can alter skin texture, eye and teeth details, or facial proportions, or add artifacts, sometimes making different people look more alike to the model and increasing wrong-person matches.

If I suspect a photo is a deepfake, what should I upload to a face recognition search engine instead?

Use a clean, high-resolution, front-facing photo from a credible source when possible (e.g., an original profile photo rather than a repost, meme, or screenshot). If your only source is a video, extract multiple frames (neutral expression, good lighting, minimal motion blur) and check whether the search results are consistent across frames. Deepfake-driven frames often produce unstable or contradictory hit patterns.
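The frame-comparison step can be made a little more systematic by measuring how much the top results overlap from one frame to the next. This sketch assumes you have saved each frame's ranked result URLs under a frame label; the Jaccard overlap measure, the `top_k=5` default, and the function names are illustrative choices, not part of any face search tool.

```python
from itertools import combinations
from typing import Dict, Sequence, Set


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Size of the intersection divided by size of the union (1.0 if both are empty)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def frame_consistency(results_by_frame: Dict[str, Sequence[str]], top_k: int = 5) -> float:
    """Mean pairwise Jaccard overlap of each frame's top-k result URLs."""
    tops = [set(results[:top_k]) for results in results_by_frame.values()]
    pairs = list(combinations(tops, 2))
    if not pairs:
        return 1.0  # fewer than two frames: nothing to compare
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)
```

A score near 1.0 means the frames keep returning the same pages; a score near 0 is the unstable or contradictory hit pattern described in this answer and a reason to distrust the source video. A high score does not certify the video as authentic.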

How should I use FaceCheck.ID results when deepfakes are a possibility?

Treat FaceCheck.ID (or any face search engine) results as investigative leads, not proof of identity, especially if the input could be manipulated. Cross-check top hits by opening the source pages, looking for independent corroboration (same person across multiple unrelated sites, consistent context, timestamps), and verifying non-face cues (tattoos, scars, clothing, event/location context). If results cluster around unrelated identities or switch dramatically when you change frames/photos, that’s a warning sign to suspect synthetic or altered media.
