North Korea IT Worker Scheme

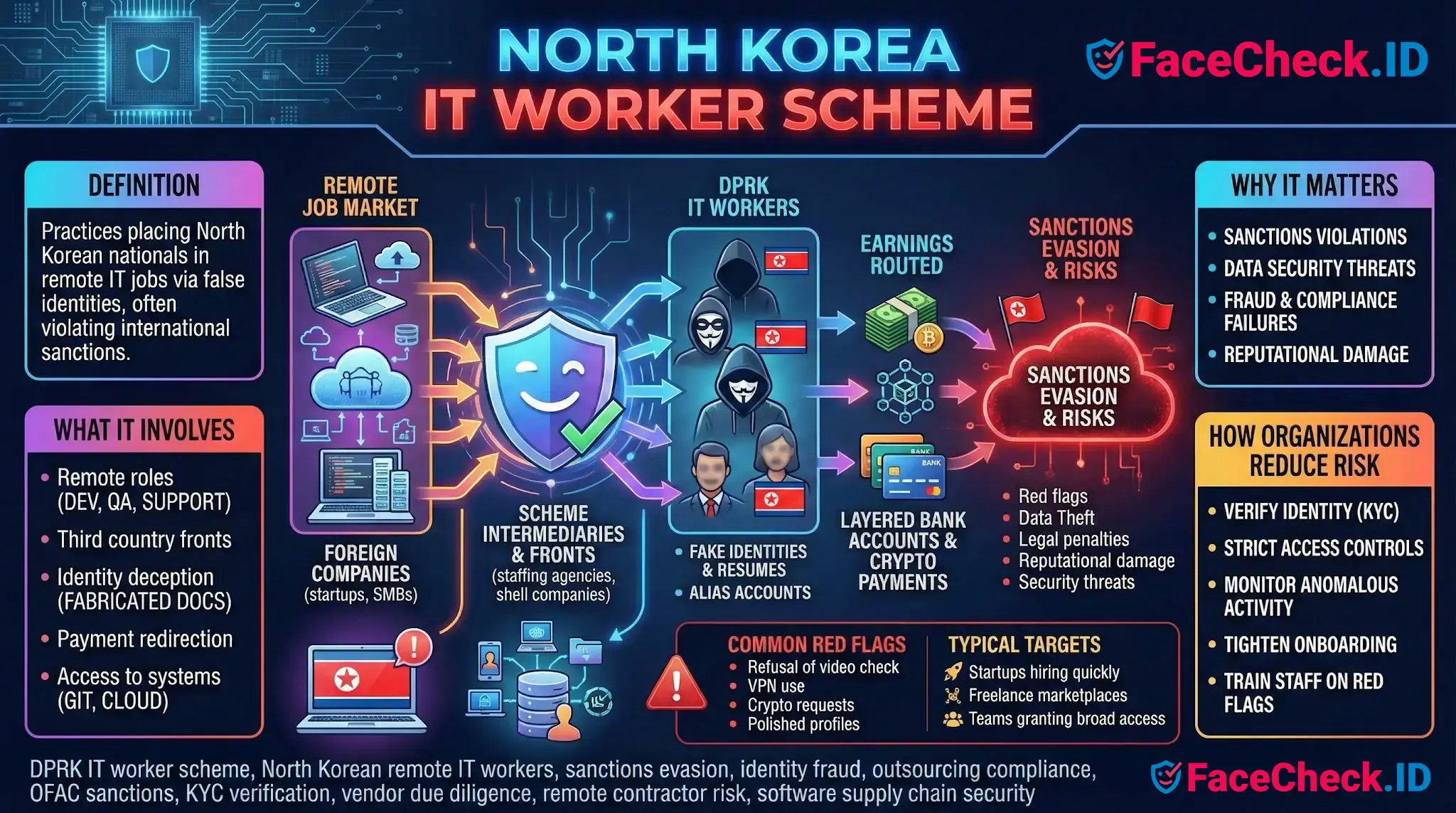

When recruiters screen remote engineering candidates, the photo on a resume or LinkedIn profile is often the only visual anchor they have. The North Korea IT worker scheme exploits exactly that weakness, pairing stolen or borrowed identities with stock-style headshots to place DPRK nationals into Western tech jobs. Reverse face search has become one of the few practical tools for catching these placements before they reach payroll and production systems.

How the scheme uses identity and imagery

Operators behind these placements rarely show their real faces. Instead, they assemble a working identity from parts: a stolen or rented US identity for tax paperwork, a fabricated resume, and a profile photo pulled from somewhere on the public web. The photo might be a real person whose LinkedIn was scraped, an AI-generated face, or a headshot lifted from a smaller agency website that recruiters are unlikely to recognize.

When the same image is reused across GitHub, LinkedIn, Upwork, and a personal portfolio site, a face search can surface that reuse pattern quickly. Common indicators include:

- A profile photo that also appears on an unrelated personal blog, dating site, or stock-photo source

- Multiple developer profiles under different names sharing the same face

- A face that matches an Instagram or Facebook account whose location, language, and timeline contradict the candidate's claimed background

- Headshots that produce zero matches anywhere, which can signal AI generation or a tightly controlled fake persona
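
The indicators above can be turned into a small triage helper. The following is a minimal sketch, not any tool's real API: the `Match` record shape, the `UNRELATED_SOURCES` platform labels, and the 0.85 similarity cutoff are all assumptions made for illustration. It only produces flags for a human reviewer and does not decide anything.

```python
from dataclasses import dataclass

# Hypothetical platform labels a reviewer might assign to matched pages.
UNRELATED_SOURCES = {"blog", "dating", "stock-photo"}


@dataclass(frozen=True)
class Match:
    """One hit from a face search (assumed record shape, not a real API)."""
    url: str
    platform: str       # e.g. "linkedin", "github", "stock-photo"
    display_name: str   # name shown on the matched page, may be empty
    similarity: float   # 0.0-1.0 score as reported by the tool


def reuse_indicators(matches, claimed_name, min_similarity=0.85):
    """Return human-readable flags for a reviewer; an empty list means none fired."""
    # The cutoff is an assumed value; real tools score matches differently.
    strong = [m for m in matches if m.similarity >= min_similarity]
    if not strong:
        return ["no strong matches: can indicate an AI-generated or tightly controlled persona"]

    flags = []
    claimed = claimed_name.strip().lower()
    names = {m.display_name.strip().lower() for m in strong if m.display_name.strip()}
    other_names = sorted(names - {claimed})
    if other_names:
        flags.append("same face under other names: " + ", ".join(other_names))

    unrelated = sorted({m.platform for m in strong if m.platform in UNRELATED_SOURCES})
    if unrelated:
        flags.append("photo also appears on unrelated sources: " + ", ".join(unrelated))
    return flags
```

Each flag is a prompt for manual review. An empty result, or a "no strong matches" result, is not a verdict either way.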

Where face search fits in candidate vetting

Recruiters and security teams increasingly run reverse face searches as a pre-hire check for remote roles, especially when video interviews are declined, brief, or visually inconsistent. The goal is not to identify the candidate with certainty but to test whether the photo on file is consistent with a single, traceable online identity.

A clean LinkedIn headshot that also appears on conference websites, a university page, and prior employer bios produces a coherent identity trail. A photo that appears only on freshly created profiles, or under several different names across freelance platforms, gives much weaker support for the claimed identity. In documented DPRK cases, investigators have found the same face attached to different aliases across job boards, sometimes across multiple staffing agencies that did not realize they were submitting the same person.

Some operational patterns fall outside what face search can catch, but they often appear alongside image red flags:

- Live video that never quite syncs with audio, or where the candidate refuses to share a clean front-facing view

- Shipping addresses that route to known laptop farms in the US Midwest

- Payment requests redirected to crypto wallets or third-party accounts after onboarding

- Code commits that cluster in UTC+8 or UTC+9 working hours regardless of the claimed location
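
The commit-timing pattern is the easiest of these to check mechanically. The sketch below assumes you already have commit timestamps as timezone-aware `datetime` objects; the function names and the 09:00 to 18:00 working window are illustrative assumptions. It compares how many commits fall inside working hours at the claimed UTC offset versus UTC+8 and UTC+9.

```python
from datetime import datetime, timedelta, timezone


def share_in_working_hours(commit_times, utc_offset_hours, start_hour=9, end_hour=18):
    """Fraction of commits whose local hour at the given offset is in [start_hour, end_hour).

    commit_times must be timezone-aware datetimes. Weekends and daylight
    saving time are ignored in this simplified sketch.
    """
    if not commit_times:
        return 0.0
    tz = timezone(timedelta(hours=utc_offset_hours))
    in_hours = sum(
        1 for t in commit_times if start_hour <= t.astimezone(tz).hour < end_hour
    )
    return in_hours / len(commit_times)


def compare_offsets(commit_times, claimed_offset, suspect_offsets=(8, 9)):
    """Map each UTC offset to its working-hours share so a reviewer can compare them."""
    offsets = (claimed_offset, *suspect_offsets)
    return {off: share_in_working_hours(commit_times, off) for off in offsets}


# Example: four commits between 01:00 and 04:00 UTC, candidate claims UTC-5.
commits = [datetime(2025, 3, 3, hour, 0, tzinfo=timezone.utc) for hour in (1, 2, 3, 4)]
print(compare_offsets(commits, claimed_offset=-5))
# {-5: 0.0, 8: 1.0, 9: 1.0}
```

A skew toward UTC+8 or UTC+9 is only a lead. Night-shift habits, travel, and scheduled or rebased commits can all produce the same distribution.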

Limits of face matching in this context

Face search results are evidence, not proof. A photo that appears nowhere online does not confirm fraud, since many real engineers keep a low public profile. A photo that appears on multiple sites does not confirm legitimacy either, because operators sometimes seed a fake identity across several platforms before applying. Lookalikes, family resemblance, and AI-generated faces that share features with real people all produce noisy results that need human review.

Face matching also cannot tell you who is sitting at the keyboard once a contractor is hired. A candidate can pass a video interview and then hand the laptop to someone else. For sensitive roles, image-based vetting needs to sit alongside live identity verification, payroll and banking checks, sanctions screening, and ongoing monitoring of access patterns.

The useful framing is narrow: a reverse face search helps confirm whether the visual identity attached to a candidate is consistent with a real, traceable person on the public web. It does not by itself determine nationality, intent, or sanctions exposure. Those questions still belong to legal, compliance, and security teams, with face-search findings feeding the investigation rather than closing it.

FAQ

What does the term “North Korea IT Worker Scheme” refer to in the context of face recognition search engines?

In this context, “North Korea IT Worker Scheme” typically describes suspected remote-work fraud in which an individual’s real identity, location, or employment eligibility is misrepresented, including through stolen, borrowed, or synthetic profile photos. Face recognition search engines are sometimes used as a screening aid to check whether the same face appears across unrelated profiles, aliases, or scam and fraud-report pages, without treating any match as proof.

How can a face recognition search engine help evaluate whether a job candidate photo might be stolen or reused across fake identities?

A face search can surface where the same face appears online, which may reveal patterns consistent with photo misuse: the identical face tied to multiple names, conflicting biographies, or reused headshots across many accounts. Tools such as FaceCheck.ID (and similar open-web face search engines) can provide leads to investigate, but you should corroborate with non-biometric checks (work history validation, references, domain email verification, live technical interview) before drawing conclusions.

What face-search result patterns should be treated as higher-risk signals in “North Korea IT worker scheme” discussions (without assuming guilt)?

Higher-risk signals are usually about inconsistency and reuse, not nationality: (1) one face matched to many different names/usernames across platforms; (2) the same headshot appearing on “for hire” profiles with incompatible skills, time zones, or employment histories; (3) repeated use of identical or near-identical profile photos across multiple newly created accounts; (4) matches that lead to obvious impersonation reports or takedown posts. Any of these can also have benign explanations (reposts, modeling portfolios, scraped images), so treat them as prompts for verification.

What are the main limitations of using face recognition search to investigate a suspected “North Korea IT Worker Scheme” profile?

Face search is not proof of identity and can be wrong because of look-alikes, low-quality images, heavy edits or filters, AI-generated faces, or outdated indexed pages. It may also miss matches if the person’s images are not publicly accessible or not indexed. Use results as investigative leads, document uncertainty, and avoid making employment decisions based solely on a face-match result.

If a face-search tool returns matches suggesting photo reuse in a hiring workflow, what is a safer, practical next step sequence?

A safer workflow is: (1) Save links/screenshots of the relevant matched pages and note dates/context; (2) Look for consistency across independent signals (same name, same work history, same public portfolio) rather than relying on face similarity alone; (3) Perform a live video verification step appropriate to your policy (e.g., real-time interview, role-specific technical screen) and verify employment eligibility through standard HR/legal processes; (4) If impersonation is likely, request clarification from the candidate and escalate to internal security/HR for policy-based handling; (5) If your own company’s or employee’s images are being misused, pursue takedowns/impersonation reports on the hosting platforms.
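
Step (1) of that workflow, saving links and noting dates and context, is easier to keep consistent with a fixed record format. The following is one possible sketch; the `EvidenceItem` fields and the `to_case_file` helper are hypothetical names, not part of any face-search product, and the layout should be adapted to your own case-management policy.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def _utc_now():
    """Current UTC time as an ISO 8601 string with second precision."""
    return datetime.now(timezone.utc).isoformat(timespec="seconds")


@dataclass
class EvidenceItem:
    """One saved match (assumed record shape for an internal case file)."""
    url: str
    context: str                 # what the page shows and why it matters
    screenshot_path: str = ""    # local path to a saved capture, if any
    captured_at: str = field(default_factory=_utc_now)


def to_case_file(candidate_ref, items):
    """Serialize the evidence list to JSON for handoff to security or HR."""
    payload = {"candidate_ref": candidate_ref, "items": [asdict(i) for i in items]}
    return json.dumps(payload, indent=2)
```

Recording the capture time alongside each link matters because matched pages are often edited or taken down after a candidate is questioned.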
