Perceptual Hash

When FaceCheck.ID crawls and indexes faces from across the public web, the same photo often appears in many places: a profile picture reused on five dating apps, a stolen headshot recompressed by a scam ring, a news photo cropped for a thumbnail. Perceptual hashing is one of the techniques that makes it possible to recognize these as variants of the same image rather than treating each copy as something new.

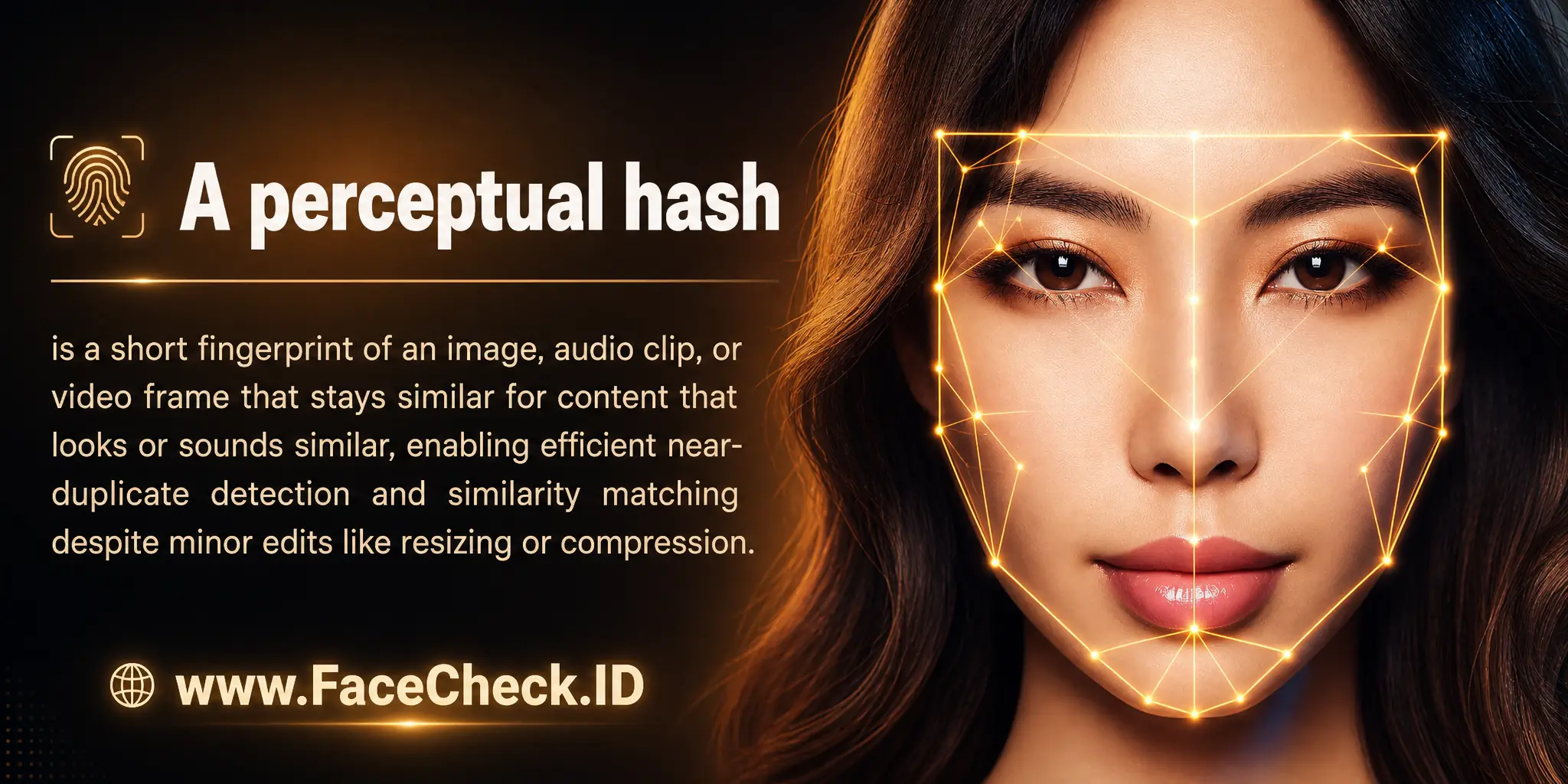

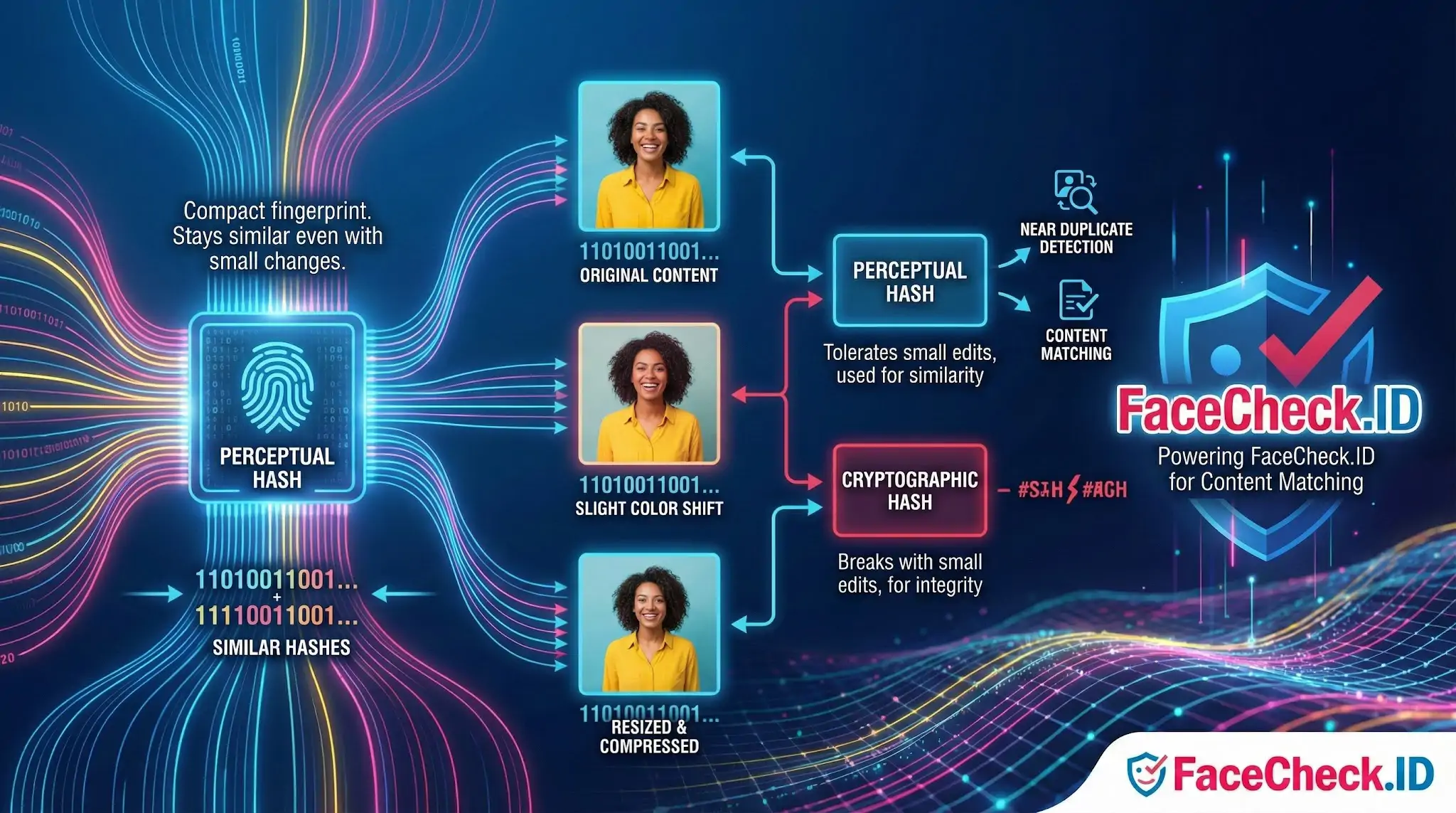

A perceptual hash is a short fingerprint of an image that stays similar when the picture looks similar. Resize a photo, recompress it as JPEG, shave a few pixels off the edge, or shift the colors slightly, and the hash barely changes. That tolerance is what separates it from cryptographic hashes like MD5 or SHA-256, where changing a single pixel produces a completely different output.
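The cryptographic side of that contrast can be seen with Python's standard `hashlib`. This is a minimal sketch: the byte strings are stand-ins for raw pixel data rather than real images, and the helper name `sha256_bits_changed` is made up for illustration.

```python
import hashlib


def sha256_bits_changed(a: bytes, b: bytes) -> int:
    """Count how many of the 256 digest bits differ between two inputs."""
    da = int.from_bytes(hashlib.sha256(a).digest(), "big")
    db = int.from_bytes(hashlib.sha256(b).digest(), "big")
    return bin(da ^ db).count("1")


# Stand-in for raw pixel data: 4096 mid-gray bytes (hypothetical image).
original = bytes([128] * 4096)
# The same data with the first byte changed from 128 to 129.
tweaked = bytes([129]) + original[1:]

print(sha256_bits_changed(original, original))  # 0
print(sha256_bits_changed(original, tweaked))
```

For a change this small, roughly half of the 256 digest bits are expected to flip, so the distance between two SHA-256 digests says nothing about how similar the inputs were.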

How perceptual hashing supports face search

A reverse face search engine does two different jobs. First, it has to recognize that a face in one photo is the same person as a face in another photo, even when the angle, expression, or lighting differs. That is the job of a face recognition model, which produces a face embedding rather than a perceptual hash. Second, the engine has to manage billions of indexed images efficiently, and that is where perceptual hashes earn their place.

Typical uses inside an image-search pipeline include:

- Collapsing exact and near-duplicate photos so the same image scraped from twelve mirror sites does not show up as twelve separate matches

- Detecting reposted content where a photo has been resized for a thumbnail, watermarked, or saved at lower quality

- Linking a profile picture on one platform to the identical picture on another, even when one copy has been recompressed

- Filtering crawler results to avoid reindexing pages whose images have not meaningfully changed
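The first of these uses, collapsing near-duplicates, might look roughly like the sketch below. It assumes each crawled image already has a 64-bit perceptual hash stored as an integer, and it treats two hashes as the same image when only a few bits differ. The URLs, hash values, function names, and the 8-bit threshold are all illustrative assumptions, not values from any real system.

```python
def hamming(a: int, b: int) -> int:
    """Number of bit positions where two integer hashes differ."""
    return bin(a ^ b).count("1")


def group_near_duplicates(hashes: dict, max_distance: int = 8) -> list:
    """Greedily group URLs whose hash is close to a group's first member."""
    groups = []  # each entry: (representative_hash, [urls])
    for url, h in hashes.items():
        for rep, members in groups:
            if hamming(rep, h) <= max_distance:
                members.append(url)
                break
        else:
            # No existing group is close enough, so start a new one.
            groups.append((h, [url]))
    return [members for _, members in groups]


# Hypothetical crawl results: URL -> precomputed 64-bit perceptual hash.
crawled = {
    "https://example.com/a.jpg": 0xF0F0F0F0F0F0F0F0,
    "https://mirror.example.net/a_small.jpg": 0xF0F0F0F0F0F0F0F5,  # 2 bits differ
    "https://example.org/other.jpg": 0x0F0F0F0F0F0F0F0F,  # all 64 bits differ
}

for group in group_near_duplicates(crawled):
    print(group)
```

The linear scan over groups keeps the example short. An index holding billions of hashes would need a faster lookup structure, which this sketch does not attempt.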

The hash itself is usually 64 bits, computed by normalizing the image to a small grayscale version, extracting a structural signature (averages, gradients, or DCT coefficients), and encoding the result as bits. Two hashes are compared by Hamming distance, which counts how many bits differ. Small distance, similar image.
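The steps in that paragraph can be sketched in pure Python using the gradient (dHash-style) signature. This is an illustration under stated assumptions, not a production implementation. The image is assumed to be already decoded to a grayscale grid (a list of rows of integers), the `shrink` helper uses simple block averaging in place of a real resampling filter, and the "right pixel brighter than left" bit convention is one of several in use.

```python
def shrink(pixels, out_w, out_h):
    """Downscale a grayscale grid (list of rows) by averaging blocks."""
    in_h, in_w = len(pixels), len(pixels[0])
    small = []
    for r in range(out_h):
        r0 = r * in_h // out_h
        r1 = max((r + 1) * in_h // out_h, r0 + 1)
        row = []
        for c in range(out_w):
            c0 = c * in_w // out_w
            c1 = max((c + 1) * in_w // out_w, c0 + 1)
            block = [pixels[y][x] for y in range(r0, r1) for x in range(c0, c1)]
            row.append(sum(block) / len(block))
        small.append(row)
    return small


def dhash(pixels, size=8):
    """Gradient hash: size*size bits, one per horizontally adjacent pair."""
    small = shrink(pixels, size + 1, size)  # 9 columns give 8 comparisons per row
    bits = 0
    for row in small:
        for left, right in zip(row, row[1:]):
            bits = (bits << 1) | (1 if right > left else 0)
    return bits


def hamming(a, b):
    """Count the bits that differ between two hashes."""
    return bin(a ^ b).count("1")


# Synthetic 72x64 grayscale "photo" (values 0-199), standing in for decoded pixels.
photo = [[(x * 7 + y * 13) % 200 for x in range(72)] for y in range(64)]
# The same picture with every pixel brightened by 40 (no clipping at 255).
brighter = [[v + 40 for v in row] for row in photo]

print(f"{dhash(photo):016x}")
# 0: a uniform brightness shift keeps every left/right comparison intact.
print(hamming(dhash(photo), dhash(brighter)))
```

A real pipeline would decode the file and convert it to grayscale with an image library before this step. The sketch skips that to stay dependency-free.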

Perceptual hash versus face embedding

These two concepts often get confused, but they answer different questions.

A perceptual hash answers: is this the same image, possibly modified? It looks at pixels, brightness, and structure. Two different photos of the same person, taken minutes apart, will produce very different perceptual hashes.

A face embedding answers: is this the same person? It is a vector produced by a neural network trained on faces, and it stays close even across different photos, ages, hairstyles, or lighting.

In a face-search system, perceptual hashing handles deduplication and image-level matching, while face embeddings handle identity-level matching. A scammer who reuses a stolen model headshot across ten fake profiles will trigger both: matching perceptual hashes indicate that the profiles use the same source image (possibly resized or recompressed), and the face embedding confirms it is the same face.
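The division of labor can be illustrated with a toy comparison that checks both signals for a pair of images. Everything here is hypothetical: the 4-dimensional vectors stand in for real face embeddings (which usually have hundreds of dimensions), the hash values are invented, and the thresholds `max_bits=8` and `min_cosine=0.6` are placeholders rather than tuned values.

```python
import math


def hamming(a: int, b: int) -> int:
    """Bits that differ between two perceptual hashes."""
    return bin(a ^ b).count("1")


def cosine_similarity(u, v):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm


def classify(hash_a, hash_b, emb_a, emb_b, max_bits=8, min_cosine=0.6):
    """Label a pair using both signals. Thresholds are illustrative only."""
    same_image = hamming(hash_a, hash_b) <= max_bits
    same_face = cosine_similarity(emb_a, emb_b) >= min_cosine
    if same_image and same_face:
        return "same image, same face"
    if same_face:
        return "different image, same face"
    if same_image:
        return "same image, face mismatch (inspect manually)"
    return "no match"


# Toy embeddings: person_a and person_a_again point in nearly the same direction.
person_a = [0.9, 0.1, 0.3, 0.2]
person_a_again = [0.85, 0.15, 0.35, 0.2]
person_b = [0.1, 0.9, -0.2, 0.3]

# Reused headshot: hashes differ by 2 bits, embeddings nearly identical.
print(classify(0xF0F0F0F0F0F0F0F0, 0xF0F0F0F0F0F0F0F5, person_a, person_a_again))
# New photo of the same person: hashes far apart, embeddings still close.
print(classify(0xF0F0F0F0F0F0F0F0, 0x0F0F0F0F0F0F0F0F, person_a, person_a_again))
# Unrelated photo of someone else.
print(classify(0xF0F0F0F0F0F0F0F0, 0x0F0F0F0F0F0F0F0F, person_a, person_b))
```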

Common algorithms and where they fit

- aHash (average hash): compares each pixel to the image average. Fast, but easily fooled by lighting changes.

- dHash (difference hash): encodes brightness gradients between adjacent pixels. Holds up well against resizing and compression.

- pHash (DCT-based): focuses on low-frequency components from a discrete cosine transform. More resistant to compression artifacts and minor edits.

- Wavelet hash: captures multi-scale structure, useful for richer similarity tasks.

For investigative work, dHash and pHash are usually the practical defaults.
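The difference between aHash and dHash under uneven lighting can be shown on a deliberately contrived grid. The sketch assumes the image has already been reduced to a small grayscale grid, so the resize step is omitted. The stripe pattern and the lighting ramp are made-up data chosen so the effect is easy to see.

```python
def ahash(small):
    """Average hash: 1 where a pixel is brighter than the grid mean."""
    flat = [v for row in small for v in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for v in flat:
        bits = (bits << 1) | (1 if v > mean else 0)
    return bits


def dhash(small):
    """Difference hash: 1 where a pixel is brighter than its left neighbor."""
    bits = 0
    for row in small:
        for left, right in zip(row, row[1:]):
            bits = (bits << 1) | (1 if right > left else 0)
    return bits


def hamming(a, b):
    """Bits that differ between two hashes."""
    return bin(a ^ b).count("1")


def first8(grid):
    """Keep the first 8 columns so aHash sees an 8x8 grid (64 bits)."""
    return [row[:8] for row in grid]


# Already-downscaled 8-row x 9-column grid of vertical stripes (hypothetical data).
stripes = [[120 if x % 2 == 0 else 100 for x in range(9)] for _ in range(8)]
# The same stripes under uneven lighting: brightness rises by 10 per column.
lit = [[v + 10 * x for x, v in enumerate(row)] for row in stripes]

print(hamming(ahash(first8(stripes)), ahash(first8(lit))))  # 32
print(hamming(dhash(stripes), dhash(lit)))                  # 0
```

In this example the lighting ramp moves half of the aHash bits, while every neighbor comparison in dHash survives because the stripe contrast (20) exceeds the per-column ramp (10). A steeper ramp would disturb dHash as well, so this shows a tendency rather than a guarantee.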

What perceptual hashing cannot tell you

A matching perceptual hash is strong evidence that two image files derive from the same source image, but it is not proof of identity, ownership, or intent. The same photo can produce matching hashes on a real LinkedIn profile and on a romance scam profile, and the hash alone does not tell you which came first or who actually owns the face.

The technique also breaks down under heavier edits. Tight crops to the face, rotation, mirroring, large overlays, deepfake retouching, and intentional adversarial noise can all push the Hamming distance past any reasonable threshold. Two photos of the same person taken seconds apart will not match by perceptual hash at all, because the pixels are different even if the face is the same.

That is why face-search results should be read as leads. A perceptual-hash match means the same image is circulating, possibly resized or re-encoded. A face-embedding match means the same face is appearing. Confirming who that person actually is, and whether a given account is legitimate, still requires human judgment and corroborating evidence.

FAQ

What is a perceptual hash (pHash) in a face recognition search engine?

A perceptual hash is a compact “fingerprint” of an image that stays similar when the image is resized, slightly compressed, mildly cropped, or color-adjusted. The term pHash is often used loosely for any perceptual hash, although strictly it names the DCT-based variant. In face-recognition search pipelines, perceptual hashing is typically used for fast near-duplicate image detection (e.g., finding the same photo reuploaded in different qualities), while face embeddings handle “same person across different photos.”

How is a perceptual hash different from a face embedding used for face search?

A perceptual hash summarizes the overall visual appearance of an image (or sometimes a cropped face image) and is best at spotting near-duplicate files. A face embedding (face vector) is a biometric-style representation learned by a neural network to capture identity-related facial features, making it better for matching the same person across different photos, angles, lighting, and expressions.

What kinds of edits can break or weaken perceptual-hash matching in face-search workflows?

Perceptual hashes are usually tolerant of small changes (recompression, small resizes, slight color/contrast changes), but can fail when edits are large: heavy cropping (especially changing the face region), strong filters/beauty edits, major rotations, big overlays (text/watermarks), collages, or replacing backgrounds with aggressive AI edits. These changes can make two images of the same person look “far apart” in pHash space even if face embeddings would still match.

Why would a face recognition search engine use perceptual hashing at all if it already has face embeddings?

Perceptual hashing is computationally cheap and great for housekeeping tasks: deduplicating crawled images, grouping near-identical reposts, detecting the same photo in different sizes, and avoiding repeated processing of the same content. Face embeddings are more powerful for identity-style matching, but they’re typically more expensive to compute and compare at scale.

How might FaceCheck.ID (or similar tools) benefit from perceptual hashing in practice?

In a face-search tool, perceptual hashing can help cluster near-duplicate results (the same photo reposted across pages), reduce spammy repeats, and speed up indexing by skipping already-seen images. That can make results easier to review—while the actual “same person” matching is still primarily driven by face-recognition embeddings rather than pHash alone.
