Synthetic Identity: Spotting Fake Faces

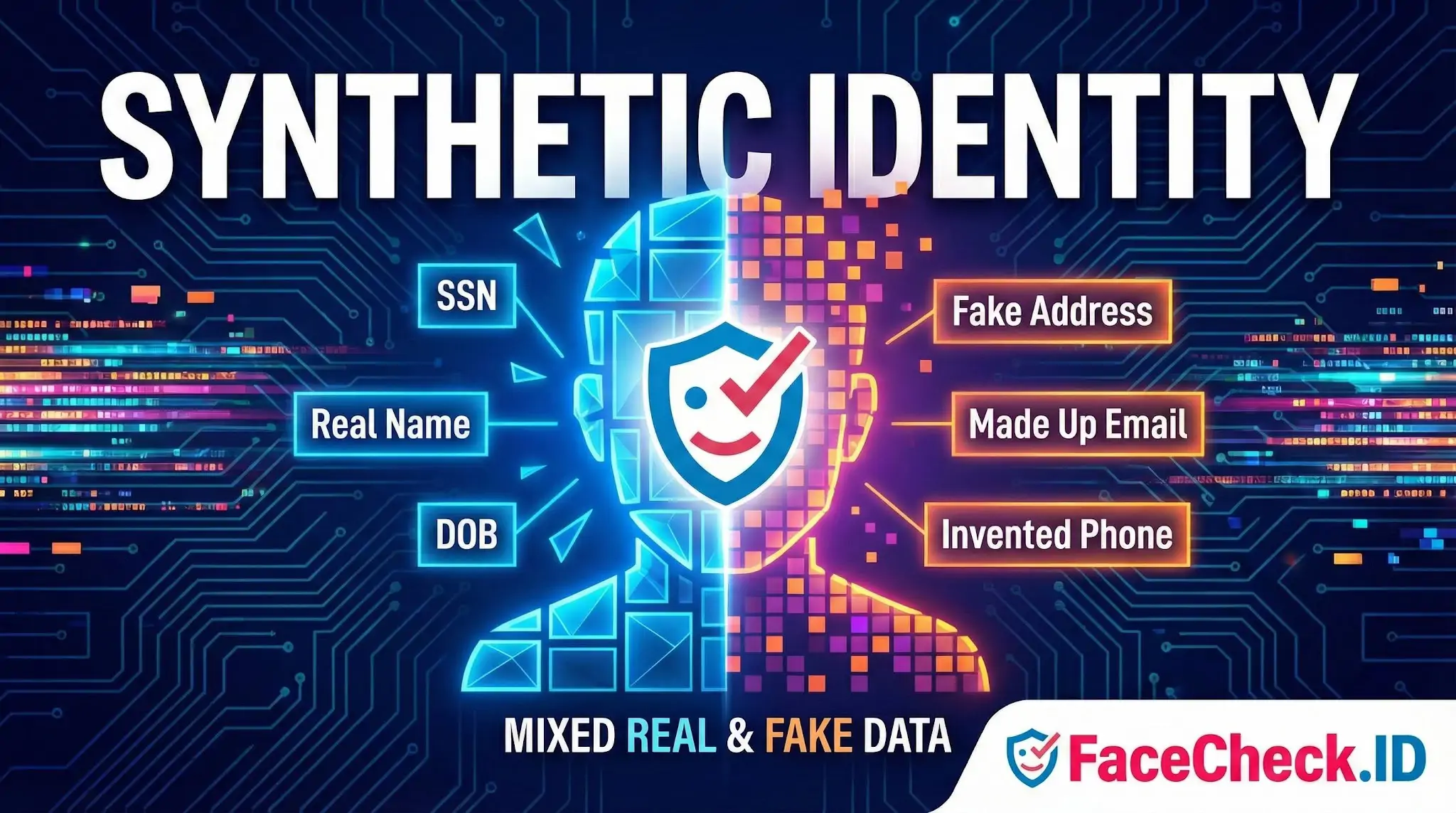

A synthetic identity is a fabricated person built from a mix of real and invented data, designed to pass identity checks and accumulate a believable history online. For anyone running a face search, synthetic identities are among the harder cases to spot, because the photos attached to them are realistic faces that were stolen, generated, or repurposed from somewhere else on the web.

How synthetic identities show up in face search

When someone builds a synthetic identity, they almost always need a face to go with it. That face has to come from somewhere, and reverse face search is one of the few tools that can expose where. The patterns tend to fall into a few categories:

- A real person's photo lifted from a public profile, often a low-follower Instagram, an old MySpace account, or a regional dating site, then reattached to a fake name on a different platform

- A stock or modeling photo reused across many "people," which a face search will surface as the same face appearing on multiple unrelated sites

- AI-generated faces from tools like StyleGAN or modern diffusion models, which often produce no matches at all because the face has no prior public history

- Lightly edited photos, such as cropped, mirrored, color-shifted, or aged versions of a real source image, used to evade exact-image hash matching

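A face search can surface the first two patterns quickly, because the reused face turns up on other sites. The third shows up as an absence of matches rather than a hit. The fourth depends on how robust the underlying matcher is to small geometric and color changes.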
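To illustrate why light edits defeat exact-image hash matching, here is a minimal sketch. It compares a byte-level SHA-256 digest with a toy "average hash" on a hypothetical 4x4 grayscale grid. The grid, the helper names, and the average-hash technique are assumptions chosen for illustration. This is not how any particular face search engine works; those typically rely on learned face embeddings.

```python
import hashlib

def exact_hash(pixels):
    """SHA-256 over raw pixel bytes: any single changed value alters the digest."""
    flat = bytes(v for row in pixels for v in row)
    return hashlib.sha256(flat).hexdigest()

def average_hash(pixels):
    """Toy perceptual hash: one bit per pixel, set when the pixel is brighter than the mean."""
    flat = [v for row in pixels for v in row]
    mean = sum(flat) / len(flat)
    return tuple(1 if v > mean else 0 for v in flat)

def hamming(a, b):
    """Number of positions where two bit tuples differ."""
    return sum(x != y for x, y in zip(a, b))

# Hypothetical 4x4 grayscale "image" (values 0-255), standing in for a downscaled photo.
original = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [12, 22, 202, 212],
    [18, 28, 208, 218],
]
brightened = [[min(255, v + 10) for v in row] for row in original]  # color shift
mirrored = [list(reversed(row)) for row in original]                # horizontal flip

same_exact = exact_hash(original) == exact_hash(brightened)
shift_distance = hamming(average_hash(original), average_hash(brightened))
mirror_distance = hamming(average_hash(original), average_hash(mirrored))

print(same_exact, shift_distance, mirror_distance)
```

In this toy case the brightness shift changes the exact digest but leaves the average hash untouched, while mirroring flips every bit of it. This shows the dependency described above: whether an edited photo still matches comes down to which transformations the matcher tolerates.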

Why face matching matters for synthetic identity detection

Traditional fraud controls look at SSNs, addresses, devices, and credit behavior. They rarely look at the photo itself, which is often the weakest link in the fake identity. A synthetic identity using a stolen face leaves a trail of public images that predate the fraud account by years. That trail is hard to fake.

Concrete signals that face search adds to the picture include:

- The same face appearing under multiple names across LinkedIn, dating sites, and crypto-related Telegram groups

- A "new" person whose face traces back to a real profile in a different country or language

- A profile photo with no reverse-image footprint at all, which can suggest either a private individual or an AI-generated face

- Romance-scam images that reappear on scam-report aggregators and stolen-photo databases

For investigators, KYC analysts, and individuals vetting someone they met online, this kind of cross-site face evidence is often more revealing than document checks. A forged passport can match a fake name. A face cannot easily be detached from its public history.
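Two of the signals above, one face under several names and public images that predate the account, can be checked mechanically once search hits are collected. The sketch below assumes a hypothetical `Match` record with a page URL, the name shown on that page, and the earliest date the image was seen there. None of these fields come from a real API, and the example data is invented.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class Match:
    url: str            # page where the face was found (hypothetical field)
    display_name: str   # name shown next to the face on that page
    first_seen: date    # earliest known date for the image on that page

def reuse_signals(matches, account_created):
    """Summarize name reuse and image age relative to the account under review."""
    names = {m.display_name.strip().lower() for m in matches}
    earliest = min((m.first_seen for m in matches), default=None)
    return {
        "distinct_names": len(names),
        "multiple_names": len(names) > 1,
        "predates_account": earliest is not None and earliest < account_created,
    }

# Invented example data: the same face under two names, one image years older than the account.
hits = [
    Match("https://example.com/profile/1", "Anna K.", date(2016, 5, 1)),
    Match("https://example.org/dating/77", "Maria L.", date(2023, 2, 10)),
    Match("https://example.net/u/anna", "anna k.", date(2019, 8, 3)),
]
signals = reuse_signals(hits, account_created=date(2024, 1, 15))
print(signals)
```

The output is a set of leads to check by hand, not a verdict. A person can legitimately use different display names on different sites.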

Generated faces and the limits of reverse search

AI-generated faces complicate things. A face produced by a generative model has no source image on the public web, so a reverse face search typically returns nothing. This is sometimes mistaken for a clean result, but it should not be. When a working-age adult has zero face matches anywhere (no tagged photos, no archived profiles, no event coverage), that absence is itself a weak signal that the identity may be synthetic.

Some tells that often accompany generated faces:

- Mismatched earrings, asymmetric glasses frames, or slightly warped backgrounds

- A perfectly centered, front-facing pose with neutral lighting

- No second photo of the same person ever appearing online

- Inconsistent micro-features between two supposed photos of "the same" individual

Face search cannot definitively label a face as AI-generated, but the absence of any web footprint, combined with these visual cues, is a meaningful flag.

What face search can and cannot prove

A reverse face search can show that a photo used by a synthetic identity also appears on a real, older, unrelated profile. That is strong circumstantial evidence of misuse. It does not, on its own, prove fraud. The matched person could be the same individual under a different handle, a sibling, a lookalike, or someone whose photo was stolen without their knowledge.

Conversely, a clean search result does not prove an identity is real. It may mean the face is generated, the source images are behind logins, or the search index does not yet cover the relevant sites. Synthetic identity detection works best when face evidence is combined with device data, behavioral signals, and document verification rather than treated as a standalone verdict.
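One way to keep face evidence from becoming a standalone verdict is to make it a single input to a triage rule. The sketch below is purely illustrative. The four boolean inputs, the label names, and the rule itself are assumptions, not an established scoring scheme.

```python
def triage(face_reuse, device_risk, behavior_risk, document_mismatch):
    """Map four boolean signals (all hypothetical) to a review label.

    Face evidence alone never escalates, and a clean face result
    does not clear an identity if other signals fire.
    """
    other = sum([device_risk, behavior_risk, document_mismatch])
    if face_reuse and other >= 1:
        return "escalate"   # face evidence corroborated by another signal
    if face_reuse or other >= 2:
        return "review"     # a lead worth a manual look
    if other == 1:
        return "monitor"
    return "no_flags"

print(triage(True, False, False, False))   # face match only
print(triage(False, True, True, False))    # clean face result, other signals present
```

The design choice here is that a face match without corroboration stays a lead for manual review, and an empty face result cannot override risk seen elsewhere.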

FAQ

What is a “Synthetic Identity” in the context of face recognition search engines?

In face recognition search engines, a “Synthetic Identity” typically refers to a fabricated or blended persona where the “person” presented online is not a real, single individual—often created using AI-generated faces, face swaps, heavy editing, or by combining details from multiple real people. The goal is usually to look authentic enough to pass casual scrutiny while being hard to trace to a real person.

What face-search result patterns can suggest a Synthetic Identity (without proving it)?

Common warning patterns include: (1) the same face appearing across many unrelated names/usernames, (2) matches that cluster around “stock-photo-like” portraits or identical studio-style headshots on different sites, (3) inconsistent ages/ethnic cues/face shape across top matches, and (4) many near-matches that look extremely similar but never resolve to a consistent real-world footprint (e.g., no credible long-term accounts, friends, or history). These patterns are signals to investigate further—not proof.

Why can a Synthetic Identity produce confusing or mixed matches in face recognition search?

Synthetic identities often use AI-generated or heavily edited images that can sit “between” many real faces in feature space, leading to multiple plausible near-matches. Face swaps and beautification filters can also shift key facial landmarks and skin texture cues, so the search engine may return a blend of look-alikes, edited variants, or different people who share similar facial structure.
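The "between faces" effect can be shown with toy numbers. The sketch below uses hypothetical 3-dimensional vectors in place of real face embeddings, which are learned and much higher-dimensional. The 0.6 match threshold is likewise an assumption for illustration.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy embeddings for two unrelated real people (hypothetical values).
person_a = [1.0, 0.0, 0.0]
person_b = [0.0, 1.0, 0.0]
# A blended face modeled as the midpoint of the two embeddings.
blend = [(x + y) / 2 for x, y in zip(person_a, person_b)]

THRESHOLD = 0.6  # hypothetical match threshold
sim_a = cosine(blend, person_a)
sim_b = cosine(blend, person_b)
sim_ab = cosine(person_a, person_b)

print(round(sim_a, 3), round(sim_b, 3), sim_ab)
```

In this toy setup the blend clears the threshold against both people even though the two people do not match each other, which is how one synthetic image can return several plausible but unrelated near-matches.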

How can I use a face recognition search engine to check whether a profile photo might be synthetic or stolen?

Use multiple photos from the same profile (not just one) and compare whether results converge on the same real person/source. Open several high-ranking results and look for consistency: the same name, timeline, location, and long-running presence. If results scatter across unrelated identities, treat it as higher risk and corroborate with non-face signals (reverse image search for exact duplicates, account history, cross-platform consistency, and direct verification steps appropriate to your situation).
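The convergence check can be sketched as a small helper. It assumes you have already reduced each photo's search results to a ranked list of identity labels, such as the profile or source each hit appears to belong to. The function name, the labels, and the `top_k` cutoff are hypothetical.

```python
from collections import Counter

def convergence(results_per_photo, top_k=3):
    """Return (most common label, share of photos whose top_k results contain it)."""
    tops = [set(ranked[:top_k]) for ranked in results_per_photo if ranked]
    if not tops:
        return None, 0.0
    counts = Counter(label for labels in tops for label in labels)
    # Sort first so ties resolve alphabetically instead of by set iteration order.
    best = max(sorted(counts), key=counts.__getitem__)
    return best, counts[best] / len(tops)

# Three photos from one profile whose results agree on a single source.
converging = convergence([
    ["profile_A", "profile_X"],
    ["profile_A", "profile_Y"],
    ["profile_Z", "profile_A"],
])
# Three photos whose results point at unrelated identities.
scattered = convergence([
    ["profile_A"],
    ["profile_B"],
    ["profile_C"],
])
print(converging, scattered)
```

A share near 1.0 means the photos agree on one source. A low share is the scattered case described above and calls for corroboration with non-face signals.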

How does FaceCheck.ID add value when investigating a possible Synthetic Identity?

Tools like FaceCheck.ID can help by finding where a face (or close variants of it) appears across the web, which can reveal reuse patterns—such as the same face tied to multiple identities, repeated across scam/report pages, or circulating as a “template” image. The safest approach is to treat any FaceCheck.ID match as an investigative lead: verify each source page, prioritize original uploads over reposts/screenshots, and avoid concluding identity from a face match alone.
