Pig Butchering: Spotting Stolen Photos

Pig butchering scams almost always begin with a stolen face. Before any money is discussed, the scammer has built a fake identity using photos lifted from real people, often models, military personnel, doctors, or expats with active social profiles. Reverse face search is one of the few tools that can break the illusion early, because it can show the same face appearing under a different name on Instagram, LinkedIn, or a news article years before the scammer claimed that identity.

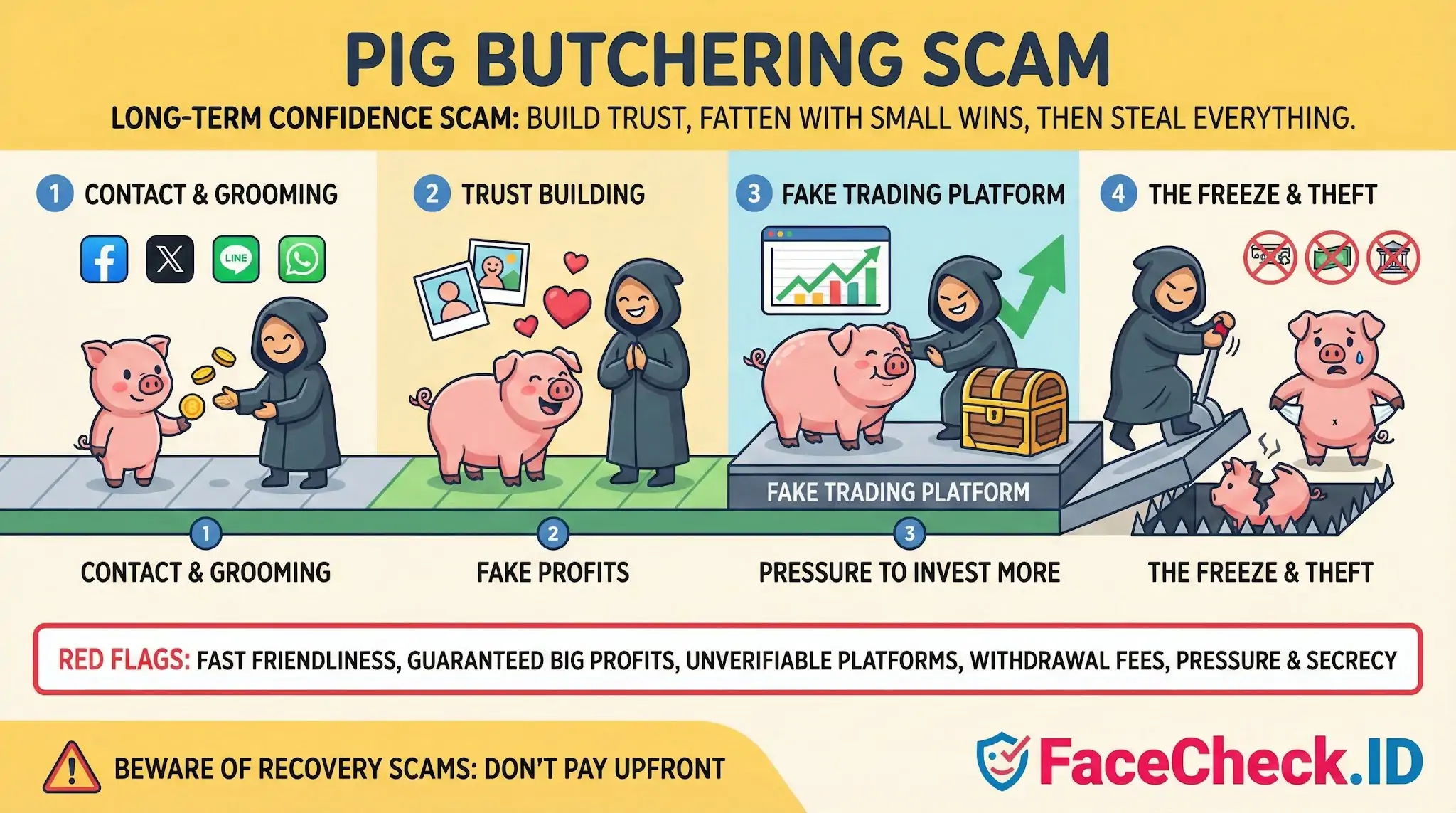

How stolen photos power the long con

The scam, also called sha zhu pan, runs on emotional grooming. A stranger sends a wrong-number text or matches on a dating app, moves the chat to WhatsApp or Telegram, and spends weeks building rapport before introducing a crypto or forex platform. The believability of that whole arc depends on the photos. Victims report that they trusted the relationship because the person sent dozens of selfies, video clips, and lifestyle shots that looked like a coherent life.

In practice those images come from a small set of sources:

- Public Instagram or TikTok accounts of attractive strangers, scraped in bulk

- LinkedIn headshots of executives or doctors, used to fake a wealthy mentor persona

- Military deployment photos pulled from Facebook, used in romance variants

- Asian model photo sets resold across scam networks, often paired with a persona such as a finance professional in Singapore or Hong Kong

When a face appears across multiple unrelated identities or surfaces on scam-warning forums, that is a strong signal the persona is fabricated.

Where face search fits into investigation

Running the photos through a reverse face search is one of the fastest sanity checks before sending money or continuing a relationship. Useful patterns to look for:

- The same face on a real, long-standing social profile under a different name and country

- Matches on scam awareness sites, romance scam reporting forums, or watchdog blogs

- Modeling agency portfolios, stock-style image sets, or influencer pages that explain why the photos look so polished

- Old archived posts that predate the persona's claimed history

Face search will not always produce a match. Scam rings increasingly use lightly edited photos, AI-generated faces, or live video deepfakes during short calls to defeat basic checks. A clean search result does not confirm the person is real. It only means the specific images did not surface elsewhere in the indexed web.
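
The patterns above can be checked by hand, but a small script helps keep the review consistent across several photos. The following is a minimal sketch, assuming you have copied each hit from a results page into a simple record yourself. The `Match` fields, the `source_type` labels, and the `review_matches` function are all hypothetical names chosen for illustration and do not correspond to any face search tool's API.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Match:
    """One hit copied by hand from a results page (hypothetical shape)."""
    url: str
    name_on_page: Optional[str]  # name shown on the matched page, if any
    first_seen: Optional[date]   # earliest date you can find for the post
    source_type: str             # assumed labels: "social", "scam_report", "portfolio", "other"


def review_matches(claimed_name: str, claimed_history_start: date,
                   matches: list[Match]) -> list[str]:
    """Return plain-language findings for the patterns listed above."""
    if not matches:
        # A clean result does not confirm the person is real.
        return ["inconclusive: no matches in the indexed web"]

    findings = []
    claimed = claimed_name.strip().lower()
    for m in matches:
        if m.name_on_page and m.name_on_page.strip().lower() != claimed:
            findings.append(f"different name ({m.name_on_page}) at {m.url}")
        if m.source_type == "scam_report":
            findings.append(f"listed on a scam-reporting page: {m.url}")
        if m.source_type == "portfolio":
            findings.append(f"modeling or stock-style source: {m.url}")
        if m.first_seen and m.first_seen < claimed_history_start:
            findings.append(f"post predates the claimed history: {m.url}")
    return findings or ["matches found, none contradict the claimed identity"]


if __name__ == "__main__":
    # Placeholder data, not a real case.
    hits = [Match("https://example.com/profile", "Jane Example", date(2018, 5, 1), "social")]
    for line in review_matches("Lily Placeholder", date(2023, 1, 1), hits):
        print(line)
```

An empty match list is reported as inconclusive rather than safe, which mirrors the caveat above.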

Red flags that pair with face evidence

Face search results matter most when read alongside behavioral signs. Neither is conclusive alone; it is the combination that supports a confident read.

- A new contact pushes the chat to Telegram or WhatsApp within days

- Photos look professionally lit, magazine-style, or oddly inconsistent in background

- The person refuses live video, or only joins calls with the camera angled away

- They introduce a trading platform, mentor, or "uncle" with insider tips

- A small withdrawal works, then larger deposits get blocked behind tax or verification fees

- They coach you on what to tell your bank

If a reverse face search returns a different name, a victim's report, or a stock-photo source, treat the entire investment pitch as fraudulent regardless of how the conversation has felt.
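
One way to make that rule explicit is to write it down as a small decision function. This is only a sketch under stated assumptions: the flag names below are hypothetical labels for the red flags in this section, and the returned wording is illustrative rather than any formal risk score.

```python
# Hypothetical labels for the behavioral red flags listed above.
BEHAVIORAL_FLAGS = {
    "moved_to_private_chat",        # pushed to Telegram or WhatsApp within days
    "studio_quality_photos",        # magazine-style or inconsistent backgrounds
    "avoids_live_video",            # refuses video or angles the camera away
    "introduces_trading_platform",  # platform, mentor, or "uncle" with tips
    "withdrawal_blocked_by_fees",   # small withdrawal works, larger ones blocked
    "coaches_bank_answers",         # tells you what to say to your bank
}

# Face search outcomes that settle the question on their own.
DECISIVE_FACE_EVIDENCE = {"different_name", "victim_report", "stock_photo_source"}


def read_situation(face_evidence: set[str], behavior: set[str]) -> str:
    """Combine face search outcomes with behavioral flags into one read."""
    unknown = behavior - BEHAVIORAL_FLAGS
    if unknown:
        raise ValueError(f"unrecognized behavioral flags: {sorted(unknown)}")

    # Decisive face evidence overrides how the conversation has felt.
    if face_evidence & DECISIVE_FACE_EVIDENCE:
        return "treat the investment pitch as fraudulent"
    if behavior:
        return (f"{len(behavior)} behavioral red flag(s); "
                "a clean face search does not clear them")
    return "no signals recorded; keep verifying independently"


if __name__ == "__main__":
    print(read_situation({"different_name"}, {"avoids_live_video"}))
```

The ordering is the design choice that matters: decisive face evidence is checked first, so no number of reassuring conversations can outweigh it.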

What face search cannot prove

A face match is evidence about an image, not a verdict on a person. The real owner of the photos is almost always an innocent third party whose pictures were stolen. Finding their authentic profile does not tell you who is actually messaging you, where the operation is based, or whether your money can be recovered. Face search also struggles with cropped images, heavy filters, and AI-generated composites, which have no original source anywhere in the indexed web.

For pig butchering victims, image evidence is most useful for two things: stopping the scam earlier, and documenting the fake persona for reports to banks, exchanges, and cybercrime units. Recovery still depends on speed of reporting, not on identifying the face behind the chat.
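
For the documentation step, it can help to record what was saved and when, so the same files can later be handed to a bank, exchange, or cybercrime unit. Below is a minimal sketch, assuming screenshots have been saved to a local folder and matched page links collected by hand. The function names, the folder layout, and the manifest fields are illustrative assumptions, not a format any agency requires.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def build_manifest(screenshot_dir: str, links: list[str]) -> dict:
    """Hash each saved screenshot and record the matched links with a timestamp."""
    files = []
    for path in sorted(Path(screenshot_dir).iterdir()):
        if path.is_file():
            # SHA-256 lets you show later that a file has not been altered.
            digest = hashlib.sha256(path.read_bytes()).hexdigest()
            files.append({"file": path.name, "sha256": digest})
    return {
        "created_utc": datetime.now(timezone.utc).isoformat(),
        "links": links,
        "files": files,
    }


def save_manifest(manifest: dict, out_path: str) -> None:
    """Write the manifest as readable JSON next to the evidence."""
    Path(out_path).write_text(json.dumps(manifest, indent=2), encoding="utf-8")


if __name__ == "__main__":
    # "evidence" is a hypothetical folder name; the link is a placeholder.
    manifest = build_manifest("evidence", ["https://example.com/matched-profile"])
    save_manifest(manifest, "evidence_manifest.json")
```

Keeping the original files untouched and sharing copies alongside the manifest preserves the value of the hashes.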

FAQ

What does “Pig Butchering” mean in the context of face recognition search engines?

“Pig butchering” is a long-con scam where criminals build trust (often via dating, investing, or job approaches) and then pressure the victim into sending money or crypto. In the context of face recognition search engines, the term usually comes up because scammers often use stolen or synthetic profile photos; face search can help check whether the same face appears across many unrelated profiles or websites.

How can a face recognition search engine help detect pig-butchering scams using stolen photos?

A face search can surface where the same face (or very similar faces) appears online, such as the same image reused across multiple names, locations, or platforms, or an older “original” source that contradicts the story you’re being told. Results are best treated as investigative leads: look for consistent identity clues across multiple independent pages (same name, long-term history, consistent context), not just one strong-looking match.

What face-search result patterns are common red flags for a pig-butchering scam?

Common red flags include: (1) the same face linked to multiple different names/usernames, (2) the same headshot appearing across many “dating/investment” contexts, (3) matches that point to stock-photo-like portfolios or unrelated persona pages, (4) many reposts/screenshots with no clear original account, and (5) results that show a mix of near-matches suggesting heavy filters, face editing, or AI-generated/face-swapped imagery.

If FaceCheck.ID (or another tool) returns matches, does that prove someone is running a pig-butchering scam?

No. Matches do not prove criminal intent, and a match can also be a look-alike, a repost, or a mislabeled page. Treat any face-search hit, including FaceCheck.ID results, as a starting point for verification: compare multiple photos from the same person, check whether the matched pages have a long-running consistent identity trail, and use non-face signals (consistent handles, prior posts, friends/followers, timestamps, and corroboration on multiple platforms).

What’s a safe, practical workflow to use face recognition search results when you suspect pig butchering?

Use a careful sequence: (1) run face searches on several different photos the person provided (not just one), (2) prioritize higher-quality, front-facing, unfiltered images to reduce false matches, (3) open top results and verify context (does the page show the same person, name, and timeline?), (4) cross-check with reverse image search for exact duplicates, and (5) decide actions based on independent verification (e.g., video call + consistency checks), not on face-search similarity alone. If results suggest photo theft, stop sending money, preserve evidence (screenshots/links), and report through the platform and relevant financial/consumer fraud channels.
