The New Face of Digital Deception: FraudGPT, Romance Scams, and Protecting Yourself in 2026

"On the Internet, nobody knows you're a dog." Peter Steiner, The New Yorker, 1993

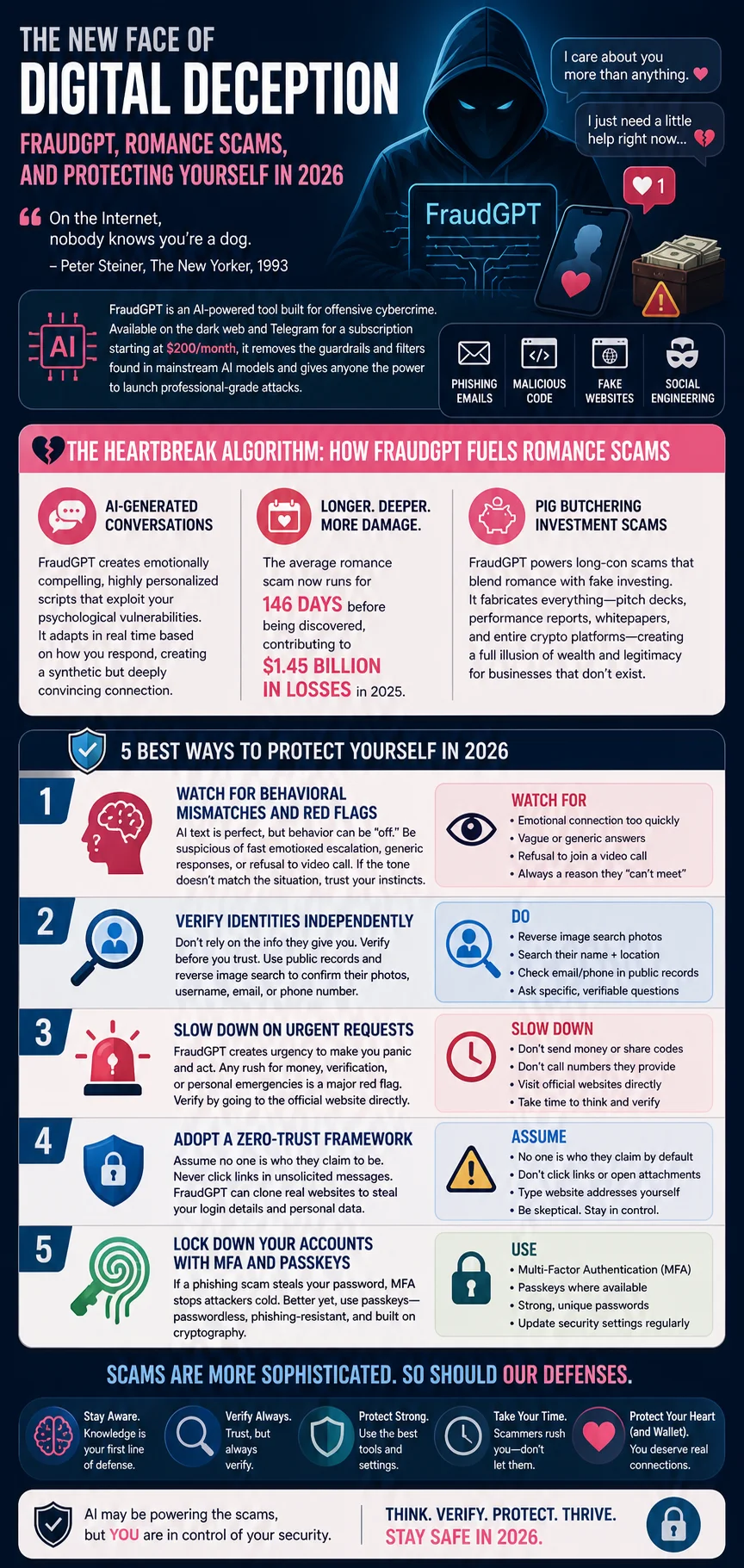

The era of identifying scams by their awkward phrasing and obvious typos is over; today's scams have, regrettably, gone to college. Cybercrime has entered a new frontier with the emergence of FraudGPT, an AI-powered tool built explicitly for offensive cybercrime that operates without the safety guardrails or content filters found in mainstream AI models like ChatGPT. Available on the dark web and Telegram for a subscription fee starting at $200 per month (because cybercrime, of course, has gone SaaS), FraudGPT acts as a comprehensive toolkit that empowers even completely inexperienced scammers to launch professional-grade attacks.

While FraudGPT is widely used to generate flawless phishing emails, malicious code, and fake websites, its application in social engineering—particularly romance scams—is one of its most devastating uses.

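In this article, we'll look at how FraudGPT powers modern romance and "pig butchering" scams, and at the best ways to protect yourself in 2026.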

The Heartbreak Algorithm: How FraudGPT Fuels Romance Scams

Traditionally, romance scammers relied on manually copying and pasting scripts, which limited their scale and often felt disjointed. Today, FraudGPT is used to generate emotionally compelling conversational scripts that are tailored to exploit the specific psychological vulnerabilities of a target.

What makes FraudGPT so dangerous is its ability to adapt. Based on how a victim responds, the AI generates follow-up messages that feel highly personal, empathetic, and genuine. This engineered authenticity creates a synthetic but deeply convincing emotional connection. Because the interaction feels so real, the average romance scam now runs for 146 days before being discovered, contributing to a staggering $1.45 billion in losses in 2025.

FraudGPT is also a driving force behind "pig butchering" (yes, that's really what the industry calls it), a highly sophisticated long-term scam that blends romantic relationship-building with investment fraud. In these schemes, scammers spend months grooming their victims. FraudGPT not only generates the conversational scripts used to build trust, but it can also fabricate the entire illusion of wealth and legitimacy by generating fake pitch decks, investment performance reports, and comprehensive whitepapers for fake cryptocurrency platforms — essentially a full investor-relations apparatus for businesses that don't exist.

Best Ways to Protect Yourself from Scams in 2026

Because FraudGPT produces flawless text in any language and personalizes its messaging using scraped data, the traditional advice to watch for typos and clumsy grammar no longer works. To protect yourself in 2026, rely instead on behavioral verification and robust security practices.

1. Watch for Behavioral Mismatches and Red Flags

AI-generated text is grammatically perfect — a compliment the AI would appreciate, if it weren't trying to drain your bank account. But it can sometimes feel behaviorally "off" or incongruent with a normal human interaction. In the context of online dating, be highly suspicious of an emotional connection that escalates unusually fast, responses that feel slightly generic, or a persistent refusal by the other person to join a video call. Trust your instincts if the tone of a conversation doesn't quite match the situation.

2. Verify Identities Independently

Romance and investment scams depend entirely on you placing absolute trust in someone you have never verified in person. Before allowing a relationship to deepen or discussing finances, take steps to verify the person's identity. Run their profile photo through a reverse image search, and check their username, email, or phone number against public records, to confirm that the person behind the screen is actually who they claim to be.

3. Slow Down on Urgent Requests

FraudGPT excels at crafting high-pressure messages designed to make you panic and act before you think. Any sudden urgency surrounding money, account verification, or a supposed personal crisis is a massive red flag. Never use the contact information provided in a suspicious message; instead, slow down and verify the request by navigating directly to an organization's official website.

4. Adopt a Zero-Trust Framework

Assume that no one is who they say they are by default, whether the message is an email, a text, or a chat on a dating app. Never click on links in unsolicited messages, as FraudGPT can easily build visually identical clones of legitimate bank or delivery websites to steal your information.

5. Lock Down Your Accounts with MFA and Passkeys

If a sophisticated phishing scam does manage to trick you into handing over your password, Multi-Factor Authentication (MFA) is your strongest technical defense, because the attacker still can't use your credentials without the second verification factor. Where possible, switch to passwordless authentication methods such as passkeys. Passkeys use public-key cryptography and are tied to the legitimate website's domain, so there is no password to hand over and a cloned site can't capture a usable credential. That makes them the closest thing the security industry has to a phishing antidote.

Frequently Asked Questions

How do I actually do a reverse image search on someone's profile photo?

Here's the catch most people miss: Google Images and Bing aren't doing facial recognition — they match the exact image. If a scammer uses a photo that hasn't been shared anywhere else online, those tools come up empty even when the face belongs to a real person whose pictures are all over the internet under their actual name. For actual facial recognition that finds different photos of the same person across the web, use FaceCheck.ID. Save the profile photo, upload it, and you'll see whether the same face turns up under a different name on a real LinkedIn account, an old Facebook profile, or a model's portfolio. One pattern worth knowing: scammers disproportionately steal photos of military personnel and doctors — those jobs conveniently explain why the person can never meet in person.

I think I'm in a romance scam right now — how can I tell for sure?

There are four signs to look for: you've never met in person despite months of conversation; video calls keep getting "postponed" by technical problems; "I love you" arrived unusually early; and money has come up, either as a crisis they need help with or as an investment opportunity they want to share. If three of the four apply, treat it as a scam. Stop sending money, save the messages (you'll need them to report it), and don't announce that you've figured it out: scammers often try to extract one last "emergency" payment when they sense the end.

Can deepfakes fool a video call now? Is seeing someone on camera still proof?

Less than it used to be, but not nothing. Live face-swap tech has gotten good, but it still breaks in predictable places: ask the person to turn fully sideways (profiles trip up most models), wave a hand slowly in front of their face (the swap lags), or hold up a specific number of fingers against their cheek. A real person does these effortlessly. A scammer running a filter usually cuts the call.

I've already sent money. Can I get any of it back?

Honestly, probably not — but report it anyway. Wire transfers and cryptocurrency are usually gone within hours. Gift cards are gone the moment the codes are read aloud. Credit-card charges can sometimes be reversed. File with the FBI's IC3 (ic3.gov), the FTC (reportfraud.ftc.gov), and your bank immediately, and ask the payment platform (Venmo, Cash App, Zelle) to freeze the receiving account. Reports occasionally lead to recoveries months later, and they always help build the cases that eventually catch operators.

Someone I love is in a romance scam and won't listen to me. What do I do?

Don't lead with "you're being scammed" — that puts you on the same side as the people they think are trying to keep them and their partner apart. Ask questions instead: Have you met in person? Has the camera ever come on? Has any money been discussed? Most victims half-know already; questions let them get there on their own. If money is actively moving, contact their bank's fraud team or, in severe cases involving an older relative, Adult Protective Services. And brace for the aftermath — even once they accept it, many people grieve the relationship, because to them it was real. Be patient with that part.
