Privacy Laws and Face Search

Privacy laws shape what a face-search engine like FaceCheck.ID can index, what users can do with results, and how images of real people are allowed to circulate online. They also define the boundary between legitimate identity verification, journalism, and investigation on one side, and stalking, harassment, or unauthorized biometric profiling on the other.

How privacy laws apply to face search

Reverse face search sits at the intersection of two regulated areas: biometric data and publicly posted images. Most modern privacy frameworks treat a face template, the mathematical representation extracted from a photo, as biometric or sensitive personal data. That places it in a stricter category than a name or email address.
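
To make the idea of a face template concrete, here is a minimal Python sketch of how two templates might be compared. The four-number vectors and the `cosine_similarity` helper are illustrative assumptions. Real systems use much longer vectors and their own scoring methods, and nothing here describes how FaceCheck.ID works internally.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine similarity between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("templates must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("templates must be non-zero")
    return dot / (norm_a * norm_b)


# Hypothetical templates, shortened to 4 dimensions for readability.
template_a = [0.12, -0.40, 0.33, 0.85]   # photo 1 of person X (made up)
template_b = [0.10, -0.38, 0.35, 0.80]   # photo 2 of person X (made up)
template_c = [-0.70, 0.20, 0.10, -0.05]  # photo of someone else (made up)

score_same = cosine_similarity(template_a, template_b)  # close to 1
score_diff = cosine_similarity(template_a, template_c)  # much lower

print(f"same person: {score_same:.3f}, different person: {score_diff:.3f}")
```

The relevant point for privacy law is that the vector, and not only the photo it came from, can single out a person, which is why many frameworks regulate it as biometric data.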

The image itself is treated differently. A profile photo posted publicly on a forum, news article, or company About page is generally considered public content, but the act of running it through a face recognition system can trigger separate rules around biometric processing, automated decision-making, and consent. This is why the legal status of a face match often depends less on where the photo was found and more on what is done with it afterward.

Key areas where privacy laws affect face-search workflows:

- Lawful basis for processing biometric identifiers, often requiring explicit consent or a narrow exception such as fraud prevention, journalism, or law enforcement

- Right of access and deletion, letting people request removal of their face data from search indexes

- Restrictions on transferring biometric data across borders

- Obligations to disclose breaches involving facial templates

- Limits on using face matches to make automated decisions about a person

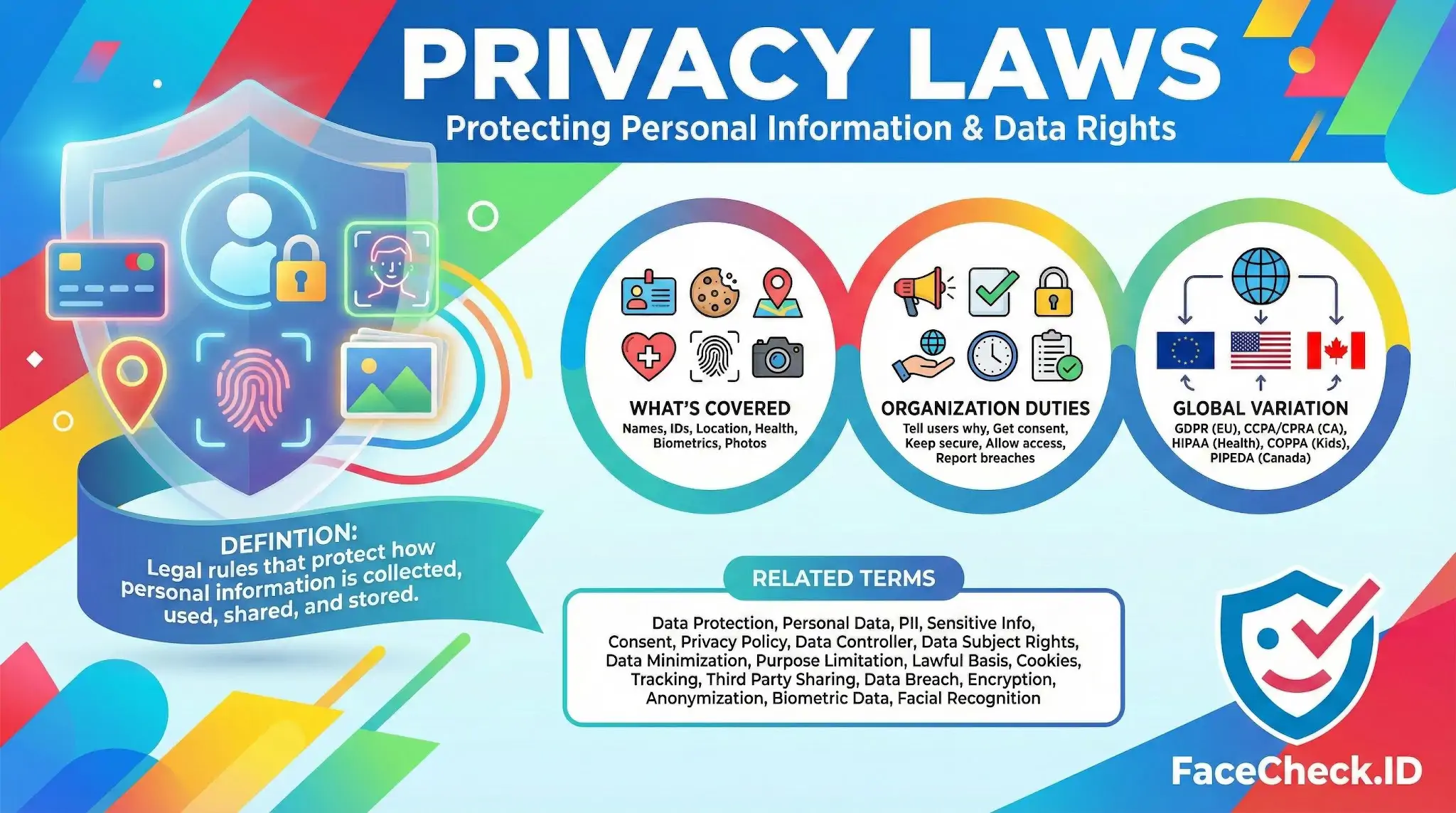

Major frameworks that shape face-recognition use

GDPR (EU and EEA). Article 9 treats biometric data processed to uniquely identify a person as a special category, which may be processed only with explicit consent or under another narrow legal basis. Several European regulators, including those in Italy, Greece, France, and the Netherlands, have fined Clearview AI for scraping images without a lawful basis, even though those images were public.

Illinois BIPA. The Biometric Information Privacy Act has driven the largest face-recognition settlements in the United States. It requires written consent before collecting a face template and gives individuals a private right to sue.

CCPA and CPRA (California). These laws treat biometric information as sensitive personal information and give consumers the right to know what is collected, to delete it, and to limit its use.

Texas CUBI and Washington's biometric law. Both are state-level rules with consent and notice requirements, but neither includes a private right of action like BIPA's. Enforcement is left to the state attorney general.

HIPAA, COPPA, PIPEDA, LGPD, PIPL. Each adds sector-specific or country-specific rules that can apply when face data is tied to health records, minors, or cross-border processing.

What this means for interpreting face-match results

Privacy laws affect not just whether a match exists, but what an investigator, journalist, or private individual can do with it. A match found through reverse image search is a lead, not a verified identity, and many jurisdictions limit how such leads can be acted on.

Practical implications:

- Using a face match to deny employment, housing, or insurance can trigger automated decision-making rules

- Sharing a suspected match publicly can create defamation and privacy liability if the person is misidentified

- Storing scraped face images locally may itself constitute biometric processing under GDPR or BIPA

- Removal requests submitted by data subjects must usually be honored within fixed timelines, such as one month under GDPR or 45 days under CCPA, each extendable in limited cases
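
As a rough illustration of the last point, the sketch below computes a response deadline for a removal request. It assumes the baseline windows of one calendar month under GDPR and 45 calendar days under CCPA, and it ignores extensions, identity-verification pauses, and regulator guidance on counting days. The function names `add_months` and `response_deadline` are hypothetical.

```python
import calendar
from datetime import date, timedelta


def add_months(d, months):
    """Shift a date by whole calendar months, clamping to the month's last day."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def response_deadline(received, regime):
    """Return the baseline deadline for a request received on `received`.

    Assumed windows (simplified): GDPR = one calendar month, CCPA = 45 days.
    """
    if regime == "GDPR":
        return add_months(received, 1)
    if regime == "CCPA":
        return received + timedelta(days=45)
    raise ValueError(f"no deadline rule configured for {regime!r}")


received = date(2024, 1, 31)
gdpr_due = response_deadline(received, "GDPR")
ccpa_due = response_deadline(received, "CCPA")
print(gdpr_due, ccpa_due)
```

A request received on the last day of a month shows why the calendar-month rule needs clamping: there is no February 31, so the sketch falls back to the last day of February.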

Limits and gray areas

Privacy laws do not prevent lookalikes, false positives, or stale photos from appearing in search results, and they do not validate that a match is the correct person. A confident face-search result still requires human review of context, such as account history, image metadata, and corroborating details.

Legal compliance also varies by user role. A consumer checking whether a dating profile photo appears elsewhere, a fraud team verifying a customer, and a journalist confirming a source operate under different exemptions and obligations. The same face match can be lawful in one context and prohibited in another.

Privacy law tells you what is allowed. It does not tell you whether the match is right. That judgment still belongs to the person reading the results.

FAQ

Which privacy laws commonly apply to face recognition search engines, and why does location matter?

Privacy rules differ by jurisdiction and can apply based on where the user is located, where the person in the photo lives, and where the service operates. Commonly relevant regimes include the EU/UK GDPR (and similar laws), U.S. state privacy laws (e.g., CCPA/CPRA in California), and biometric-specific laws in some places. Because obligations can change by geography (notice, consent, opt-out, retention, and data-subject rights), the same face search can be treated very differently depending on the countries/states involved.

Do privacy laws treat facial embeddings (faceprints) as biometric data, and what does that mean for compliance?

In many jurisdictions, facial templates/embeddings derived from images can be classified as biometric data (or “special category/sensitive” data) when used to identify or uniquely distinguish a person. That usually raises the compliance bar: stricter lawful-basis requirements, stronger security controls, clearer notices, limits on retention and sharing, and more robust user and data-subject rights handling.

Is it lawful to collect and index faces from public websites for a face search engine?

“Publicly accessible” does not automatically mean “free to process for biometric identification.” Some privacy laws still restrict scraping, repurposing, and biometric processing without appropriate legal grounds, transparency, and respect for opt-out/erasure rights. Legality often turns on factors like purpose, notice, consent requirements (where applicable), how the data was obtained, whether the processing is proportional, and whether the service honors removal requests.

What privacy-law duties usually apply to storing uploaded photos and search logs in face recognition search tools?

Many privacy frameworks require data minimization and purpose limitation (collect only what’s needed), clear retention limits (delete when no longer necessary), reasonable security safeguards, and user transparency about what is stored (uploaded photos, derived embeddings, IP addresses, timestamps, device identifiers, and query logs). Keeping uploads and logs longer than necessary, or using them for unrelated purposes, can increase legal risk and user harm.
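
One way a retention limit might be enforced in practice is a scheduled purge that deletes anything older than its allowed window. The sketch below is illustrative only: the record kinds, the `RETENTION` periods, and the `StoredRecord` type are hypothetical and are not taken from any law or from any provider's actual policy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical retention windows per record kind (assumed values).
RETENTION = {
    "uploaded_photo": timedelta(days=1),
    "embedding": timedelta(days=1),
    "query_log": timedelta(days=30),
}


@dataclass
class StoredRecord:
    record_id: str
    kind: str
    created_at: datetime


def is_expired(record, now):
    """True when the record has outlived the window for its kind."""
    return now - record.created_at > RETENTION[record.kind]


def purge(records, now):
    """Split records into (kept, deleted) based on their retention window."""
    kept, deleted = [], []
    for record in records:
        (deleted if is_expired(record, now) else kept).append(record)
    return kept, deleted


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
records = [
    StoredRecord("photo-1", "uploaded_photo", now - timedelta(days=2)),
    StoredRecord("log-1", "query_log", now - timedelta(days=10)),
]
kept, deleted = purge(records, now)
print([r.record_id for r in kept], [r.record_id for r in deleted])
```

Keeping the windows in one table makes the retention policy easy to audit against what the privacy notice tells users.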

How should users handle privacy-law risk when using a tool like FaceCheck.ID for face searches?

Use the service only for legitimate purposes and avoid uploading images that contain bystanders, minors, or sensitive context unless you have a strong legal and ethical basis. Prefer a tightly cropped face image you have the right to use, avoid re-sharing results, and treat matches as investigative leads rather than identity proof. Also check the provider’s privacy policy and removal/opt-out process (including FaceCheck.ID’s) so you understand what may be stored, for how long, and how to request deletion or delisting where available.
